Is OpenClaw, the much-talked-about AI technology, genuinely revolutionary, or just another overhyped product? After all the buzz, some artificial intelligence experts suggest that OpenClaw may not live up to what the media coverage and marketing promised.

Understanding such claims requires diving into what OpenClaw actually offers, why it sparked curiosity, and where it falls short from a practical AI research point of view. This article takes a closer look, sharing insights from professionals who have seen similar technologies play out in real-world scenarios.

How does OpenClaw position itself in the AI landscape?

OpenClaw has been introduced as an innovative AI platform designed to enhance learning algorithms and streamline AI model deployment. It promises improved efficiency and novel techniques for data processing. Essentially, OpenClaw aims to provide developers with better tools to build smarter AI applications more quickly.

AI research experts, however, caution that the core methods OpenClaw uses aren’t particularly new. Its architecture borrows from existing techniques in the field, meaning it may offer incremental improvements but not groundbreaking innovations.

Why do some experts say OpenClaw isn’t that exciting?

One expert quoted in TechCrunch said, “From an AI research perspective, this is nothing novel.” This reflects a common sentiment in the AI community when a new product repackages known concepts rather than pushing boundaries.

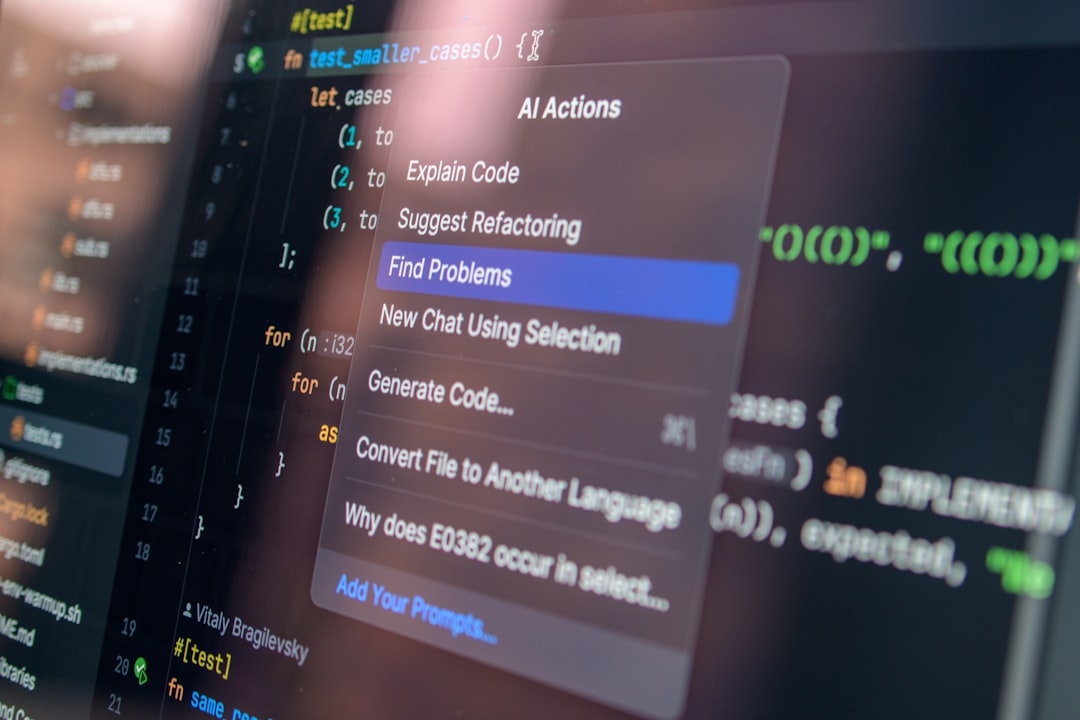

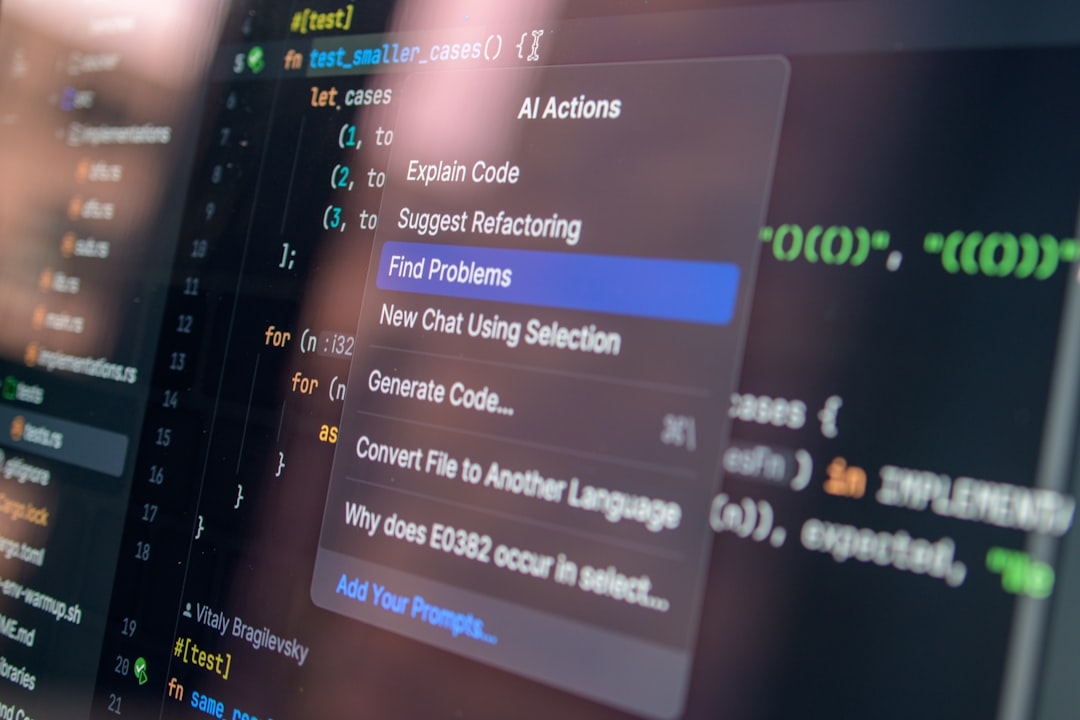

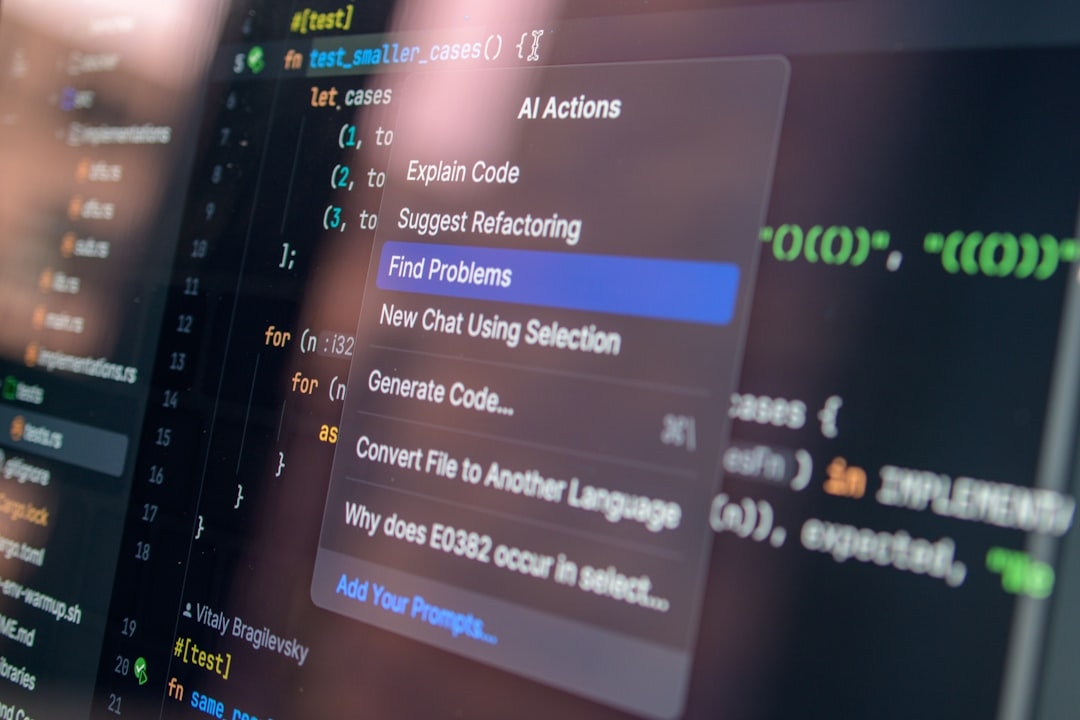

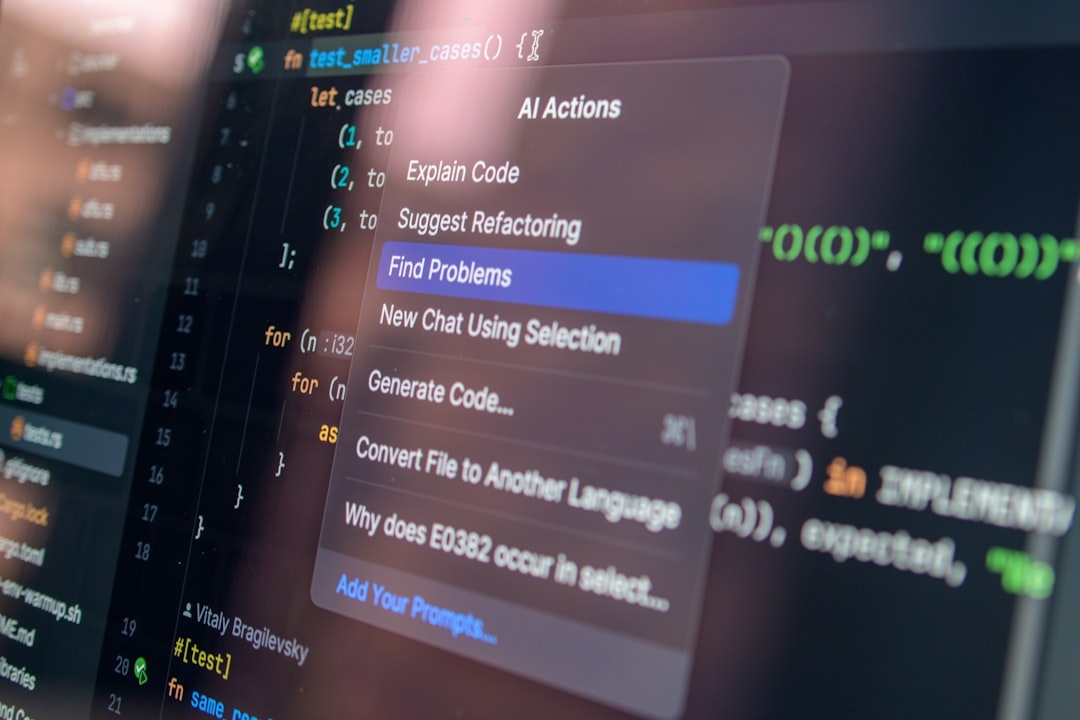

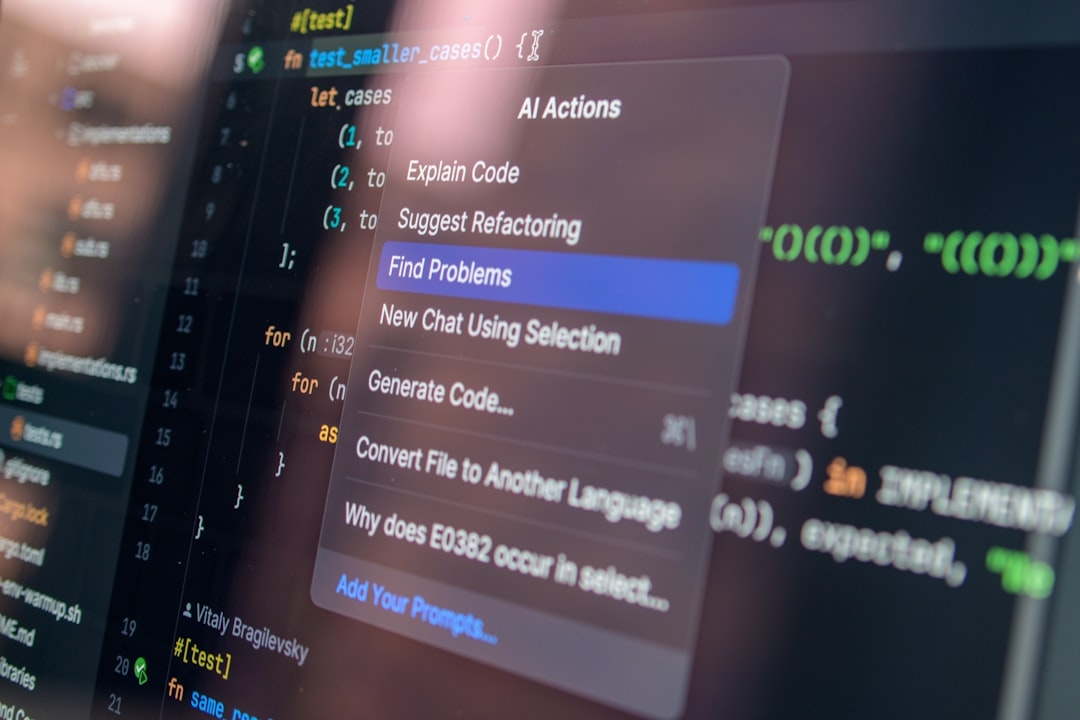

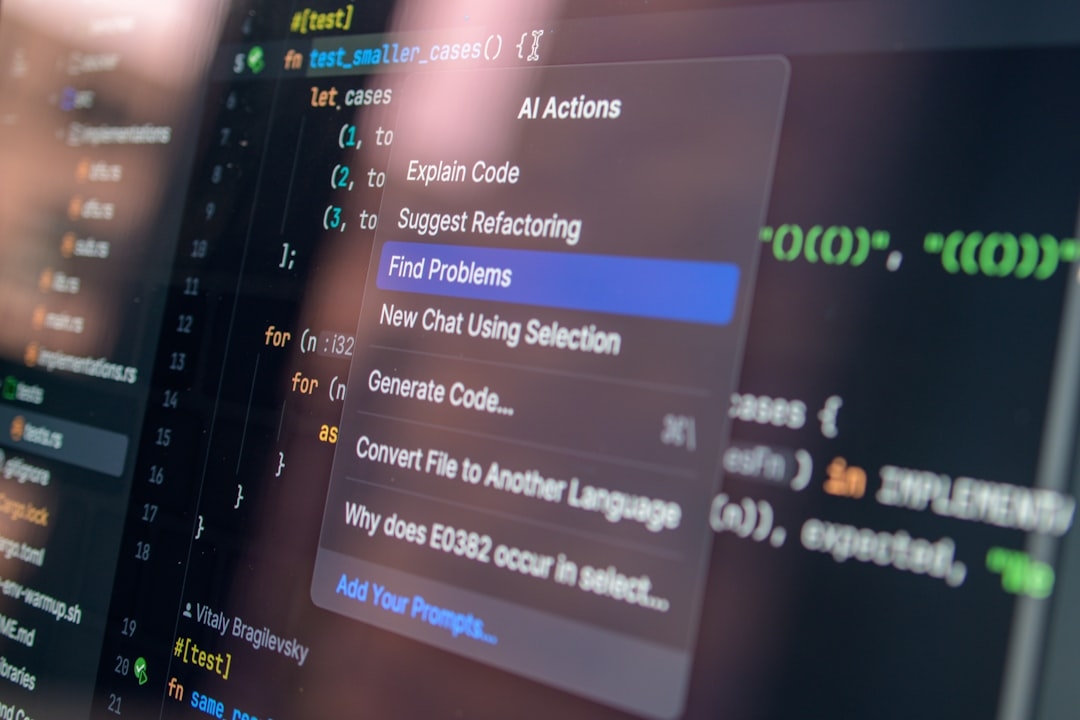

Technically, OpenClaw utilizes standard machine learning frameworks and known optimization algorithms. While it may package these effectively, the underlying principles are well-established. For a researcher or practitioner who has worked with state-of-the-art AI models, the platform’s advances might feel incremental at best.

Understanding the Technology Behind OpenClaw

At its core, OpenClaw builds upon machine learning, a branch of AI in which systems learn patterns from data without being explicitly programmed for every task. It integrates familiar components such as neural networks and gradient optimization. However, it does not introduce fundamentally new algorithms or mathematical frameworks that would represent a paradigm shift in AI research.

This reality doesn’t diminish its potential value to developers who seek streamlined deployment tools. Yet from a research innovation standpoint, OpenClaw’s contribution is modest.

What practical challenges should you consider with OpenClaw?

Before adopting OpenClaw, weigh four practical factors: time, cost, risk, and compatibility. Adopting a new AI platform means training teams and adjusting workflows, which adds overhead and can delay project timelines.

- Time: Integrating OpenClaw into established AI pipelines could demand weeks of adaptation and testing.

- Cost: Licensing fees or infrastructure changes could escalate expenses unexpectedly.

- Risks: Your AI models’ performance may not improve significantly, which calls the return on investment into question.

- Compatibility: Some legacy systems might not be compatible with OpenClaw’s architecture, limiting deployment options.

While such factors might not deter teams eager for the latest tools, they underscore why cautious evaluation is essential.
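The time and cost factors above can be turned into a rough payback estimate. The sketch below is illustrative only: the function name, its parameters, and every figure in the example are assumptions made up for this article, not OpenClaw pricing or measured savings.

```python
from typing import Optional


def payback_months(
    integration_weeks: float,
    weekly_team_cost: float,
    one_time_fees: float,
    monthly_fees: float,
    monthly_savings: float,
) -> Optional[float]:
    """Estimate how many months it takes for a new tool to pay for itself.

    All inputs are your own estimates (hypothetical here).
    Returns None when the recurring fees cancel out the expected savings,
    meaning the switch would never pay back.
    """
    # Upfront cost: team time spent on integration plus any one-time fees.
    upfront_cost = integration_weeks * weekly_team_cost + one_time_fees
    # Net benefit per month once the tool is in use.
    net_monthly_benefit = monthly_savings - monthly_fees
    if net_monthly_benefit <= 0:
        return None
    return upfront_cost / net_monthly_benefit


if __name__ == "__main__":
    # Made-up numbers: 4 weeks of integration at 10,000 per week,
    # 5,000 in one-time fees, 1,000 per month in licensing,
    # and 4,000 per month in expected savings.
    months = payback_months(4, 10_000, 5_000, 1_000, 4_000)
    print(months)  # 15.0
```

If the result is longer than the period you expect to keep using the tool, or the function returns `None`, the switching cost is hard to justify on these estimates alone.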

How can you evaluate OpenClaw’s value for your AI projects?

Instead of relying on hype, establish clear criteria to judge OpenClaw’s fit:

- Performance gains: Does it measurably enhance your model’s accuracy or efficiency?

- Integration ease: How smoothly can it mesh with your current systems?

- Support and documentation: Does the vendor provide adequate resources to troubleshoot and optimize usage?

- Community and ecosystem: Is there a vibrant user base to share experiences and best practices?

Evaluating these points will clarify whether OpenClaw justifies the switching costs.
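One way to make these criteria comparable is a simple weighted scorecard. This is a minimal sketch under stated assumptions: the `Criterion` class, the 0 to 5 rating scale, and the weights and scores in the example are all hypothetical and should be replaced with your team's own judgment.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Criterion:
    name: str
    weight: float  # relative importance (hypothetical values below)
    score: int     # your own rating from 0 (poor) to 5 (excellent)


def weighted_score(criteria: Sequence[Criterion]) -> float:
    """Return the weight-normalized average score on the 0-5 scale."""
    total_weight = sum(c.weight for c in criteria)
    if total_weight <= 0:
        raise ValueError("total weight must be positive")
    for c in criteria:
        if not 0 <= c.score <= 5:
            raise ValueError(f"score for {c.name!r} must be between 0 and 5")
    return sum(c.weight * c.score for c in criteria) / total_weight


if __name__ == "__main__":
    # Illustrative ratings only; they are not measurements of OpenClaw.
    scorecard = [
        Criterion("Performance gains", weight=4.0, score=2),
        Criterion("Integration ease", weight=3.0, score=3),
        Criterion("Support and documentation", weight=2.0, score=4),
        Criterion("Community and ecosystem", weight=1.0, score=3),
    ]
    print(weighted_score(scorecard))  # 2.8
```

Scoring your current stack with the same weights gives a baseline to compare against, which is more informative than judging the new tool's number in isolation.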

Practical Considerations When Testing OpenClaw

Experience with earlier launches shows that new AI tools often fall short of expectations during the initial rollout. Real-world deployments reveal bugs, scalability issues, and edge cases that weren’t obvious in demos.

Allocate time for pilot projects that limit exposure to critical systems. This approach helps you gather data-driven feedback without jeopardizing key operations. Keep in mind that marketing materials frequently emphasize ideal conditions rather than daily challenges encountered by developers.
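For the data-driven feedback a pilot is meant to produce, a rough comparison of repeated runs can be sketched as follows. This is a heuristic, not a formal statistical test: the function name, the 2% threshold, and the accuracy figures are assumptions chosen for illustration, and the metric is assumed to be one where higher is better.

```python
from statistics import mean, stdev
from typing import Sequence


def is_meaningful_gain(
    baseline_runs: Sequence[float],
    candidate_runs: Sequence[float],
    min_relative_gain: float = 0.02,
) -> bool:
    """Check whether the candidate setup beats the baseline by more than noise.

    The gain in the mean metric must exceed the larger run-to-run standard
    deviation of the two setups, and must be at least `min_relative_gain`
    of the baseline mean.
    """
    if len(baseline_runs) < 2 or len(candidate_runs) < 2:
        raise ValueError("need at least two runs per setup to estimate noise")
    baseline_mean = mean(baseline_runs)
    if baseline_mean <= 0:
        raise ValueError("baseline mean must be positive")
    gain = mean(candidate_runs) - baseline_mean
    noise = max(stdev(baseline_runs), stdev(candidate_runs))
    return gain > noise and gain / baseline_mean >= min_relative_gain


if __name__ == "__main__":
    # Made-up accuracy values from three pilot runs of each setup.
    current_stack = [0.80, 0.81, 0.79]
    with_new_tool = [0.85, 0.86, 0.84]
    print(is_meaningful_gain(current_stack, with_new_tool))
```

Running each setup several times matters here: a single demo-style run cannot distinguish a real improvement from ordinary variation between runs.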

When should you consider alternative AI solutions?

If OpenClaw’s benefits don’t clearly outweigh the integration efforts and costs, it may be smarter to leverage established frameworks with broad community support. These often provide more mature tooling, extensive examples, and proven reliability for production AI.

What does this mean for AI innovation overall?

OpenClaw’s reception highlights an important dynamic in AI productization: innovation isn’t just about technological novelty but also about execution, accessibility, and real user impact. Solutions that genuinely improve AI workflows require rigorous vetting beyond flashy announcements.

In this case, the technology’s lack of novelty from a research perspective suggests a need for tempered expectations. Still, OpenClaw could prove useful for specific use cases where integration efficiency matters more than algorithmic breakthroughs.

How can you quickly assess new AI tools like OpenClaw?

Here is a quick framework you can apply in 10 to 20 minutes to decide whether a new AI tool is worth exploring further:

- Identify core claims: What specific improvements does the tool promise?

- Match claims to needs: Align these with your project goals and pain points.

- Verify novelty: Do these features represent new capabilities or repackaged existing ones?

- Research support: Check active developer communities and real user reviews.

- Estimate implementation effort: Consider training, integration, and potential downtime.

Applying such a checklist avoids hasty commitments based on marketing hype and focuses on concrete benefits.

Ultimately, OpenClaw's story reminds AI practitioners to approach new technologies critically, balancing optimism with an understanding of real-world trade-offs.
