Why More Compute Isn't Always the Answer in Reinforcement Learning

When reinforcement learning (RL) is applied to complex games like StarCraft II, it is tempting to assume that more computational power will automatically yield better results. But that’s not the whole story. The full StarCraft II game has an enormous state-action space: there are countless possible game situations, each with many possible player decisions. As a result, reward signals (the feedback that tells an agent how well it is doing) are extremely sparse and hard to attribute to individual decisions.

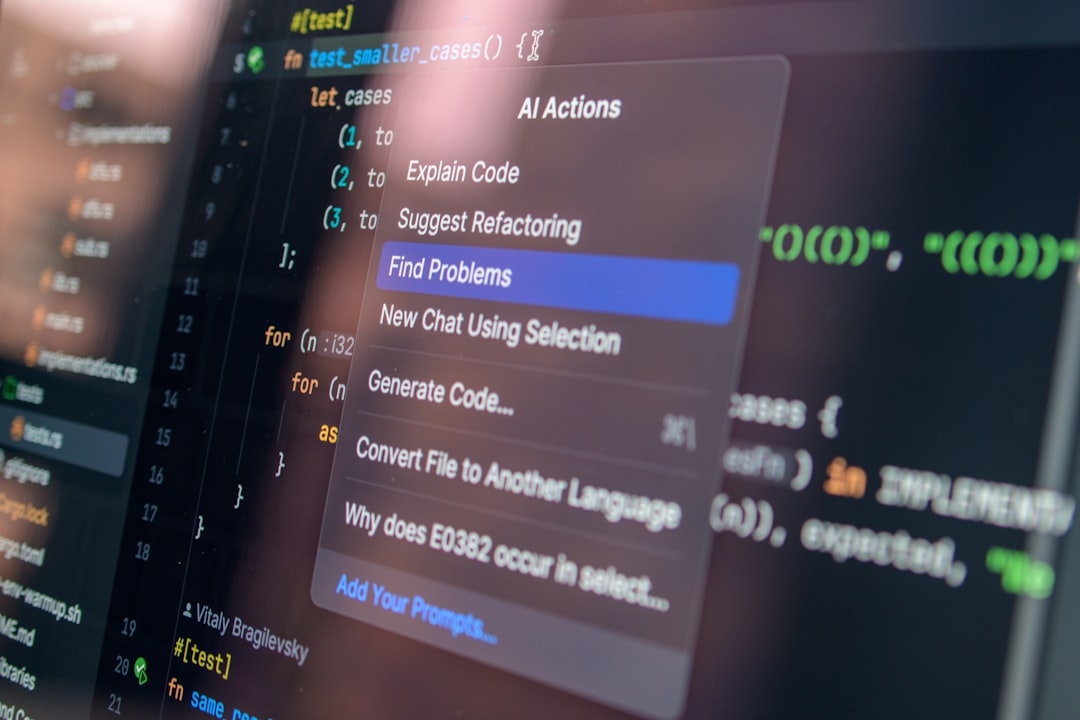

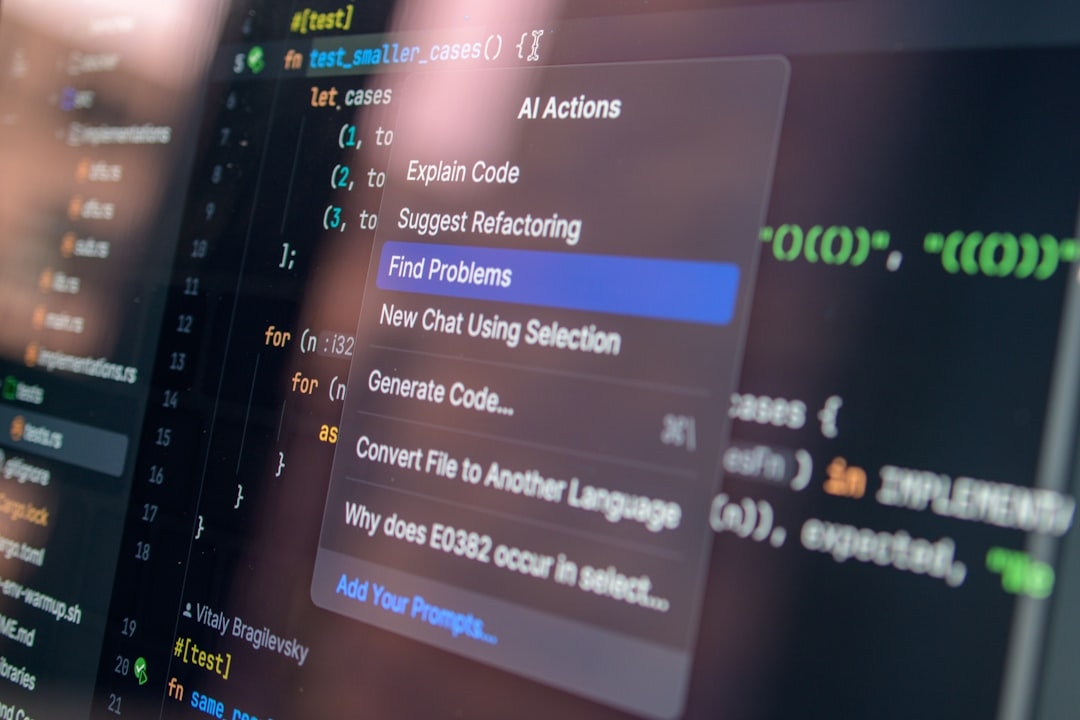

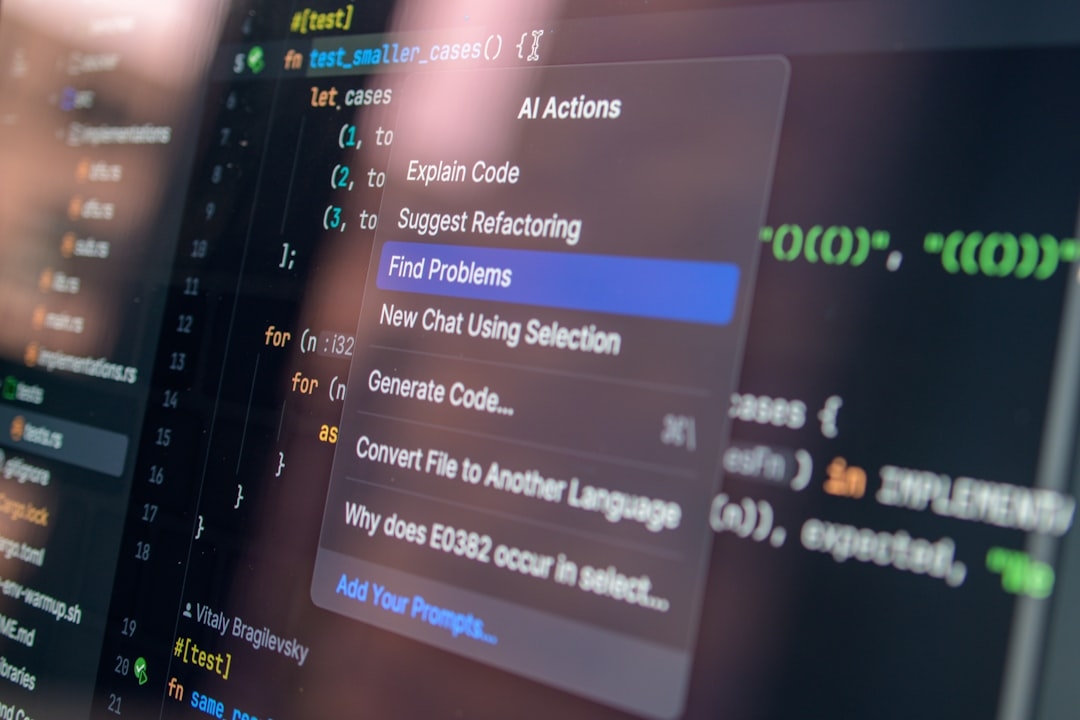

This challenge creates a barrier for researchers who want to experiment with reinforcement learning at a practical level without access to massive compute clusters or specialized hardware. Recognizing this, a new open-source benchmark focusing on a mid-level challenge in StarCraft II has been introduced, aiming to fill the gap between the full game and simple mini-games.

What Is This New StarCraft II Benchmark?

The benchmark offers a stand-alone environment that allows reinforcement learning experiments on a manageable version of StarCraft II. It’s open-source, meaning anyone can access and use it freely, which is a huge plus for democratizing RL research.

Unlike the full game, which is overwhelming due to its complex decision layers and huge action space, or the mini-games, which are too simplistic and not representative of real in-game challenges, this benchmark strikes a balance. It narrows down the state-action space while keeping meaningful complexity, making it a practical testbed for reinforcement learning algorithms.

Why Does This Matter?

- Accessibility: Researchers without access to vast computing resources can still test advanced RL strategies.

- Focused Learning: The environment puts the spotlight on strategic decisions rather than brute-force computation.

- Community Collaboration: Being open-source encourages iterative improvements and shared benchmarks across teams.

How Does This Benchmark Actually Work?

The environment provides a scaled-down version of StarCraft II where the state-action complexity is controlled. In reinforcement learning, the state space represents all possible configurations of the game environment, while the action space refers to all possible actions the agent can take at each state.
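To make the two definitions concrete, here is a minimal sketch that counts states and actions in a toy grid game. The game, the function name `space_sizes`, and all parameters are illustrative assumptions; this is not how StarCraft II or the benchmark actually encodes its state or actions.

```python
def space_sizes(grid_cells: int, n_units: int, n_commands: int) -> dict:
    """Count states, actions, and state-action pairs in a toy grid game.

    Assumptions (illustrative only): each unit occupies one grid cell,
    units may share a cell, and at each step the agent picks one unit
    and gives it one command.
    """
    n_states = grid_cells ** n_units    # one position per unit
    n_actions = n_units * n_commands    # choose a unit, then a command
    return {
        "states": n_states,
        "actions": n_actions,
        "state_action_pairs": n_states * n_actions,
    }


if __name__ == "__main__":
    # A deliberately tiny setup versus a somewhat larger one.
    small = space_sizes(grid_cells=16, n_units=2, n_commands=3)
    large = space_sizes(grid_cells=64 * 64, n_units=10, n_commands=20)
    print("small:", small)
    print("large:", large)
```

Even in this simplified model, the number of states grows exponentially with the number of units, which is why restricting the scenario shrinks the learning problem so sharply.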

By shrinking these spaces, the benchmark makes reward signals less sparse: the agent receives feedback more often and can typically learn faster. This also makes it easier to analyze and improve different reinforcement learning strategies without the noise and complexity that the full game introduces.
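The link between the size of the problem and reward sparsity can be illustrated with a small simulation. The sketch below is a toy model, not the benchmark: a random agent walks along a chain and is rewarded only at the far end, and only one of its actions moves it forward. The function name `reward_hit_rate` and all parameters are hypothetical.

```python
import random


def reward_hit_rate(chain_length: int, n_actions: int,
                    episodes: int = 2000, max_steps: int = 50,
                    seed: int = 0) -> float:
    """Fraction of random-policy episodes that ever reach the reward.

    Toy assumptions: the agent starts at position 0, the only reward
    sits at position chain_length - 1, action 0 moves one step forward,
    and every other action leaves the agent where it is.
    """
    rng = random.Random(seed)
    goal = chain_length - 1
    hits = 0
    for _ in range(episodes):
        position = 0
        for _ in range(max_steps):
            if rng.randrange(n_actions) == 0:   # the single useful action
                position += 1
            if position == goal:
                hits += 1
                break
    return hits / episodes


if __name__ == "__main__":
    # Fewer states and fewer actions: reward is seen in most episodes.
    print("small problem:", reward_hit_rate(chain_length=5, n_actions=2))
    # More states and more actions: reward is almost never seen.
    print("large problem:", reward_hit_rate(chain_length=20, n_actions=8))
```

In the larger setting, a random explorer rarely reaches the reward within the step budget, so a learning algorithm has almost nothing to learn from. That is the sparsity problem in miniature.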

When Should You Consider Using This Benchmark?

This benchmark is ideal for researchers and developers focused on:

- Testing new reinforcement learning algorithms without access to massive compute resources.

- Working in a realistic yet manageable environment that goes beyond toy problems.

- Benchmarking strategies in a standardized setting for community comparison.

But be aware: it’s not a substitute for training on the full game if your goal is top-level performance in actual StarCraft II matches.

When Should You NOT Use the Benchmark?

If you're looking to train an AI agent that needs to handle the complete complexity and unpredictability of the full StarCraft II game, this benchmark falls short. It intentionally limits the state-action space to keep research accessible, which means it lacks some nuanced features of the full game. For final-stage development or real-world competition-level performance, the full game remains necessary despite its challenges.

Are There Alternatives Worth Exploring?

Aside from this middle-ground benchmark, researchers often work with:

- StarCraft II Mini-Games: Very simple tasks targeting specific game mechanics, good for testing basic RL algorithms but not representative of full game strategy.

- Full StarCraft II Environment: Offers the most complete challenge but demands intense computational resources, and its complexity can make agent behavior hard to analyze.

- Other RL Benchmarks: Environment collections such as OpenAI Gym (now maintained as Gymnasium), or custom RL environments tailored for accessibility and diverse challenges.

What Have We Learned About Scaling Strategy Over Compute?

The key takeaway is that scaling compute, that is, throwing more processing power at the problem, isn't always efficient or feasible. Instead, focusing on the design of the environment and the learning problem itself can lead to more meaningful advancements in reinforcement learning research.

Accessible benchmarks like this one help researchers experiment faster and with fewer resources, accelerating progress in RL without getting bogged down by infrastructure costs.

Practical Tip: Try It Yourself

To test your understanding, download the benchmark environment (check the latest version from official sources) and try training a simple reinforcement learning agent. Track how quickly it learns compared to the full game setup or mini-games. This simple experiment will help you grasp how controlling the state-action space impacts learning speed and resource requirements.
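If you want to rehearse the experiment before installing anything, the sketch below trains a tabular Q-learning agent on a toy chain environment and records which episodes reach the goal. Everything here is an assumption made for illustration: the chain environment stands in for a real game, and `train_q_learning` and its parameters are hypothetical names, not part of the benchmark's API.

```python
import random


def train_q_learning(chain_length: int, episodes: int = 300,
                     max_steps: int = 100, alpha: float = 0.5,
                     gamma: float = 0.95, epsilon: float = 0.2,
                     seed: int = 0):
    """Tabular Q-learning on a toy chain; returns (q_table, successes).

    Toy assumptions: states are 0..chain_length - 1, action 0 moves
    left (blocked at 0), action 1 moves right, and the only reward
    (1.0) is given on reaching the last state, which ends the episode.
    """
    rng = random.Random(seed)
    goal = chain_length - 1
    q = [[0.0, 0.0] for _ in range(chain_length)]   # q[state][action]
    successes = []                                  # 1 if goal reached
    for _ in range(episodes):
        state, reached = 0, 0
        for _ in range(max_steps):
            # Epsilon-greedy choice; ties are broken at random so the
            # untrained agent does not get stuck always moving left.
            if rng.random() < epsilon or q[state][0] == q[state][1]:
                action = rng.randrange(2)
            else:
                action = 0 if q[state][0] > q[state][1] else 1
            next_state = max(0, state - 1) if action == 0 else state + 1
            done = next_state == goal
            reward = 1.0 if done else 0.0
            # The terminal state contributes no future value.
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
            if done:
                reached = 1
                break
        successes.append(reached)
    return q, successes


if __name__ == "__main__":
    for length in (6, 30):
        _, successes = train_q_learning(chain_length=length)
        early, late = sum(successes[:50]), sum(successes[-50:])
        print(f"chain={length}: goals in first 50 episodes={early}, "
              f"last 50 episodes={late}")
```

Comparing the early and late success counts for the short and long chains gives a rough feel for how the size of the state space affects learning speed. With a real environment you would swap the chain for the benchmark's own reset and step calls.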
