Securing AI agents in critical business operations is no longer optional — it's a necessity. Just like a pilot runs pre-flight checks before takeoff, organizations must validate their AI systems before deployment. OpenAI's recent acquisition of Promptfoo underscores this urgency, showcasing how leading labs are racing to prove their AI can be trusted in real-world conditions.

This article reflects first-hand observations from deploying AI at scale, unpacking what works and what doesn’t when securing AI agents, particularly in high-stakes environments.

What is Promptfoo and Why Does OpenAI's Acquisition Matter?

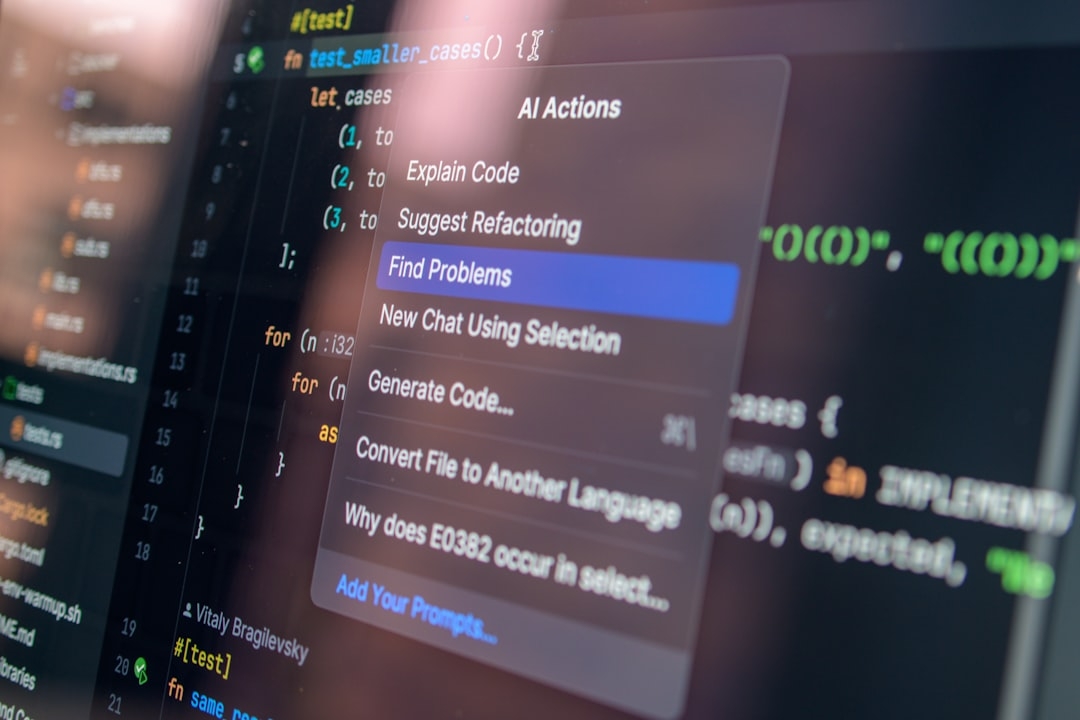

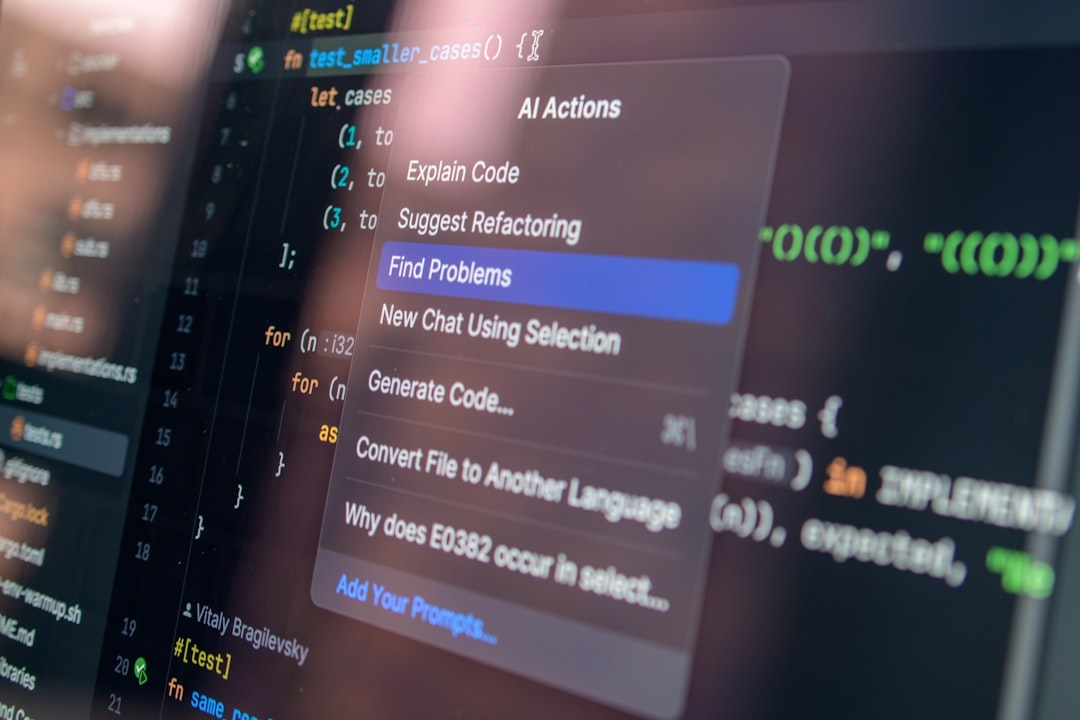

Promptfoo is a specialized tool designed to test, monitor, and secure AI agents during use. AI agents are applications that perform tasks like customer support, data analysis, or content generation autonomously. Securing them means ensuring they behave as expected, avoid harmful outputs, and operate reliably under varied conditions.

OpenAI acquiring Promptfoo signals the recognition that even frontier lab technologies need robust validation pipelines before being integrated into business workflows. No matter how advanced the AI, without rigorous testing, errors can compound, leading to costly failures.

How Does AI Agent Security Affect Business Operations?

Misbehaving AI agents can cause data leaks, compliance breaches, or misinformation spreading — especially when AI is deployed without safeguards. Securing these agents involves continuous testing against edge cases, monitoring real-time behavior, and systematically reducing risks.

This is similar to QA in software engineering but tailored to AI’s unpredictability. Typical approaches like manual reviews or rule-based scripts often fall short because AI can generate novel outputs unexpected by developers.

Challenges Companies Often Face

- Oversimplified Testing: Basic tests target ideal scenarios, missing rare but risky failures.

- Lack of Continuous Monitoring: AI drift over time is overlooked, degrading performance unnoticed.

- Heavy Manual Oversight: Human review becomes a bottleneck, especially at scale.

What Does Promptfoo Do Differently?

Promptfoo automates rigorous testing across AI agents’ lifecycles. It builds customized test suites that simulate diverse user inputs, measuring AI responses against predefined safety and correctness criteria.

By doing this, businesses gain confidence their AI agents won’t produce surprising or unsafe behaviors once in production. Its integration with OpenAI enables tighter alignment between model capabilities and operational safety needs.

When Should You Use AI Agent Testing Tools Like Promptfoo?

Implement testing tools once your AI agent moves beyond prototype into customer-facing or mission-critical applications. At this phase, failures have financial, reputational, and legal implications.

Testing becomes indispensable to: detect unsafe outputs, enforce compliance, and maintain consistent quality.

Practical Considerations: Time, Cost, and Risks

Introducing AI testing frameworks requires upfront effort in writing test cases and configuring monitoring. Expect a ramp-up of several weeks depending on complexity.

Cost wise, these tools add operational expenses but mitigate risks that can produce far larger hidden losses. Companies must weigh trade-offs:

- Setup time and engineering bandwidth

- Regular maintenance of test suites

- Potential delays deploying new AI features versus ensuring reliability

Ignoring these leads to far riskier, unmonitored AI rollouts, which may go unnoticed until damage is done.

What Finally Worked: Lessons from Production Experience

From hands-on attempts, we learned that security testing must become an intrinsic part of AI deployment culture — not a one-off checklist.

Key success factors:

- Integrate testing early and continuously during development

- Automate failure detection instead of manual spot checks

- Design tests representing realistic and adversarial inputs

- Include business stakeholders in defining what “safe” means

We also discovered that popular assumptions like “pretrained models are ready out of the box” often lead to unexpected failures. AI agents need continual validation as conditions and data evolve.

How Can You Quickly Evaluate If Your AI Agent Is Secure?

A quick framework you can apply in 15-20 minutes:

- List your AI agent’s core tasks and outputs

- Identify potential failure modes (e.g., incorrect data, harmful language, compliance issues)

- Review existing tests or monitoring for these failures

- Assess gaps: which risks lack automated checks?

- Prioritize adding targeted test cases or monitoring rolls

This simple audit helps determine immediate weaknesses and guides resource allocation for AI safety improvements.

Key Takeaways

- AI agent security is a pressing business need as these systems become mission-critical.

- OpenAI’s acquisition of Promptfoo demonstrates industry leadership embracing rigorous testing and monitoring.

- Popular simplistic testing strategies are insufficient; continuous and automated validation is essential.

- There are upfront costs and time investments, but the risk mitigation benefits justify them.

- Organizations should conduct quick evaluations regularly to stay ahead of AI agent risks.

Securing AI agents isn’t about perfect systems — it’s about managing trade-offs pragmatically. OpenAI and Promptfoo’s combined efforts are steps toward safer AI deployments in the real world.

Technical Terms

Glossary terms mentioned in this article

Comments

Be the first to comment

Be the first to comment

Your opinions are valuable to us