When you build scalable AI services like Codex and Sora, one challenge quickly becomes clear: how do you manage user access beyond simple rate limits without sacrificing performance or user experience? My team at OpenAI encountered this firsthand, and what we developed evolved into a hybrid system that combines rate limiting, usage tracking, and a credit system to provide continuous, real-time access.

This article explains what the system is and how it works, then answers common questions about scaling AI service access and the pitfalls to avoid.

What Does It Mean to Go Beyond Rate Limits?

Most API services use rate limits as a blunt instrument. They restrict how many requests a user can make within a certain time window. While this approach is straightforward, it often results in frustrating user experiences. For instance, if you're building on top of Codex or Sora and hit a rate limit, your app might freeze, or users will have to wait unnecessarily.

OpenAI’s approach combines traditional rate limiting with usage tracking and a credit-based system. Instead of only counting requests per second or minute, the system monitors how many resources each user consumes and deducts credits in proportion to that usage. Users keep access as long as they have credits available, which smooths out access peaks and avoids abrupt cutoffs.

How Does OpenAI’s Real-Time Access System Work?

The backbone of this system is a dynamic interplay between three components: rate limiting, continuous usage tracking, and a credit allocation mechanism.

Rate Limiting as a Baseline

Rate limiting still plays an important part. It sets the initial boundaries to prevent overloading the system. This baseline prevents spiky user behaviors from flooding backend resources.
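A baseline limiter of this kind is often built as a token bucket. The sketch below is a minimal illustration, not OpenAI's implementation: the class name `TokenBucket`, its parameters, and the injectable `clock` are all assumptions made for the example.

```python
import time


class TokenBucket:
    """Hypothetical baseline limiter: bursts up to `capacity`, refilled at `rate` per second."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate              # tokens added per second
        self.capacity = capacity      # maximum burst size
        self.clock = clock            # injectable so the limiter can be tested
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self, cost=1.0):
        """Return True and consume `cost` tokens if the request fits, else False."""
        now = self.clock()
        # Refill in proportion to elapsed time, never exceeding capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A bucket created with `TokenBucket(rate=5, capacity=10)` would absorb a burst of ten requests and then admit roughly five per second, which is the spike protection the baseline is meant to provide.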

Tracking Usage in Real-Time

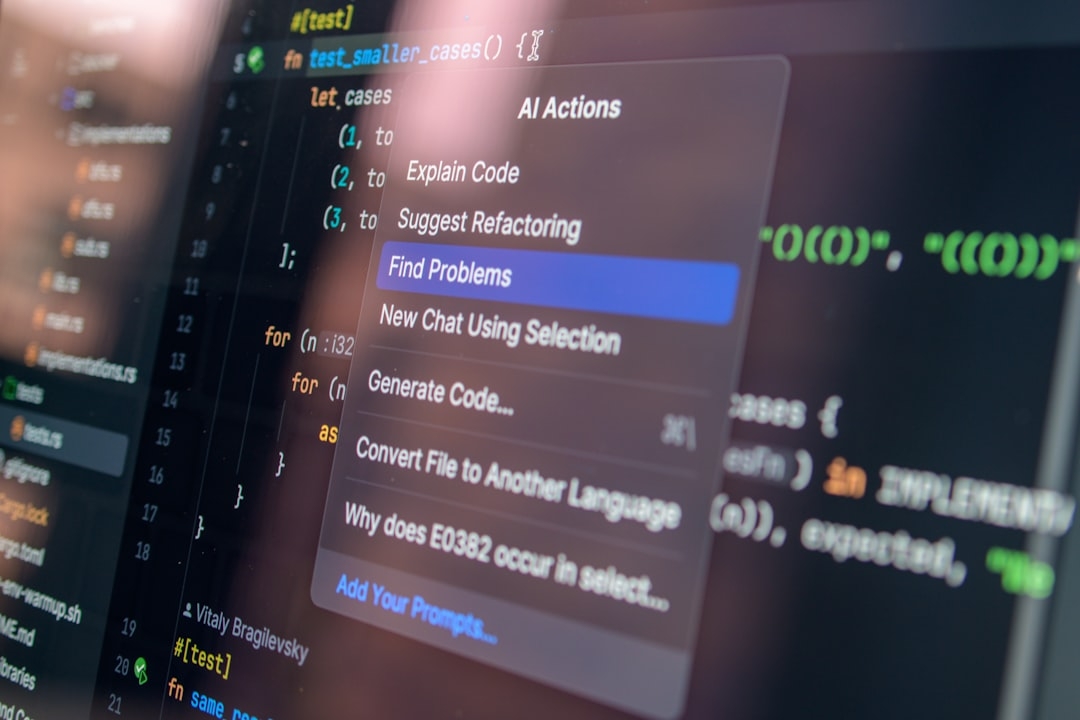

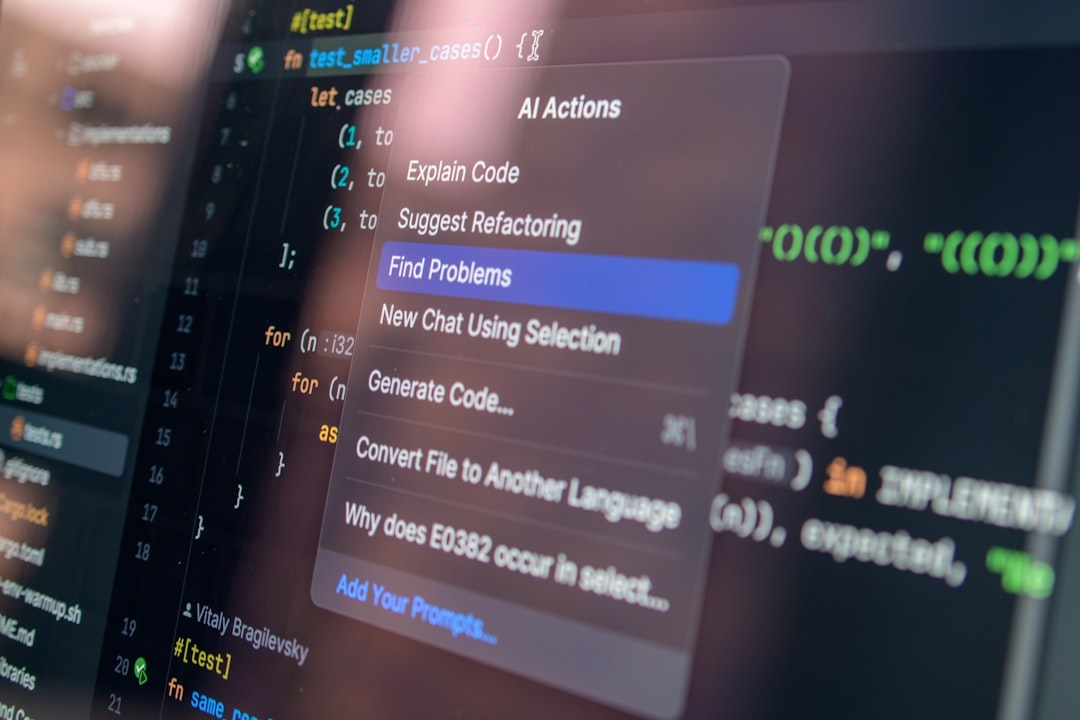

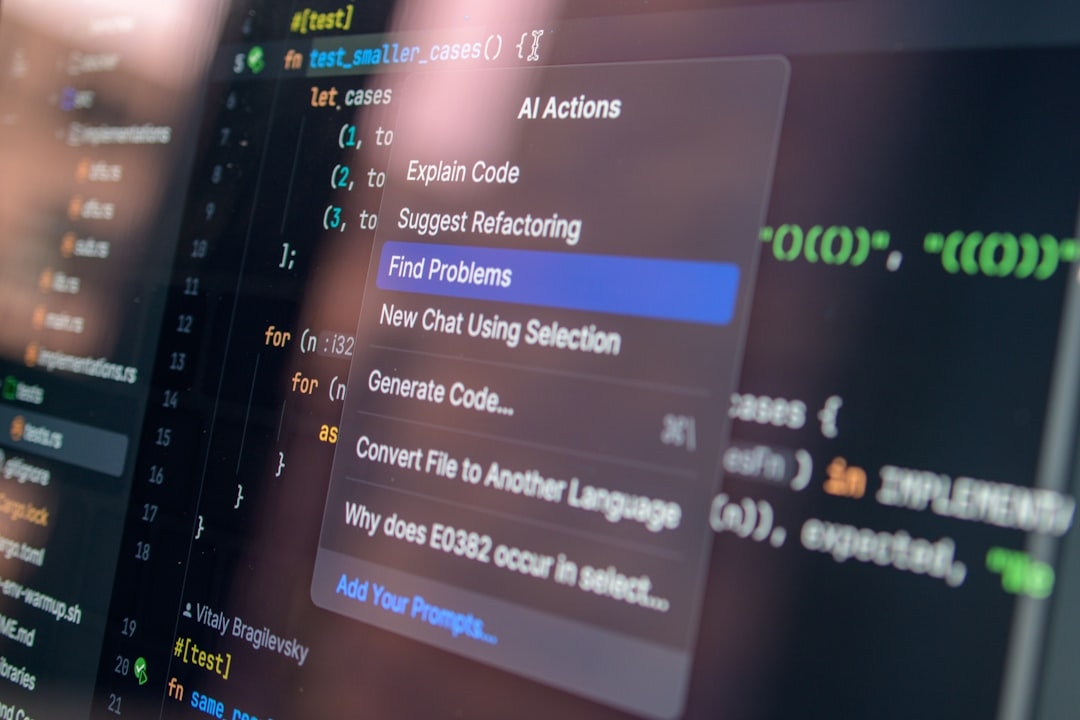

Instead of simply counting requests, OpenAI tracks the consumption of tokens or computational resources each request uses. This gives a granular view of how intensive each request is, allowing smarter management than just counting the number of API calls.

Usage tracking involves real-time monitoring systems that record resource usage per user session. This data is essential for adjusting limits and credit balances dynamically.
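To make per-user usage tracking concrete, here is a small in-memory sketch that keeps a sliding window of token consumption. The `UsageTracker` name, the 60-second window, and the choice to pass timestamps explicitly are assumptions for illustration; a production system would likely sit on a telemetry pipeline rather than a Python dictionary.

```python
from collections import defaultdict, deque


class UsageTracker:
    """Hypothetical tracker: tokens consumed per user over a sliding time window."""

    def __init__(self, window_seconds=60.0):
        self.window = window_seconds
        self.events = defaultdict(deque)   # user_id -> deque of (timestamp, tokens)
        self.totals = defaultdict(int)     # user_id -> tokens inside the window

    def record(self, user_id, tokens, now):
        """Record that `user_id` consumed `tokens` at time `now` (seconds)."""
        self.events[user_id].append((now, tokens))
        self.totals[user_id] += tokens

    def usage(self, user_id, now):
        """Return tokens consumed by `user_id` within the last `window` seconds."""
        queue = self.events[user_id]
        # Drop events that have aged out of the window.
        while queue and queue[0][0] <= now - self.window:
            _, old_tokens = queue.popleft()
            self.totals[user_id] -= old_tokens
        return self.totals[user_id]
```

Calling `record` after each request and `usage` before the next one gives the limiter a view of how intensive a user's recent activity has been, rather than just how many calls they made.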

Credit-Based Continuous Access

Credits act like a virtual currency representing user allowance. Instead of a hard stop at a fixed limit, users can continue making requests as long as they have sufficient credits. These credits refresh or reallocate based on subscription tiers or policy criteria, enabling users to sustain access without sudden interruptions.

This system functions much like a prepaid card: as long as you have balance, you can keep spending. When you run low, usage slows or temporarily pauses, but without abrupt cutoffs.
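The prepaid-card analogy maps naturally onto a small ledger. The following is a sketch under assumptions: `CreditLedger`, `grant`, `charge`, and `InsufficientCredits` are hypothetical names, and the balances live in memory rather than in a durable store.

```python
class InsufficientCredits(Exception):
    """Raised when a charge exceeds the user's remaining balance."""


class CreditLedger:
    """Hypothetical in-memory ledger of credit balances per user."""

    def __init__(self):
        self.balances = {}

    def grant(self, user_id, credits):
        """Add credits, for example on a subscription refresh."""
        self.balances[user_id] = self.balances.get(user_id, 0) + credits
        return self.balances[user_id]

    def charge(self, user_id, credits):
        """Deduct credits for a request; the balance is unchanged if it cannot cover the cost."""
        balance = self.balances.get(user_id, 0)
        if credits > balance:
            raise InsufficientCredits(f"{user_id} has {balance}, needs {credits}")
        self.balances[user_id] = balance - credits
        return self.balances[user_id]
```

Raising an exception rather than silently clamping the balance keeps the decision about what to do when credits run out (slow down, pause, or prompt for a top-up) in the calling code.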

Why Not Rely Only on One Approach?

While pure rate limiting is easy to implement, it’s inflexible for advanced use cases where user demand varies drastically. Usage tracking alone requires significant infrastructure and can be slow to react without rate limits as a safety net. A credit system alone may result in resource abuse if not paired with baseline controls.

By combining all three, OpenAI found a balance that is robust, scalable, and much more user-friendly.
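One way to picture the combination is as a layered decision: serve the request from the rate-limit allowance if it fits, fall back to credits if it does not, and reject only when both are exhausted. The ordering and the name `decide` are assumptions for this sketch; the article does not specify the exact precedence used in production.

```python
def decide(cost, allowance_left, credits_left):
    """Hypothetical layered check.

    Returns (decision, new_allowance, new_credits), where decision is one of
    "allowed_by_rate_limit", "allowed_by_credits", or "rejected".
    """
    if cost <= allowance_left:
        # The baseline allowance covers the request; credits are untouched.
        return "allowed_by_rate_limit", allowance_left - cost, credits_left
    if cost <= credits_left:
        # Allowance exhausted: spend credits instead of rejecting.
        return "allowed_by_credits", allowance_left, credits_left - cost
    # Neither layer can cover the request.
    return "rejected", allowance_left, credits_left


# A request costing 3 units with 1 unit of allowance left and 5 credits:
print(decide(3, allowance_left=1, credits_left=5))
# ('allowed_by_credits', 1, 2)
```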

What Are Some Common Misconceptions About This System?

“Isn’t rate limiting enough?”

Not really. Rate limits treat every request equally, but one Codex request may need far more compute than a simple query. Counting requests alone can therefore penalize a user who sends many lightweight requests just as heavily as one who sends a few expensive ones.
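A cost function that weights requests by size shows the difference. The weights and the `tokens_per_credit` value below are made-up numbers chosen for illustration, not OpenAI pricing, and `credit_cost` is a hypothetical name.

```python
import math


def credit_cost(input_tokens, output_tokens,
                input_weight=1.0, output_weight=3.0, tokens_per_credit=1000):
    """Hypothetical cost model: weighted token count, rounded up, minimum of 1 credit."""
    weighted = input_tokens * input_weight + output_tokens * output_weight
    return max(1, math.ceil(weighted / tokens_per_credit))


print(credit_cost(200, 50))      # small query -> 1
print(credit_cost(8000, 4000))   # large generation -> 20
```

Under a plain request counter both calls would cost the same single unit; here the larger one costs twenty times as much.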

“Does usage tracking introduce latency?”

It can, because tracking usage in real time is resource-intensive. OpenAI uses highly optimized telemetry pipelines and caching layers to minimize the added delay and keep performance smooth.

“Does the credit system encourage overuse?”

Credits are carefully managed and tied to subscription models or user tiers. This ensures users pay for what they consume and helps prevent abuse.

When Should You Use This System?

If you’re running AI or API services where users have highly variable access patterns, this layered access control approach is valuable. It’s especially useful when:

- You want to provide seamless, uninterrupted access during usage spikes

- You need fine-grained control over resource usage per user

- You want to avoid frustrating “rate limit exceeded” errors that disrupt workflows

When NOT to Use the System

If your use case involves very predictable, low-volume access, or if you serve a small number of uniform users, the added complexity may not be worth it. In simpler scenarios, traditional rate limiting often suffices.

What Can You Learn From Implementing This?

In production, this system exposed some trade-offs:

- Monitoring and usage tracking require a robust telemetry architecture, which often means integrating with scalable logging and analytics platforms

- Credit allocation policies need careful tuning based on user behavior and business goals

- Balancing responsiveness with fairness is a continual adjustment; no system is perfect

Still, the benefits were clear: significantly improved user experience, optimized system resource utilization, and greater flexibility during demand surges.

Try This Next: Simulate Hybrid Access Control

Set up a small-scale experiment with an API service you control. Implement basic rate limiting combined with a credit-based tracking system, where credits are spent per request based on complexity (e.g., request size or expected processing time). Monitor user experience under varying loads and see how your system handles bursts compared to pure rate limiting.

In 10-30 minutes, you can run load tests and observe how combining usage tracking with credits provides smoother control over access than blunt rate limits.
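Before wiring up a real service, the comparison can be tried as a toy simulation. The sketch below assumes every request costs one unit, a fixed per-tick limit, and a one-time credit balance; the function name `simulate` and the load pattern are invented for the example.

```python
def simulate(requests_per_tick, limit_per_tick, credits=0):
    """Count served and rejected requests.

    Requests within `limit_per_tick` are always served; overflow is served
    from `credits` while any remain, and rejected otherwise.
    """
    served = rejected = 0
    for n in requests_per_tick:
        within_limit = min(n, limit_per_tick)
        overflow = n - within_limit
        from_credits = min(overflow, credits)
        credits -= from_credits
        served += within_limit + from_credits
        rejected += overflow - from_credits
    return {"served": served, "rejected": rejected, "credits_left": credits}


load = [2, 2, 10, 12, 2, 2]  # a burst in the middle of quiet traffic

print(simulate(load, limit_per_tick=5))
# {'served': 18, 'rejected': 12, 'credits_left': 0}
print(simulate(load, limit_per_tick=5, credits=10))
# {'served': 28, 'rejected': 2, 'credits_left': 0}
```

With the same limit, the credit balance absorbs most of the burst, which is the smoothing effect to look for when you repeat the experiment against a real API under load.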
