How sustainable is it for the US military to keep relying on Anthropic’s Claude AI models when many defense-technology customers are backing away? The question has become urgent as the US intensifies its aerial operations targeting Iran, operations in which AI supports decision-making at critical points.

Behind the headlines lies a complex balance between cutting-edge AI capabilities and the practical constraints of defense applications, which demand absolute reliability and precision. This article unpacks the factors driving continued use by the US military despite notable client departures in the defense sector.

What Makes Claude AI Critical for Military Targeting Decisions?

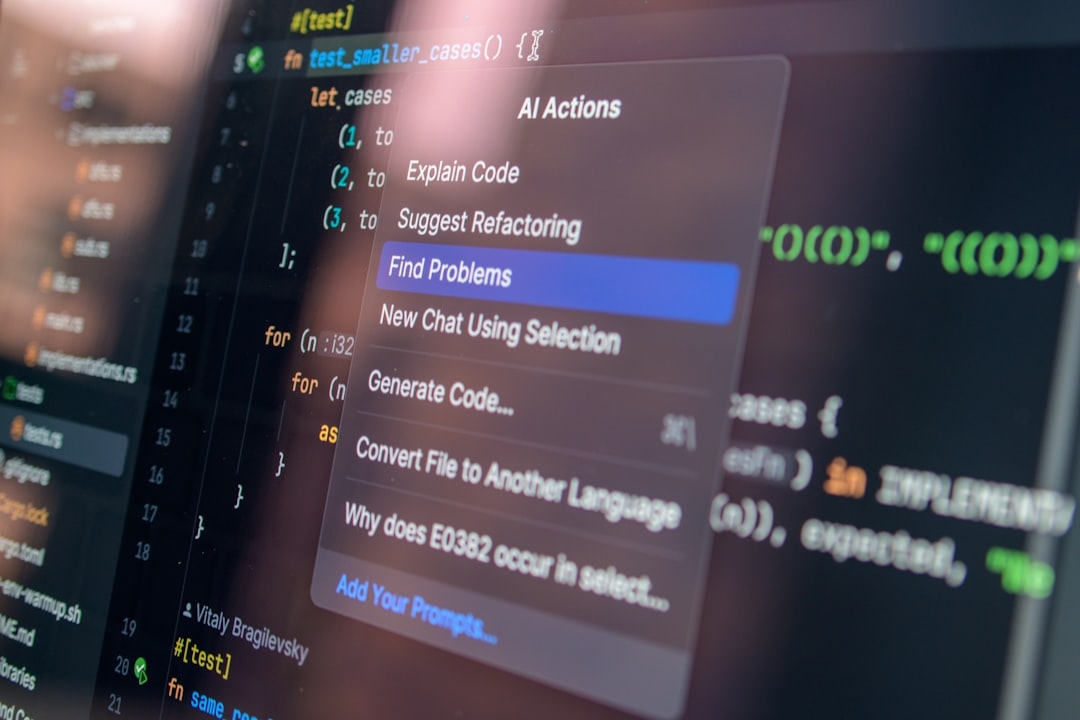

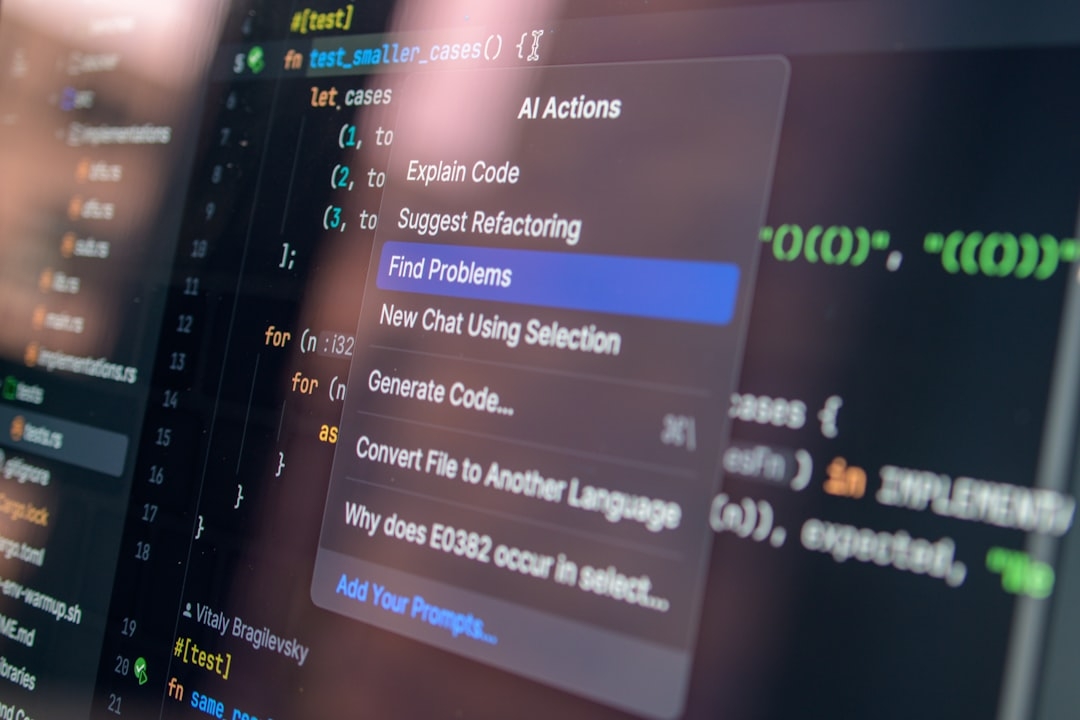

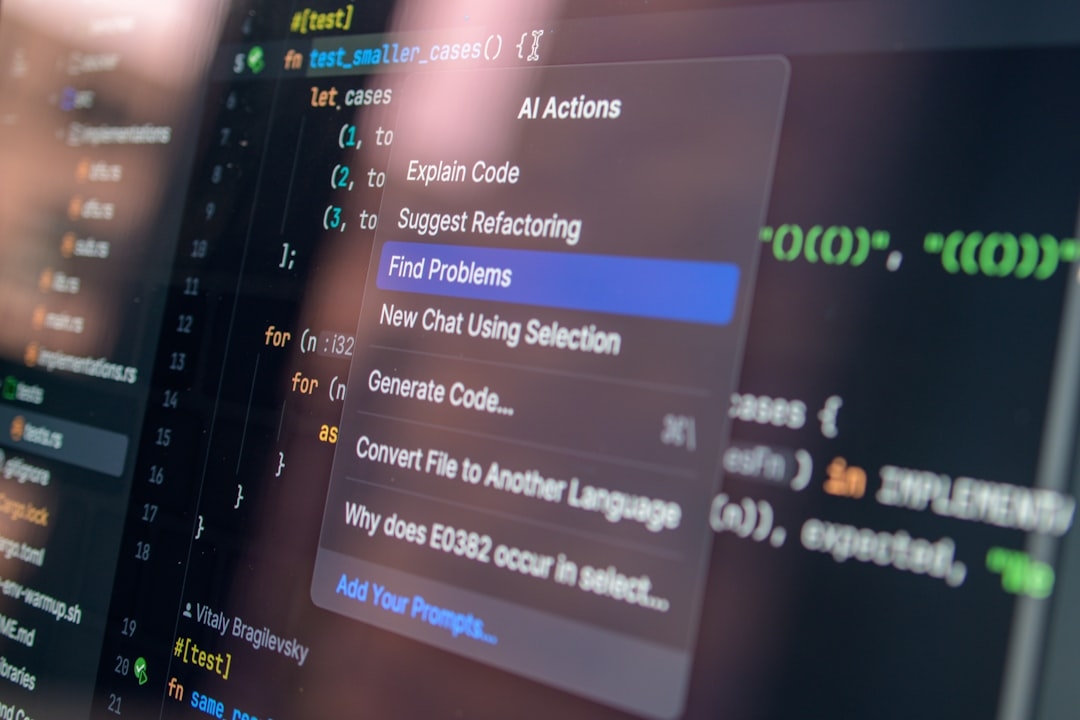

Claude, developed by Anthropic, is an advanced large language model (LLM) designed to assist with complex reasoning tasks. In military contexts, AI systems like Claude are used to sift through massive data streams and suggest potential targets based on defined criteria and threat profiles.

Military operations rely on AI models to accelerate decisions that traditionally take human analysts much longer. Claude does not automate strikes outright. It supports targeting decisions by ranking and contextualizing intelligence, which shortens the kill chain so that forces can respond quickly in a dynamic combat environment.

How Does Claude Work in These Defense Scenarios?

Anthropic’s models have billions of parameters trained on diverse datasets, which lets them produce human-like text and carry out multi-step reasoning. Claude’s training emphasizes following safety-oriented instructions and reducing harmful outputs.

In practice, Claude parses textual intelligence reports, radar feeds, and signals data, helping operators prioritize targets that meet strict engagement rules. However, this use places the model in a high-stakes setting where errors can have severe consequences.

Why Are Defense-Tech Clients Fleeing While the US Military Holds On?

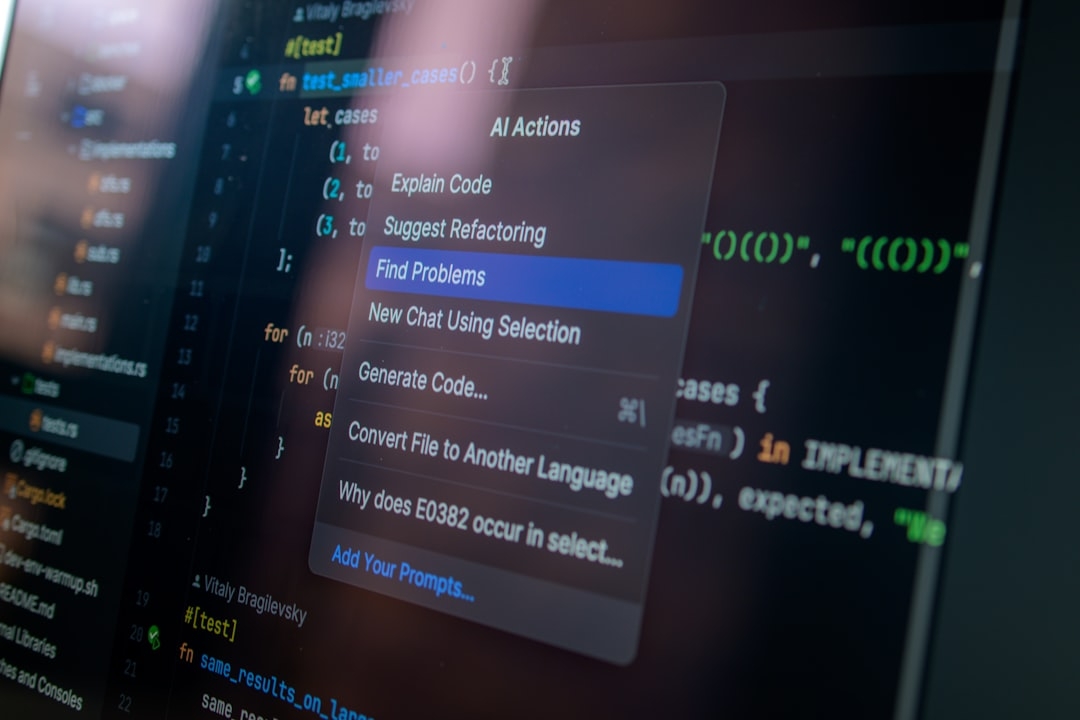

Several defense contractors and clients have distanced themselves from Anthropic’s solutions, citing operational challenges. These issues include latency, reliability under load, and predictability of output—factors critical when milliseconds and accuracy translate into mission success.

Reliability trade-offs often arise because Claude, like other LLMs, generates probabilistic outputs rather than deterministic responses. This can complicate trust in real-time military operations where second opinions or fallback measures are limited.
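One rough way to quantify this variability is to send the same prompt several times and measure how often the answers agree. The sketch below is illustrative only: `agreement_rate` and the sample strings are hypothetical, and no real model API is called. It assumes the sampled outputs have already been collected as plain strings.

```python
from collections import Counter


def agreement_rate(outputs):
    """Return (most common output, fraction of samples that match it)."""
    if not outputs:
        raise ValueError("need at least one sampled output")
    # Normalise whitespace and case so trivial formatting differences
    # are not counted as disagreement.
    normalised = [" ".join(o.lower().split()) for o in outputs]
    top, count = Counter(normalised).most_common(1)[0]
    return top, count / len(normalised)


# Hypothetical samples: the same prompt sent five times.
samples = ["Priority A", "priority a", "Priority B", "Priority A ", "Priority A"]
answer, rate = agreement_rate(samples)
print(answer, rate)  # priority a 0.8
```

A low agreement rate does not say which answer is right. It only signals that the output should not be treated as stable without further review.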

What Are the Practical Limitations in Production Environments?

Many users report that while Claude offers impressive reasoning abilities, it occasionally produces ambiguous or inconsistent answers. In civilian settings, this is manageable; in military targeting, it is a liability.

Additionally, Anthropic’s server infrastructure sometimes struggles to meet demanding uptime and latency SLAs (service-level agreements), especially during surges in battlefield data volume. These performance issues prompt clients to look for alternatives with hardened guarantees.
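Latency SLAs are usually stated as a percentile bound rather than an average, because a single spike can hide behind a healthy mean. The following is a minimal sketch of such a check using the nearest-rank percentile. The function names, the sample latencies, and the 500 ms limit are assumptions made for illustration and do not come from any published SLA.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no latency samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def meets_sla(latencies_ms, pct, limit_ms):
    """True when the chosen percentile of observed latency is within the limit."""
    return percentile(latencies_ms, pct) <= limit_ms


# Hypothetical measurements in milliseconds, including one spike.
observed = [120, 135, 110, 140, 125, 130, 900, 115, 128, 132]
print(percentile(observed, 50))      # 128
print(meets_sla(observed, 90, 500))  # True
print(meets_sla(observed, 99, 500))  # False
```

The same data passes at the 90th percentile and fails at the 99th, which is why the percentile named in the SLA matters as much as the limit itself.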

How Has the US Military Adapted to These Issues?

Despite client attrition, the US military continues to deploy Claude because it combines a uniquely strong safety framework with advanced language capabilities. Military teams add multiple redundancy layers and human-in-the-loop processes to mitigate AI uncertainty.

Integrating Claude requires extensive operational validation, ongoing tuning, and contingency planning to handle AI unpredictability without compromising mission goals.
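The general pattern of redundancy plus human-in-the-loop review can be sketched in a few lines. This is a generic illustration, not a description of any fielded system: `Review`, `review_with_human`, and the `cautious` policy are hypothetical names, and the redundant runs are assumed to arrive as plain strings.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass
class Review:
    suggestion: Optional[str]  # text shown to the operator, if any
    flagged: bool              # True when the redundant runs disagreed
    approved: bool             # True only after explicit human sign-off


def review_with_human(
    runs: Sequence[str],
    operator_approves: Callable[[str, bool], bool],
) -> Review:
    """Cross-check redundant model runs, then defer to a human reviewer."""
    if not runs:
        # No model output at all: nothing to approve, fall back to manual work.
        return Review(suggestion=None, flagged=True, approved=False)
    flagged = len(set(runs)) > 1  # any disagreement between runs
    suggestion = runs[0]
    # The model output is advisory; only the operator's decision counts.
    approved = operator_approves(suggestion, flagged)
    return Review(suggestion, flagged, approved)


# Hypothetical operator policy for the demo: reject anything flagged.
def cautious(suggestion, flagged):
    return not flagged


print(review_with_human(["report X is high priority"] * 3, cautious).approved)  # True
print(review_with_human(["high", "low", "high"], cautious).approved)            # False
```

The design choice worth noting is that nothing in the function sets `approved` on its own. Approval comes only from the callback, which stands in for the human reviewer.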

What Are the Real-World Benchmarks of Claude's Performance?

- Speed: Claude handles rapid data throughput, but occasional lag exists under peak loads.

- Decision Accuracy: High-quality recommendations in structured scenarios, though edge cases reveal vulnerabilities.

- Safety: Strong guardrails reduce harmful suggestions, essential in avoiding collateral damage.

Nevertheless, these strengths are offset by the reality that no AI is infallible, especially under evolving battlefield conditions.

What Are the Key Constraints When Considering Claude for Defense AI?

Understanding the trade-offs involves recognizing:

- Probabilistic Nature: AI outputs suggest likelihoods, not certainties, requiring human judgment.

- Infrastructure Dependence: Performance tied to network and data center availability.

- Safety and Compliance: Continuous alignment with rules of engagement and international law.

- Interpretability: AI decisions need to be explainable for accountability.

These factors create a delicate environment where AI is a force multiplier but not a standalone solution.

When Should Defense Teams Consider Alternative Models?

Speed, reliability, and explainability benchmarks must be carefully evaluated. If Claude’s current iteration does not meet operational SLAs or produces inconsistent outputs, teams should explore:

- Hybrid systems combining symbolic AI and machine learning

- Custom models tailored for specific defense domains

- Enhanced human oversight protocols

This caution stems from lessons learned when deploying AI solutions prematurely in mission-critical contexts.

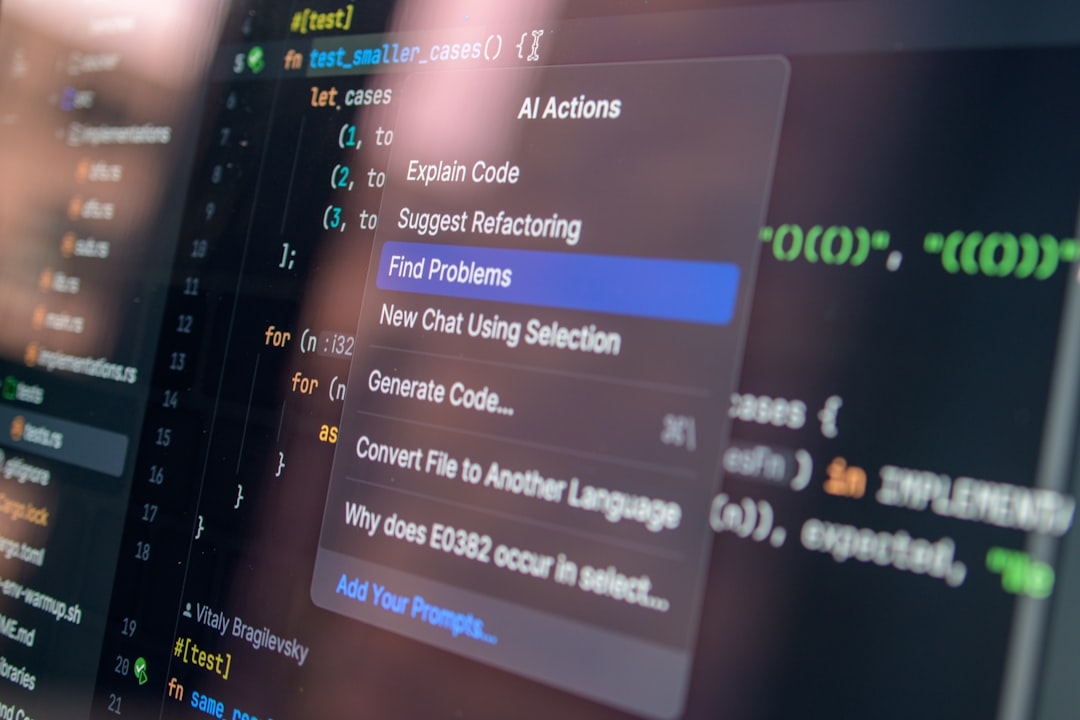

How Can Military Operators Quickly Evaluate Claude or Similar AI Models for Their Use Case?

Teams can apply a structured evaluation framework for a first feasibility assessment in 10 to 20 minutes:

- Define Requirements: List latency, accuracy, safety, and interpretability thresholds specific to your mission.

- Test in Simulated Environments: Run typical targeting workflows with representative data.

- Analyze Failure Modes: Identify output inconsistencies and latency spikes.

- Assess Infrastructure: Confirm performance under anticipated load conditions.

- Review Human-AI Interaction: Ensure operators can override or audit AI decisions effectively.

This approach emphasizes a pragmatic, data-driven decision rather than relying on technology hype.
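The first three steps of the checklist above (define requirements, run trials, analyze failures) can be expressed as a small harness. This is a sketch under stated assumptions: the `Requirements` fields, the threshold values, and the trial records are all hypothetical, and each trial is assumed to have been scored already for correctness and consistency.

```python
import math
from dataclasses import dataclass


@dataclass
class Requirements:
    max_p95_latency_ms: float
    min_accuracy: float
    min_consistency: float


def evaluate(results, req):
    """Return a list of failed requirements; an empty list means all passed.

    results: list of dicts with 'latency_ms', 'correct', 'consistent' keys.
    """
    if not results:
        raise ValueError("no trial results")
    n = len(results)
    latencies = sorted(r["latency_ms"] for r in results)
    p95 = latencies[math.ceil(95 * n / 100) - 1]  # nearest-rank percentile
    accuracy = sum(bool(r["correct"]) for r in results) / n
    consistency = sum(bool(r["consistent"]) for r in results) / n

    failures = []
    if p95 > req.max_p95_latency_ms:
        failures.append(f"p95 latency {p95} ms exceeds {req.max_p95_latency_ms} ms")
    if accuracy < req.min_accuracy:
        failures.append(f"accuracy {accuracy:.2f} below {req.min_accuracy:.2f}")
    if consistency < req.min_consistency:
        failures.append(f"consistency {consistency:.2f} below {req.min_consistency:.2f}")
    return failures


# Hypothetical trial records from a simulated run.
trials = [
    {"latency_ms": 200, "correct": True, "consistent": True},
    {"latency_ms": 250, "correct": True, "consistent": True},
    {"latency_ms": 1200, "correct": False, "consistent": True},
    {"latency_ms": 220, "correct": True, "consistent": False},
]
for problem in evaluate(trials, Requirements(500, 0.9, 0.9)):
    print(problem)
```

Writing the thresholds down before running the trials keeps the decision tied to the stated requirements rather than to whatever the results happen to look like.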

Summary and Outlook

The US military’s continued use of Anthropic’s Claude demonstrates a cautious but firm commitment to leveraging advanced AI despite evident challenges. Its blend of reasoning power and safety features remains unmatched in some niches.

However, the flight of other defense clients reveals the operational and technical hurdles that any AI deployment in defense must overcome. The key lies in balancing AI assistance with robust human oversight and infrastructure resilience.

Moving forward, evaluating AI models like Claude requires rigorous, real-world testing and clearly defined criteria. Only then can teams discern whether the benefits outweigh the risks in critical defense applications.

By applying a concise evaluation framework, military operators and defense contractors can rapidly decide whether Claude or alternative solutions fit their demanding operational contexts.
