The rise of large language models has brought immense capabilities but also complexity that challenges even seasoned AI practitioners. Understanding how these models make decisions is crucial for the AI safety community, researchers, and developers who want to ensure reliability and reduce risks.

With the release of Gemma Scope 2, open interpretability tools have been extended across the entire Gemma 3 family. This article explains what Gemma Scope 2 is, how it works in practice, which common myths to discard, and when these tools are the right choice.

What Is Gemma Scope 2 and Why Does It Matter?

Gemma Scope 2 is a toolkit designed to provide detailed insights into language model behavior. It offers open, transparent interpretability, which means you can explore how language models like those in the Gemma 3 family produce outputs, step-by-step, rather than relying on black-box assumptions.

Interpretability tools help you understand the internal dynamics of models that work with complex language patterns. For AI safety, this matters because it lets you identify unexpected behaviors or failure modes, which is critical when deploying AI systems in real-world scenarios.

How Does Gemma Scope 2 Actually Work?

At its core, Gemma Scope 2 extends the ability to inspect model internals such as attention mechanisms, token activations, and representations across any model within the Gemma 3 family. These are transformer-based language models: the tokens of the input pass through a stack of layers, each combining attention with feed-forward computations, and within a layer all token positions are processed together rather than one at a time.

The toolkit lets you visualize and analyze:

- Attention patterns: How different words or tokens in the input influence each other during processing.

- Activation traces: The internal signals fired by neurons in the model as it generates output.

- Layer-wise behaviors: How different layers contribute uniquely to the final prediction.

By breaking down the complex network into interpretable components, you can detect unusual model behaviors or biases.
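To make "activation traces" and "layer-wise behaviors" concrete, here is a minimal sketch that records the intermediate values of a toy two-layer network. It is not the Gemma Scope 2 API and does not touch a real Gemma 3 model; the function names (`run_with_trace`, `linear`, `relu`) and the weights are made up for illustration only.

```python
def relu(xs):
    """Zero out negative values; a unit is 'active' when its output is > 0."""
    return [max(0.0, x) for x in xs]

def linear(weights, xs):
    """Multiply a weight matrix (a list of rows) by an input vector."""
    return [sum(w * x for w, x in zip(row, xs)) for row in weights]

def run_with_trace(layers, xs):
    """Run xs through each layer and keep every intermediate activation."""
    trace = []
    for name, weights in layers:
        xs = relu(linear(weights, xs))
        trace.append((name, xs))
    return xs, trace

# Hypothetical toy network: two layers with hand-picked weights.
toy_layers = [
    ("layer_0", [[1.0, -1.0], [0.5, 0.5]]),
    ("layer_1", [[1.0, 1.0], [-1.0, 1.0]]),
]
output, trace = run_with_trace(toy_layers, [2.0, 1.0])
for name, activation in trace:
    active = sum(1 for a in activation if a > 0)
    print(f"{name}: {activation} ({active} of {len(activation)} units active)")
```

The idea carries over to real models: instead of only looking at the final output, you keep what each layer produced and compare the layers against one another.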

Technical term explained: Attention in transformers is a mechanism that allows the model to weigh the importance of different words in context. Think of it as the model deciding, "Which parts of the sentence should I focus on to understand the meaning best?"
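The "which parts should I focus on" idea can be written down in a few lines. The sketch below computes standard scaled dot-product attention weights with a causal mask, using only the Python standard library. The three token vectors are hypothetical and are not taken from any Gemma 3 model; real models use learned query and key projections with many more dimensions and multiple heads.

```python
import math

def softmax(scores):
    """Turn a list of raw scores into weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention_weights(queries, keys, causal=True):
    """Scaled dot-product attention weights, one row per query token.

    Row i says how strongly token i attends to each token j.
    With causal=True, token i can only attend to positions j <= i,
    as in decoder-only language models.
    """
    d = len(keys[0])
    rows = []
    for i, q in enumerate(queries):
        visible = keys[: i + 1] if causal else keys
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in visible]
        weights = softmax(scores)
        # Masked (future) positions get zero weight.
        rows.append(weights + [0.0] * (len(keys) - len(weights)))
    return rows

# Hypothetical 2-dimensional vectors for three tokens (illustrative only).
tokens = ["the", "cat", "sat"]
vectors = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
weights = attention_weights(vectors, vectors)
for tok, row in zip(tokens, weights):
    print(tok, [round(w, 3) for w in row])
```

Each printed row is one line of an attention map: the weights are non-negative, sum to 1, and are zero for tokens that come later in the sentence.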

What Are Common Misconceptions About Interpretability Tools Like Gemma Scope 2?

Many believe that interpretability tools provide clear, definitive answers about why a model made a particular decision. This is rarely the case. Gemma Scope 2, like all interpretability frameworks, offers hypotheses and insights rather than absolute truths. Interpreting neural networks remains an evolving field with inherent ambiguities.

Another misconception is that using such tools guarantees safety or model correctness. While they help identify risks and behaviors, they do not replace thorough testing and validation.

Additionally, some expect interpretability tools to be plug-and-play regardless of context. In reality, understanding model behavior with these tools requires thoughtful analysis and domain knowledge.

When Should You Use Gemma Scope 2?

If you're working within the Gemma 3 model family, Gemma Scope 2 is useful for:

- Deeply troubleshooting unexpected model outputs.

- Investigating model bias or failure modes.

- Researching novel model architectures or behaviors.

- Building AI safety protocols that depend on clear interpretability.

However, knowing when not to use Gemma Scope 2 is equally important:

- If you need quick, high-level summaries rather than detailed internals, simpler analytics tools may be better suited.

- When deploying lightweight models that lack complex internals or attention mechanisms for analysis.

- If your AI safety research requires statistical or user-centric evaluations rather than neuron- or layer-level interpretability.

How Can You Start Exploring Gemma Scope 2 Yourself?

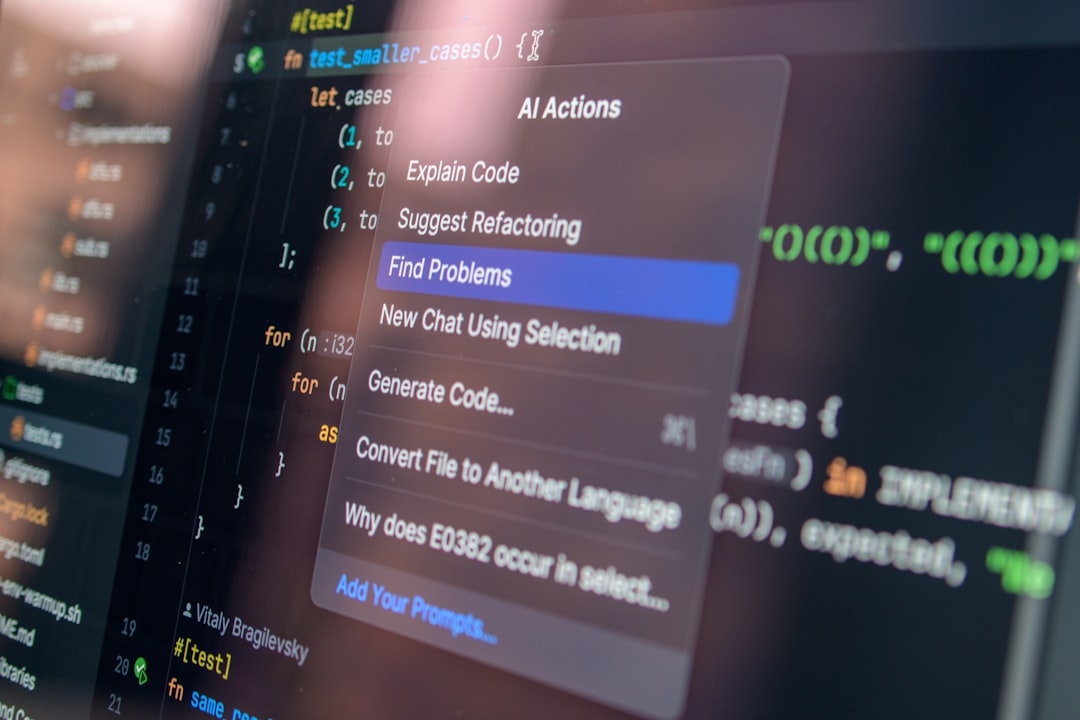

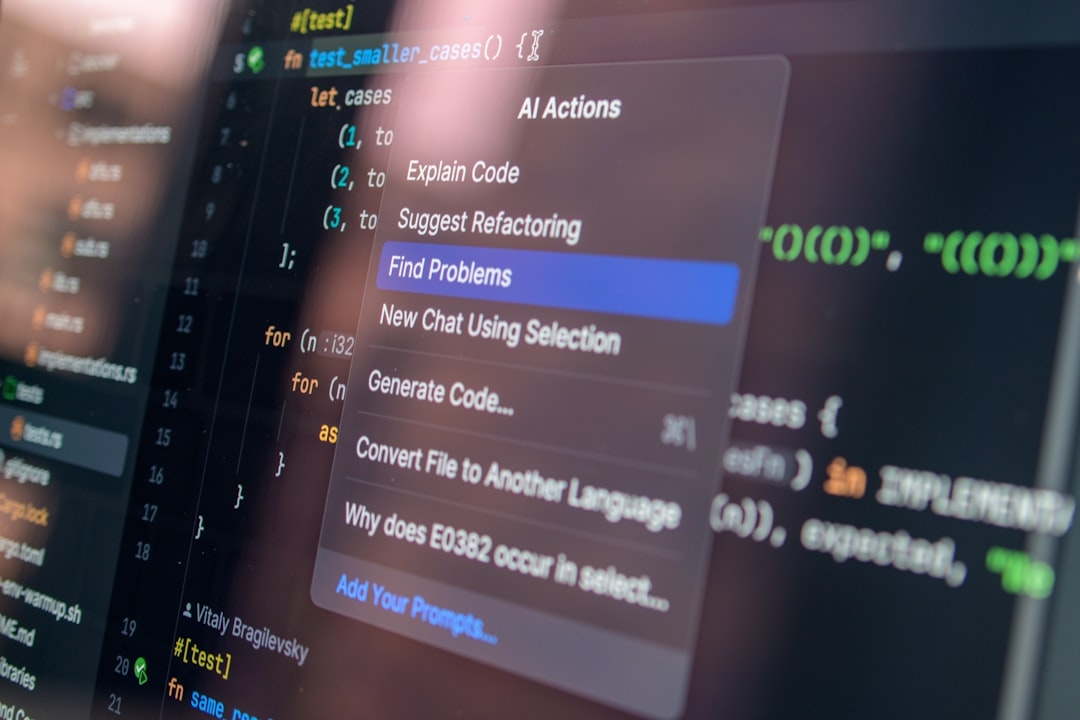

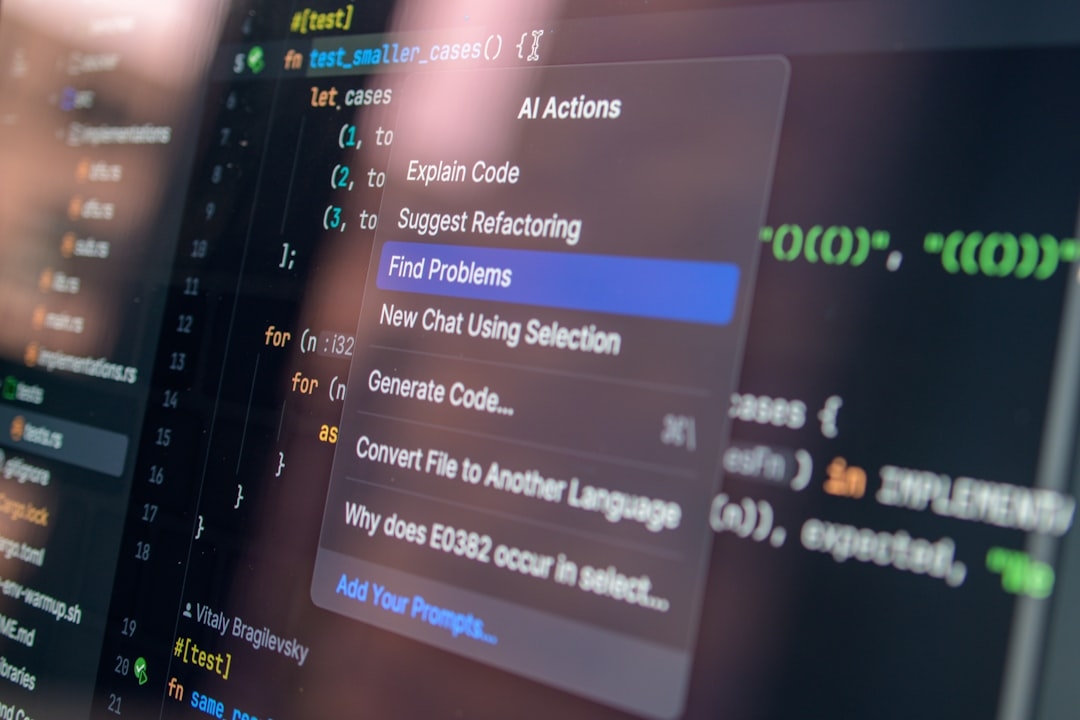

Exploring Gemma Scope 2 requires some setup, typically using Python and Jupyter notebooks or a similar environment. Once you have access, you can begin by loading a model from the Gemma 3 family and running sample inputs through the interpretability interface.

Start by inspecting attention maps for simple sentences to see which tokens influence each other. Then gradually examine activation patterns in different layers to see how the model transforms the input step by step.
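When you do not have a plotting setup at hand, a plain-text summary of an attention map is often enough for a first look. The sketch below assumes you already have an attention matrix for one layer and head as a list of rows; how you obtain it depends on your tooling and is not shown here. The function name `top_attended` and the example matrix are hypothetical.

```python
def top_attended(tokens, attn, k=2):
    """For each token, list the k earlier-or-same tokens it attends to most.

    Assumes a causal attention matrix: row i only has meaningful weights
    for positions j <= i, so later positions are ignored.
    """
    summary = []
    for i, row in enumerate(attn):
        ranked = sorted(range(i + 1), key=lambda j: row[j], reverse=True)
        summary.append((tokens[i], [(tokens[j], row[j]) for j in ranked[:k]]))
    return summary

# Hypothetical attention matrix for one head (rows sum to 1).
tokens = ["The", "cat", "sat", "down"]
attn = [
    [1.0, 0.0, 0.0, 0.0],
    [0.7, 0.3, 0.0, 0.0],
    [0.1, 0.6, 0.3, 0.0],
    [0.05, 0.3, 0.5, 0.15],
]
for token, top in top_attended(tokens, attn):
    print(token, "->", top)
```

Reading the output row by row gives the same information as a heatmap, just in a form that is easy to log or compare between runs.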

This practical exploration shows that model interpretability is nuanced. Often, a relationship you expected to be straightforward turns out to be more complex, which is exactly where such tools prove their value.

What Trade-Offs Should You Keep in Mind?

Interpretability tools like Gemma Scope 2 come with trade-offs. They provide deep insights but require expertise and time to analyze results correctly, and misinterpretation is a real risk. Additionally, analyzing very large models can demand significant computational resources.
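A back-of-the-envelope calculation shows why resources add up quickly. Keeping every attention map for one input means storing one seq_len × seq_len matrix per head per layer. The configuration below (24 layers, 8 heads, 2048 tokens, 32-bit floats) is a hypothetical example chosen for round numbers, not the published configuration of any Gemma 3 model.

```python
def attention_map_bytes(num_layers, num_heads, seq_len, bytes_per_value=4):
    """Memory needed to store all attention maps for a single input."""
    return num_layers * num_heads * seq_len * seq_len * bytes_per_value

# Hypothetical configuration, for illustration only.
total = attention_map_bytes(num_layers=24, num_heads=8, seq_len=2048)
print(f"{total / 2**30:.1f} GiB")  # prints "3.0 GiB"
```

Because the cost grows with the square of the sequence length, halving the input length cuts the storage to a quarter, which is one reason short test sentences are a sensible starting point.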

The open nature of Gemma Scope 2 promotes transparency, but findings still need to be handled with caution. Interpretability is one tool within a larger safety and validation ecosystem, not a substitute for it.

Concrete Next Step: A Hands-On Experiment to Understand Gemma Scope 2

Take 20-30 minutes today to explore how attention patterns work in a Gemma 3 model instance:

- Run a short sentence (around 10 tokens) through the Gemma Scope 2 interface.

- Visualize the attention map for each layer.

- Note which tokens influence others and consider whether these links make sense semantically.

- Try changing one word and observe how attention patterns shift.

This quick experiment will reveal the dynamic nature of attention and give you concrete experience with interpretability concepts, helping you better understand AI model behaviors.
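To put a number on "how attention patterns shift" in the last step, you can compare the two attention maps row by row. The sketch below uses total variation distance, which is one reasonable choice among several. It assumes both sentences tokenize to the same number of tokens, and the two matrices are hypothetical values rather than real model output.

```python
def row_shift(before, after):
    """Total variation distance between two attention rows.

    0.0 means the token attends identically in both sentences;
    1.0 means the two attention distributions do not overlap at all.
    """
    return 0.5 * sum(abs(a - b) for a, b in zip(before, after))

def attention_shift(attn_before, attn_after):
    """Per-token shift between two attention maps of the same shape."""
    return [row_shift(b, a) for b, a in zip(attn_before, attn_after)]

# Hypothetical attention maps before and after swapping one word.
attn_before = [
    [1.0, 0.0, 0.0],
    [0.5, 0.5, 0.0],
    [0.25, 0.25, 0.5],
]
attn_after = [
    [1.0, 0.0, 0.0],
    [0.25, 0.75, 0.0],
    [0.125, 0.625, 0.25],
]
shifts = attention_shift(attn_before, attn_after)
for position, shift in enumerate(shifts):
    print(f"token {position}: shift = {shift}")
```

Tokens with a large shift are the ones whose attention was most affected by the word change, which gives you a short list of positions worth inspecting more closely.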
