In recent developments surrounding AI technology and intellectual property rights, David Greene, a former longtime host of NPR's "Morning Edition," has taken legal action against Google. Greene alleges that the male podcast-style voice in Google's AI-powered NotebookLM tool is based on his own voice and was used without his authorization.

This case is significant amid growing concerns about how large tech companies use personal data and likenesses to train and deploy AI voice technologies. The lawsuit highlights the ongoing tension between advancing AI tools and protecting individuals' rights over their unique voices.

What Is NotebookLM and Why Does Its Voice Matter?

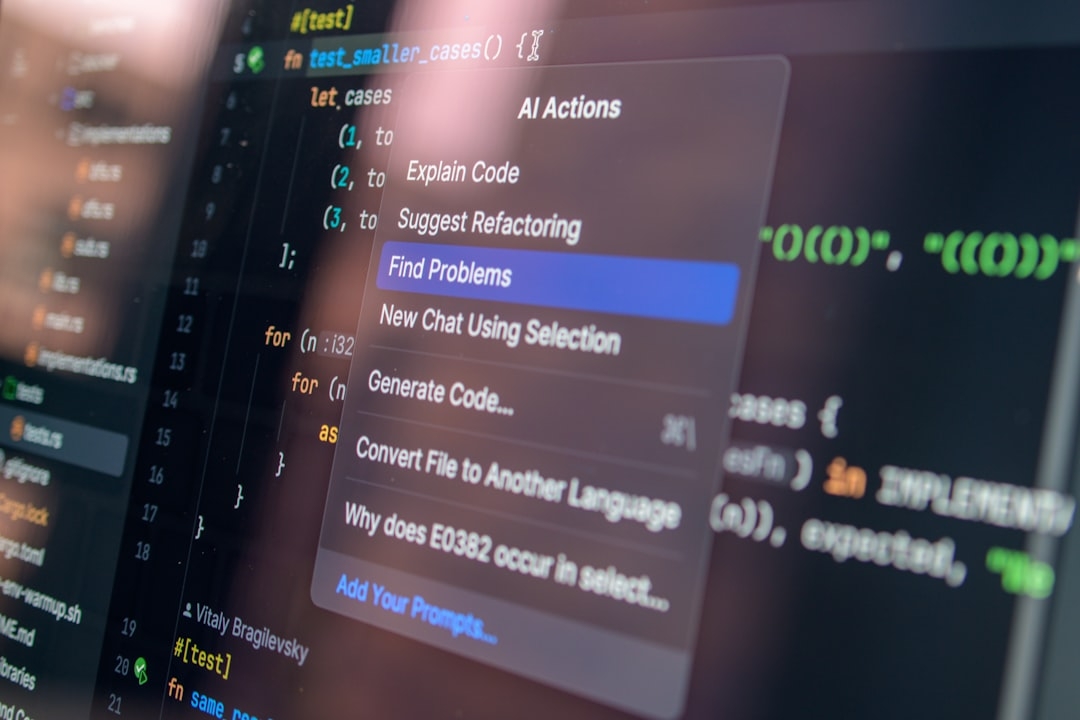

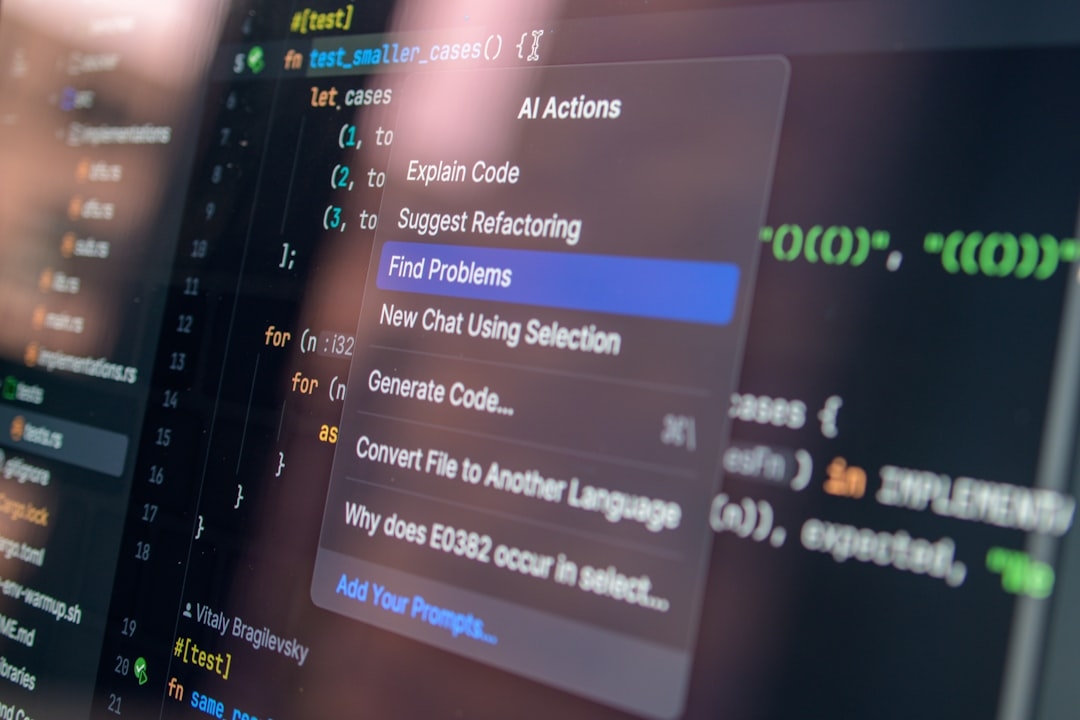

NotebookLM is an AI-powered tool developed by Google that helps users summarize and query their notes in natural language. One of its features generates spoken audio using synthesized voices that mimic human speech patterns.

The voice in question is a male podcast-style voice used for narration within NotebookLM. Its engaging, clear tone is, according to Greene, a hallmark of his recognizable NPR hosting style. Voice synthesis technology relies on large audio datasets to generate such natural-sounding voices.

How Does Voice Synthesis Work and Why Is It Controversial?

Voice synthesis involves training AI models on recorded audio samples to replicate the tone, pitch, rhythm, and intonation of a person’s voice. These models require substantial voice data, often scraped or licensed, to create realistic voice emulations. As AI voice tools become commercially viable, questions arise about consent and compensation.
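To make one of these vocal properties concrete, the sketch below estimates pitch (fundamental frequency) from raw audio samples using autocorrelation, a classical signal-processing technique. It is only an illustration of what a measurable voice feature looks like: it is not how NotebookLM or any modern neural voice model works, and the function name `estimate_pitch_hz`, the frequency bounds, and the synthetic 125 Hz test tone are all assumptions chosen for the example.

```python
import math


def estimate_pitch_hz(samples, sample_rate, fmin=60.0, fmax=400.0):
    """Estimate fundamental frequency by finding the autocorrelation peak.

    Hypothetical helper for illustration only. The search is limited to
    lags that correspond to frequencies between fmin and fmax.
    """
    min_lag = int(sample_rate / fmax)  # shortest period considered
    max_lag = int(sample_rate / fmin)  # longest period considered
    best_lag, best_score = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        # Correlate the signal with a copy of itself shifted by `lag` samples.
        score = sum(
            samples[i] * samples[i + lag] for i in range(len(samples) - lag)
        )
        if score > best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


# Synthetic stand-in for a voiced sound: a pure 125 Hz tone, 0.25 s at 8 kHz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 125.0 * n / sample_rate) for n in range(2000)]
pitch = estimate_pitch_hz(tone, sample_rate)
print(f"Estimated pitch: {pitch:.1f} Hz")
```

Real speech is far messier than a pure tone, and a voice model captures many more properties than pitch alone, but the example shows why recordings of a specific person carry measurable, reproducible characteristics.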

The legal status of intellectual property rights in synthesized voices remains murky. The controversy centers on whether companies can use recordings of a person's voice to build AI systems without explicit permission. This lawsuit is among the first high-profile cases to bring these issues under judicial scrutiny.

What Is David Greene’s Claim Against Google?

According to the lawsuit, Google used recordings of Greene’s voice without authorization to create the male podcast voice in NotebookLM. Greene argues that Google’s AI voice is a clear imitation of his unique vocal characteristics, which constitute his personal intellectual property.

The implications of this claim extend beyond a single voice. If Greene prevails, the case could force AI companies to adopt more stringent permission or licensing processes when sourcing voice data. It also points to broader concerns about how AI tools might appropriate human characteristics.

Why Has This Case Sparked Debate in the AI Community?

This lawsuit prompts crucial questions:

- Can voice or likeness be owned legally in AI-generated content?

- What level of consent is necessary for training AI on personal media?

- How should tech firms balance AI innovation with respecting personal rights?

Voice actors, entertainers, and content creators are watching closely. They recognize the potential of AI to replace or mimic their performances without sufficient control or benefit.

How Does This Affect Users and Developers of AI Tools Like NotebookLM?

Users might face ethical dilemmas when employing AI voices, especially if those voices resemble real individuals. Developers need to consider:

- Obtaining proper licenses for voice data

- Informing users about voice source origins

- Implementing safeguards against unauthorized voice cloning

Balancing convenience and ethical use becomes a real challenge when deploying voice AI in consumer products.
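The developer considerations above can be sketched as a simple gate that runs before any recording enters a training set. This is a minimal illustration under stated assumptions, not a description of Google's or any vendor's pipeline: the `VoiceSample` record, its fields, and the speaker and license identifiers are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class VoiceSample:
    """Hypothetical provenance record attached to one voice recording."""
    speaker: str
    source: str                      # where the recording came from
    license_id: Optional[str]        # None means no license on file
    consent_expires: Optional[date]  # None means no documented consent


def is_cleared(sample: VoiceSample, today: date) -> bool:
    """A sample is usable only with a license and unexpired consent."""
    return (
        sample.license_id is not None
        and sample.consent_expires is not None
        and sample.consent_expires >= today
    )


def split_by_clearance(
    samples: List[VoiceSample], today: date
) -> Tuple[List[VoiceSample], List[VoiceSample]]:
    """Separate samples that may be used from those that must be held back."""
    cleared = [s for s in samples if is_cleared(s, today)]
    blocked = [s for s in samples if not is_cleared(s, today)]
    return cleared, blocked


# Illustrative data only; none of these records refer to real people.
samples = [
    VoiceSample("speaker_a", "studio session", "LIC-001", date(2030, 1, 1)),
    VoiceSample("speaker_b", "scraped broadcast", None, None),
    VoiceSample("speaker_c", "studio session", "LIC-002", date(2020, 1, 1)),
]
cleared, blocked = split_by_clearance(samples, today=date(2025, 6, 1))
print("cleared:", [s.speaker for s in cleared])
print("blocked:", [s.speaker for s in blocked])
```

Keeping the `source` field on every record also supports the second consideration: a product can report where each voice came from instead of reconstructing that history after a dispute arises.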

What Are The Limitations and Challenges of Legally Defining Voice Ownership?

Legal frameworks currently lack clear definitions for protecting voices from unauthorized AI replication. Voices differ from traditional recordings because AI can generate new, synthetic utterances that did not occur in original recordings.

The courts need to clarify:

- Whether voice patterns can be considered intellectual property

- Whether AI-generated voices infringe on personality rights

- How damages might be measured in such cases

Without this clarity, AI voice technology remains in a legal grey zone that leaves both rights holders and AI developers uncertain.

What Can Companies Learn from This Situation?

Companies developing AI tools must proceed cautiously. Overlooking consent can result in costly lawsuits and loss of trust. Proactively addressing voice rights through clear policies and licensing agreements is a crucial step forward.

Meanwhile, the public and legal systems are still catching up to the speed of AI innovation. Cases like Greene’s ensure these issues receive formal attention.

How Should Individuals Evaluate AI Voice Tools in Their Work or Business?

Before adopting AI-generated voice services, consider:

- Where the voice data originates and whether it is properly licensed

- Potential legal exposure for unauthorized voice use

- The ethical impact of replicating a voice without consent

- Whether the tool offers transparency about its voice generation process

Evaluating these criteria can help minimize risks and maintain integrity.

Key Takeaways

The David Greene lawsuit spotlights a critical issue at the crossroads of technology and personal rights. AI voice synthesis offers exciting applications but requires thoughtful handling of ownership and consent. Companies must be more transparent and obtain explicit permission when using identifiable vocal characteristics from real people.

For users, verifying the source and legality of AI voices is essential. Without clear policies, tools that rely on synthetic voices risk infringing on personal rights and damaging reputations.
