Accurately measuring coding capability is critical when evaluating AI models. The SWE-bench Verified benchmark has been widely used to evaluate frontier coding models and track their progress. However, recent analyses and our own firsthand experience have revealed significant flaws in the benchmark.

These issues undermine its effectiveness and raise the question: How can we trust SWE-bench Verified scores when the benchmark itself may be contaminated or misleading?

What Is SWE-bench Verified and Why Does It Matter?

SWE-bench Verified is a human-validated subset of SWE-bench, a benchmark that measures how well AI models resolve real software engineering issues. It comprises 500 tasks drawn from GitHub issues in open-source Python repositories and tests a model's ability to understand, write, and debug code at the frontier of software development capabilities.

Benchmarks like SWE-bench Verified influence decisions on model development, hiring, and research priorities, making their accuracy vital. If the benchmark inaccurately reflects the true skill level, it could promote overconfidence or misguide investments.

How Does SWE-bench Verified Actually Work?

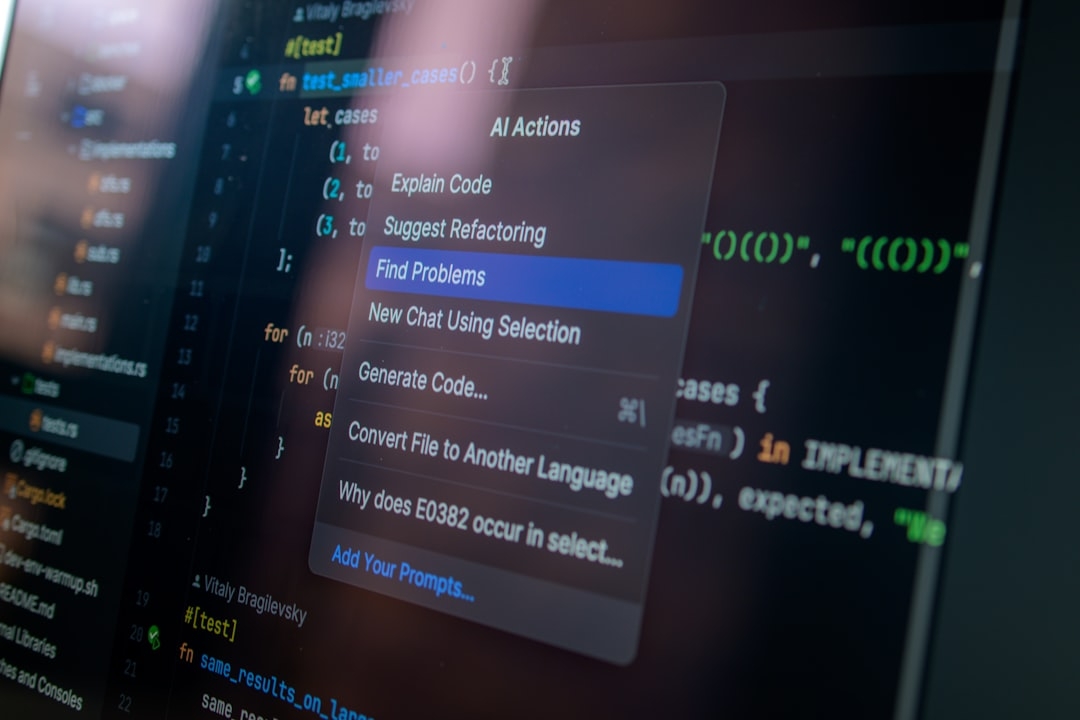

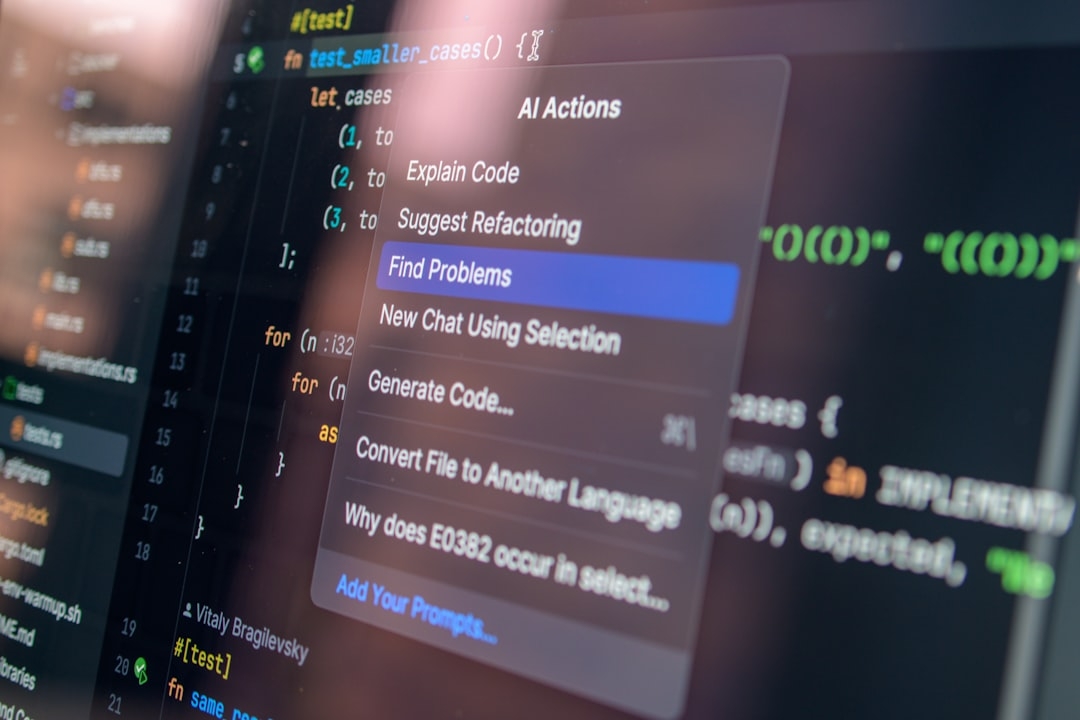

The benchmark presents a set of programming tasks that models attempt to solve. Each task gives the model a repository and an issue description; the model must produce a code change (a patch) that satisfies the stated requirements and passes automated tests verifying correctness.

Key to its method is scoring based on passing test cases and adherence to problem constraints. The processes underlying SWE-bench Verified include:

- Automated test execution to validate generated code

- Task difficulty annotations that help interpret results

- Comparison across models over time to track coding progress
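The test-based scoring described above can be sketched in a few lines. This is a minimal illustration, not the official harness: it assumes the common SWE-bench convention that a task counts as resolved only when the tests the fix is meant to repair now pass and the tests that already passed still pass. The `TaskResult` structure and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Test outcomes for one task after applying a model's patch (hypothetical structure)."""
    task_id: str
    fail_to_pass: dict[str, bool]  # tests that failed before the fix; True = passes now
    pass_to_pass: dict[str, bool]  # tests that passed before the fix; True = still passes


def is_resolved(result: TaskResult) -> bool:
    """A task is resolved only if it has target tests and every tracked test passes."""
    # Require at least one target test so an empty result never counts as a success.
    return (
        bool(result.fail_to_pass)
        and all(result.fail_to_pass.values())
        and all(result.pass_to_pass.values())
    )


def resolved_rate(results: list[TaskResult]) -> float:
    """Fraction of tasks resolved; 0.0 for an empty list."""
    if not results:
        return 0.0
    return sum(is_resolved(r) for r in results) / len(results)
```

Because the score depends only on test outcomes, a patch reproduced from memory and a patch derived by reasoning are indistinguishable to this metric.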

Although this process appears robust, we found systematic problems with test contamination and training data leakage that skew evaluation results.

What is training data leakage?

Training data leakage happens when benchmark test cases or their solutions inadvertently appear in the AI model’s training dataset, allowing it to memorize answers rather than demonstrate problem-solving ability. This inflates performance scores unfairly.
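A rough way to make this concrete is to measure how much of a benchmark solution appears verbatim in a candidate training document. The sketch below uses token n-gram overlap, which is one simple heuristic among many; the function names, the tokenizer, and the default n-gram length of 8 are assumptions chosen for illustration.

```python
import re


def token_ngrams(text: str, n: int = 8) -> set[tuple[str, ...]]:
    """Split text into word and punctuation tokens and return the set of n-grams."""
    tokens = re.findall(r"\w+|[^\w\s]", text)
    return {tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}


def overlap_ratio(reference: str, candidate: str, n: int = 8) -> float:
    """Share of the reference's n-grams that also occur in the candidate document."""
    ref = token_ngrams(reference, n)
    if not ref:
        return 0.0  # reference is shorter than n tokens, so nothing can be compared
    return len(ref & token_ngrams(candidate, n)) / len(ref)
```

A ratio near 1.0 means the reference solution is contained almost verbatim in the candidate; a ratio near 0.0 does not rule out leakage, because paraphrased or reformatted copies share few exact n-grams.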

Why Is SWE-bench Verified Increasingly Contaminated?

Because SWE-bench Verified tasks and their fixes are publicly available, they increasingly appear in the data that models are trained on. A contaminated model can reproduce memorized answers instead of genuinely solving the problems.

Flawed tests, including insufficiently challenging tasks and simplistic validation methods, compound the issue. They cannot distinguish memorized code from adaptive coding skill, which makes the benchmark an unreliable indicator in the current landscape.

Common Mistakes When Using SWE-bench Verified

Many teams make the critical error of taking SWE-bench Verified scores at face value. Common mistakes include:

- Overestimating coding progress: Interpreting high scores as breakthroughs when they may reflect training contamination.

- Neglecting test robustness: Ignoring the simplicity or obsolescence of test cases that fail to represent real-world challenges.

- Assuming uniform difficulty: Treating all benchmark tasks as equally representative of frontier coding problems.

These mistakes lead to misguided confidence and strategy decisions in model development.

When Should You Use SWE-bench Verified or Consider Alternatives?

Given these concerns, SWE-bench Verified may still offer value in controlled settings where contamination is minimized, or for initial diagnostic purposes. However, for accurate, contamination-resistant benchmarking, we recommend SWE-bench Pro.

SWE-bench Pro introduces more rigorous test curation, protects against training data leakage, and incorporates advanced validation approaches to better measure true coding ability.

How Can You Detect Whether Your Benchmark Results Are Contaminated?

One key indicator is an unusually rapid and large improvement in benchmark scores after only minimal model changes. Additionally, manually checking whether benchmark tasks or their solutions appear in training data sources can flag contamination.

Regularly refreshing benchmark datasets, adding unseen problem variants, and cross-validating results with independent tests also help prevent false positive progress signals.
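The first indicator, a large score jump between closely related model versions, is easy to monitor automatically. The sketch below is illustrative only: the function name `flag_score_jumps`, the version labels, and the default threshold of 0.10 (ten percentage points) are assumptions, and a sensible threshold depends on your own score history.

```python
def flag_score_jumps(
    history: list[tuple[str, float]],
    max_jump: float = 0.10,
) -> list[tuple[str, str, float]]:
    """Return (previous_version, version, jump) for each consecutive jump above max_jump.

    `history` is an ordered list of (version_label, score), with scores in [0, 1].
    """
    flagged = []
    for (prev_name, prev_score), (name, score) in zip(history, history[1:]):
        jump = score - prev_score
        if jump > max_jump:
            flagged.append((prev_name, name, round(jump, 4)))
    return flagged


# Hypothetical score history for three closely related model versions.
history = [("v1.0", 0.30), ("v1.1", 0.33), ("v1.2", 0.52)]
suspicious = flag_score_jumps(history)
```

A flagged jump is a prompt for a manual contamination check, not proof of contamination; a real capability gain can produce the same pattern.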

Expert Insights on SWE-bench Verified Flaws

Our direct experience running SWE-bench Verified on multiple model iterations revealed inflated results. Models seemed to solve tasks effortlessly, despite showing weaker performance in practical coding scenarios.

This discrepancy highlights the gap between benchmark results and real-world coding skill, underscoring the need for more reliable measurements.

Next Steps: Experiment to Verify Benchmark Integrity Yourself

To better understand these issues firsthand, we encourage you to audit your benchmark in a simple experiment:

- Select a random sample of SWE-bench Verified test problems.

- Search publicly available training datasets for identical or very similar solutions.

- Compare your model’s performance on these sampled tasks versus unseen problems of similar difficulty.

- Evaluate whether a large performance gap exists. A large gap suggests data leakage or contamination.

This 10-30 minute test offers a practical way to verify whether your use of SWE-bench Verified reflects genuine coding ability or memorization artifacts.
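The final comparison step can be sketched as a pass-rate gap with a rough significance check. This is a minimal sketch under stated assumptions: the function name is hypothetical, and the two-proportion z-test is one simple choice that is only approximate for small samples.

```python
import math


def pass_rate_gap(
    seen_passed: int,
    seen_total: int,
    unseen_passed: int,
    unseen_total: int,
) -> tuple[float, float]:
    """Return (gap, z): pass-rate difference (seen minus unseen) and its z statistic."""
    if seen_total <= 0 or unseen_total <= 0:
        raise ValueError("both task sets must be non-empty")
    p_seen = seen_passed / seen_total
    p_unseen = unseen_passed / unseen_total
    # Pooled pass rate under the null hypothesis that both sets are equally hard.
    pooled = (seen_passed + unseen_passed) / (seen_total + unseen_total)
    se = math.sqrt(pooled * (1 - pooled) * (1 / seen_total + 1 / unseen_total))
    z = (p_seen - p_unseen) / se if se > 0 else 0.0
    return p_seen - p_unseen, z


# Hypothetical counts: 40 of 50 sampled benchmark tasks pass, 20 of 50 unseen tasks pass.
gap, z = pass_rate_gap(40, 50, 20, 50)
```

A z value above roughly 1.96 suggests the gap is unlikely to be chance at the conventional 5% level. The result is only meaningful if the unseen problems really are of comparable difficulty; otherwise the gap may reflect task difficulty rather than memorization.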

In summary, increasing contamination and flawed test designs call into question the continued reliability of SWE-bench Verified for frontier coding evaluation. Transitioning to more robust alternatives like SWE-bench Pro will better support honest assessment and meaningful progress measurement.

We hope this clearer understanding helps the community refine its evaluation methods and drive meaningful advances in AI coding.
