Many people believe that AI advancements are all about increasing raw intelligence. While intelligence is important, Google’s Cloud AI shows it’s not the only factor that matters. In reality, AI models today must excel in three critical areas simultaneously: raw intelligence, response time, and extensibility.

These three frontiers define how useful AI is in practical applications. Understanding them helps set realistic expectations and guides users towards smarter adoption of AI technologies.

What Are the Three Frontiers of AI Model Capability?

Google’s Cloud AI pushes the limits on three frontiers:

- Raw Intelligence: This refers to the model’s ability to understand, generate, and reason with knowledge. Essentially, how smart the AI is at a fundamental level.

- Response Time: How quickly the AI can process input and generate output. In many real-world scenarios, speed is essential, especially for interactive applications.

- Extensibility: The third frontier involves how easily the AI can be adapted or extended to specific tasks, domains, or user needs without retraining from scratch. It reflects the model's flexibility and practicality.

Extensibility is the least discussed of the three, but it is critical for businesses and developers who want to customize AI solutions efficiently while maintaining high performance.

Why Is Raw Intelligence Alone Not Enough?

Many people expect AI models to simply get smarter and solve every problem effortlessly. However, intelligence without speed and adaptability is of limited practical use.

For example, a large language model might give very detailed answers but take several seconds or even minutes to respond. This delay is unacceptable for applications like customer support chatbots or real-time analytics.

Similarly, a highly intelligent AI that cannot be tailored to specific industries or tasks might not deliver value beyond general tasks, leading to wasted resources.

How Does Google’s Cloud AI Balance These Frontiers?

Google's Cloud AI offerings demonstrate a practical balance, showcasing strengths across these dimensions:

- High Intelligence: Leveraging vast datasets and advanced architectures, Google's models understand nuances in language, images, and data.

- Fast Response Times: Cloud infrastructure optimizations and model serving techniques keep latency low, which is crucial for live interactions.

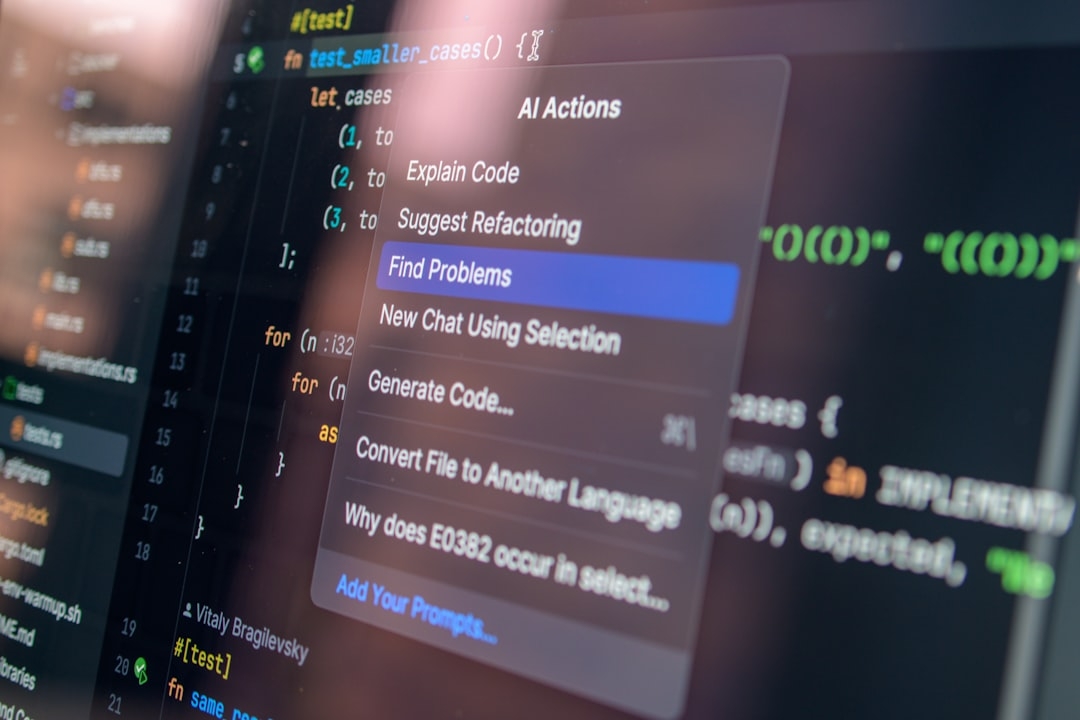

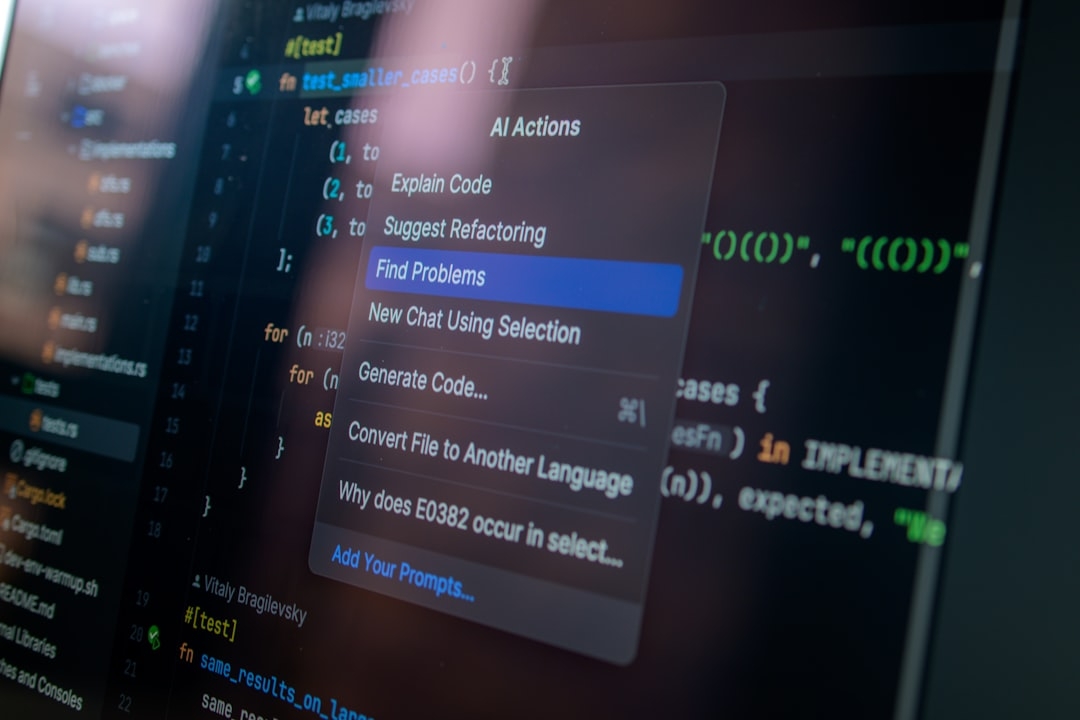

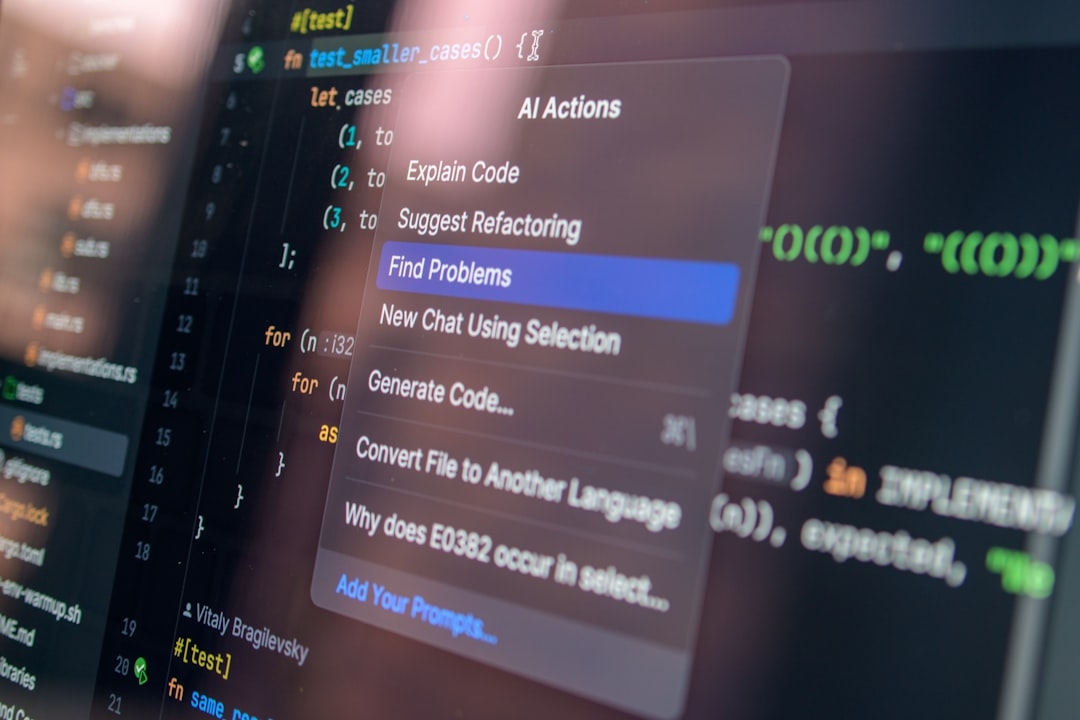

- Extensibility: Tools like customization APIs allow users to fine-tune or augment AI behavior suited to unique needs, often without full retraining.

This balance allows companies to deploy AI effectively, combining quality, speed, and customization.

When Should You Use Google’s Cloud AI for Your Projects?

If your project requires fast, intelligent AI responses and needs to be customized to a domain or workflow, Google’s Cloud AI is a strong candidate. For instance:

- Customer Service: Chatbots that answer complex user queries quickly while adapting to company-specific knowledge bases.

- Content Generation: Producing tailored marketing copy or code snippets that vary by style and domain demands.

- Data Analysis: Summarizing and interpreting vast amounts of data in near real-time.

In these cases, the blend of intelligence, speed, and extensibility supports practical outcomes that meet business expectations.

Where Does Google’s Cloud AI Fall Short?

Despite strong performance, there are trade-offs and limitations to consider:

- Cost and Complexity: High-quality, fast AI at scale involves substantial cloud infrastructure costs and technical expertise.

- Not Fully Open-Ended: Extensibility has bounds. Deep customization might still require significant effort or specialized knowledge.

- Latency Limits: While fast, some complex tasks inherently demand more compute time, causing delays that may impact user experience.

Understanding these boundaries helps manage expectations and guides better architectural decisions.

Are There Alternatives to Google’s Cloud AI for These Frontiers?

Several AI providers focus differently across the three frontiers:

- Raw Intelligence Focus: Some open-source models prioritize cutting-edge capabilities but may suffer in speed and ease of customization.

- Response Time Emphasis: Lightweight models that run locally can respond almost instantly, but with reduced intelligence.

- Extensibility Options: Certain platforms specialize in rapid fine-tuning but may not match Google's intelligence or speed.

Choosing the best AI depends on your project's priorities: raw power, speed, or flexibility.
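One way to make that choice explicit is to weight each frontier by how much it matters to the project and compare candidates on the combined score. The sketch below is only an illustration: the function name `weighted_score`, the candidate names, and every number in it are placeholders invented for the example, not measurements of any real provider.

```python
from typing import Dict

FRONTIERS = ("intelligence", "speed", "extensibility")


def weighted_score(scores: Dict[str, float], weights: Dict[str, float]) -> float:
    """Combine per-frontier scores (0 to 1) using weights normalized to sum to 1."""
    total = sum(weights[f] for f in FRONTIERS)
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(scores[f] * weights[f] / total for f in FRONTIERS)


# Placeholder scores, invented for illustration only; replace with your own measurements.
candidates = {
    "option_a": {"intelligence": 0.9, "speed": 0.4, "extensibility": 0.5},
    "option_b": {"intelligence": 0.6, "speed": 0.9, "extensibility": 0.6},
}

# A latency-sensitive project weights speed most heavily (assumed weights).
weights = {"intelligence": 1.0, "speed": 2.0, "extensibility": 1.0}

best = max(candidates, key=lambda name: weighted_score(candidates[name], weights))
print(best)
```

Changing the weights changes the winner, which is the point: the ranking reflects the project's priorities rather than any single benchmark.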

How Can You Test Google’s Cloud AI Frontiers Yourself?

A valuable experiment is to deploy a small Google AI-powered chatbot or content generator:

- Create a simple AI chatbot that answers questions related to your industry.

- Measure response time by recording delays during interactions.

- Customize the bot with your own knowledge or vocabulary using available APIs.

- Compare the quality of its answers against those of a general-purpose AI model.

This hands-on test reveals strengths and areas for improvement firsthand.
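The response-time step can be sketched in a few lines of Python. This is a minimal sketch under stated assumptions: `measure_latency` and `fake_bot` are hypothetical names, and `fake_bot` is a stand-in that simply sleeps. It does not call any Google API, so swap in whatever function sends a prompt to your own deployed bot.

```python
import math
import statistics
import time
from typing import Callable, Dict, List


def measure_latency(ask: Callable[[str], str], prompts: List[str]) -> Dict[str, float]:
    """Time each call to `ask` and summarize the delays in seconds."""
    delays = []
    for prompt in prompts:
        start = time.perf_counter()
        ask(prompt)
        delays.append(time.perf_counter() - start)
    delays.sort()
    # Nearest-rank 95th percentile of the sorted delays.
    p95_index = max(0, math.ceil(0.95 * len(delays)) - 1)
    return {
        "median": statistics.median(delays),
        "p95": delays[p95_index],
        "max": delays[-1],
    }


def fake_bot(prompt: str) -> str:
    """Hypothetical stand-in; replace with a call to your deployed chatbot."""
    time.sleep(0.01)  # simulated processing delay, not a real measurement
    return "stub answer to: " + prompt


if __name__ == "__main__":
    stats = measure_latency(fake_bot, ["What are your opening hours?"] * 5)
    print(stats)
```

Reporting the median alongside the 95th percentile matters for interactive use, because a few slow responses can hurt the experience even when the typical one is fast.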

What Common Misconceptions Should Be Avoided?

Don’t assume AI is magic. No AI currently excels on every front at once. Balancing intelligence, speed, and extensibility means accepting trade-offs.

Beware of one-dimensional benchmarks. An AI that scores well only on accuracy but is slow or inflexible might not serve real users effectively.

Customization is not always plug-and-play. Extensibility requires effort and likely some technical insight.

Approaching AI with clear expectations on these frontiers leads to smarter investments.

Final Thoughts on Google’s Cloud AI Capabilities

Google’s Cloud AI shows how modern AI must advance simultaneously on multiple fronts to be truly useful. Its strength lies in combining strong intelligence, low-latency response, and flexible customization.

While not without drawbacks, this three-front approach offers a balanced toolkit that suits many real-world applications better than models focusing on a single attribute.
