One common misconception in AI is that bigger models always mean better performance. Spanish startup Multiverse Computing challenges this idea with its latest release, HyperNova 60B. The model, now available for free on Hugging Face, is a compressed variant, yet the company claims it outperforms competing models, including those from well-known developers like Mistral.

Understanding what makes HyperNova 60B special requires looking at the role of compression in AI. Compression techniques aim to shrink a model while giving up as little performance as possible, which enables faster deployment and lower resource costs. That makes compression an important route to more accessible and efficient large-scale AI in real-world applications.

What Is HyperNova 60B and How Does It Work?

HyperNova 60B is a large language model developed by Multiverse Computing, a Spanish startup often described as a 'soonicorn', meaning a company expected to reach unicorn status (a valuation of at least $1 billion) soon. The 60B in the name indicates about 60 billion parameters, the internal weights the model learns during training.

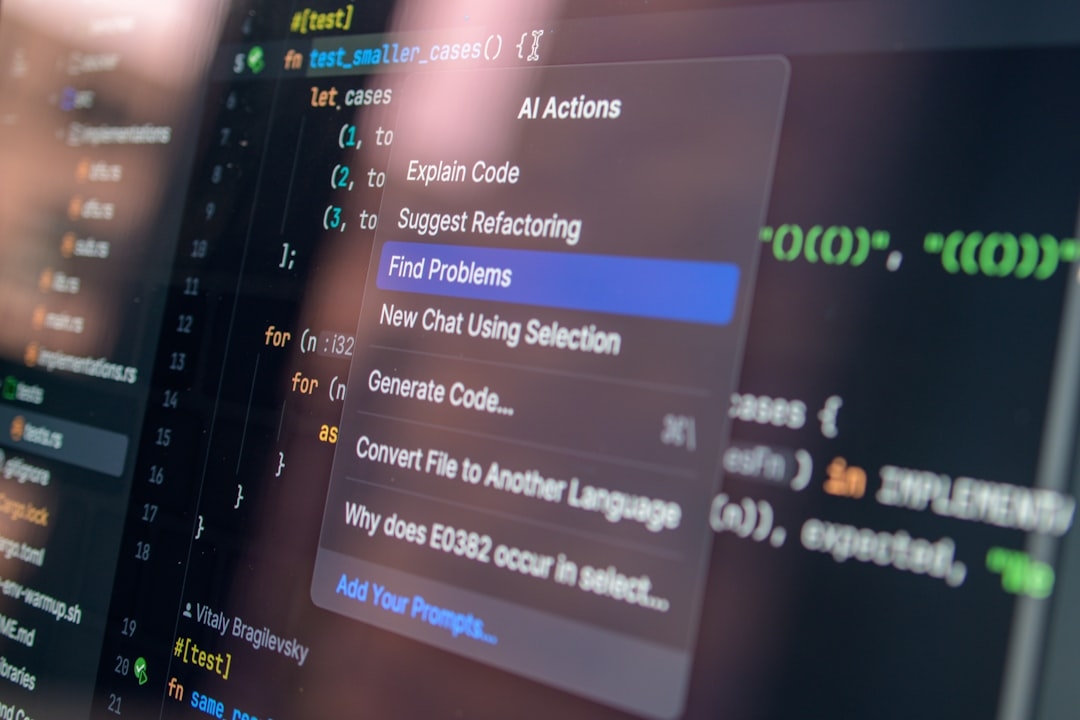

What sets the new version apart is its compression. By applying advanced compression techniques, Multiverse Computing says it has reduced the model’s file size and the computational resources needed to run it, without a meaningful loss of accuracy or speed. If that holds, developers and businesses can deploy a capable model on less powerful hardware.
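The article does not detail Multiverse Computing’s method, so the sketch below illustrates a different, generic way to shrink a layer: approximating a dense weight matrix with a truncated singular value decomposition (a low-rank factorization). It assumes NumPy and uses a synthetic matrix built to be close to low-rank; real model weights are not guaranteed to compress this cleanly, and this is not presented as Multiverse Computing’s technique.

```python
import numpy as np


def low_rank_factorize(weight: np.ndarray, rank: int) -> tuple:
    """Approximate weight (m x n) as a @ b, with a: m x rank and b: rank x n."""
    u, s, vt = np.linalg.svd(weight, full_matrices=False)
    # Keep only the `rank` largest singular values and fold them into `a`.
    a = u[:, :rank] * s[:rank]
    b = vt[:rank, :]
    return a, b


def param_counts(m: int, n: int, rank: int) -> tuple:
    """Parameter count of the dense matrix vs. the two low-rank factors."""
    return m * n, rank * (m + n)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    m, n, rank = 512, 512, 32
    # Synthetic weight: exactly rank 32 plus a little noise (an assumption, not real weights).
    w = rng.standard_normal((m, rank)) @ rng.standard_normal((rank, n))
    w += 0.01 * rng.standard_normal((m, n))

    a, b = low_rank_factorize(w, rank)
    rel_err = np.linalg.norm(w - a @ b) / np.linalg.norm(w)
    dense, factored = param_counts(m, n, rank)
    print(f"params: {dense} -> {factored}, relative error: {rel_err:.4f}")
```

For a 512 × 512 matrix kept at rank 32, the parameter count drops from 262,144 to 32,768, an 8x reduction. How much accuracy survives in a real model depends on how much of each matrix’s energy sits in its top singular values.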

Compression in AI often involves trimming redundant information (pruning), merging weights, or quantizing values to fewer bits. These techniques can degrade a model’s performance, but according to benchmarks Multiverse Computing has shared publicly, HyperNova 60B holds up well and even surpasses uncompressed Mistral models.
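To make the last of those techniques concrete, here is a minimal sketch of symmetric per-tensor int8 quantization in NumPy. It illustrates the general idea only and is not the scheme Multiverse Computing uses; production quantizers typically work per channel or per group and calibrate on real data.

```python
import numpy as np


def quantize_int8(weights: np.ndarray) -> tuple:
    """Symmetric per-tensor quantization: map floats to int8 codes in [-127, 127]."""
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize_int8(q: np.ndarray, scale: float) -> np.ndarray:
    """Recover approximate float32 weights from int8 codes and the shared scale."""
    return q.astype(np.float32) * scale


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    w = rng.standard_normal((1024, 1024)).astype(np.float32)
    q, scale = quantize_int8(w)
    w_hat = dequantize_int8(q, scale)
    print(f"storage: {w.nbytes} bytes -> {q.nbytes} bytes")
    print(f"max abs error: {np.max(np.abs(w - w_hat)):.5f} (bound: {scale / 2:.5f})")
```

Each weight drops from 4 bytes to 1, and the rounding error per weight is bounded by half the scale factor. Because a single outlier weight inflates the scale for the whole tensor, outliers are a common source of quality loss, which is one reason finer-grained schemes exist.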

Why Does Model Compression Matter in AI?

AI models like HyperNova 60B can be huge and costly to run. A 60-billion-parameter model needs substantial memory and processing power, which has typically put models of this size within reach mainly of large tech firms with dedicated infrastructure.
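A quick back-of-the-envelope calculation shows why size matters. The sketch below estimates the memory needed just to hold 60 billion weights at common precisions. It assumes every parameter is stored, and it ignores activations, the KV cache, and framework overhead, all of which add to the real requirement.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    params = 60e9  # 60 billion parameters, as the "60B" name implies
    for label, bits in [("FP32", 32), ("FP16/BF16", 16), ("INT8", 8), ("4-bit", 4)]:
        print(f"{label:>9}: {weight_memory_gb(params, bits):6.1f} GB")
```

At 16-bit precision the weights alone come to 120 GB, and even at 4 bits they need 30 GB, more than many single consumer GPUs provide.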

Compression helps solve this by:

- Reducing Hardware Requirements: Smaller models need less memory and, depending on the technique, fewer computations during inference.

- Speeding Up Deployment: Compressed models load faster, enabling quicker testing and scaling.

- Lowering Energy Consumption: Efficient models reduce power use, crucial for sustainable AI.

Multiverse Computing’s decision to release the compressed model for free on Hugging Face makes it easier for startups and researchers without high-end hardware to experiment with a model of this class.

How Does HyperNova 60B Compare to Mistral’s Model?

Mistral is known for releasing efficient open-weight models, making it a notable point of comparison. According to Multiverse Computing, HyperNova 60B’s compression techniques enable it to outperform Mistral’s similarly sized models on various tasks, like language understanding and text generation.

This is a significant claim, given how competitive the field is for models used in everything from chatbots to content creation. If independent evaluations confirm that a compressed model edges out uncompressed competitors, that would challenge the assumption that compression means compromise.

When Should You Consider Using Compressed AI Models Like HyperNova 60B?

Compressed models are not always the right choice, especially if you have abundant compute resources. However, several scenarios benefit from them:

- Limited Hardware Availability: Deploy on edge devices, laptops, or modest servers.

- Rapid Prototyping: Faster download and load times help test ideas quickly.

- Cost-Effectiveness: Reduce cloud compute billing by using less-demanding models.

- Energy Efficiency: Useful for applications sensitive to carbon footprint.

Common Mistakes When Deploying Compressed AI Models

Using compressed AI models brings its own challenges. Some frequent mistakes include:

- Assuming Compression Always Degrades Quality: Not all compression methods cause significant performance drops; some, reportedly including those used by Multiverse Computing, largely preserve quality.

- Skipping Thorough Evaluation: Testing compressed models only on benchmarks can miss real-world weaknesses.

- Ignoring Task Fit: A compressed model may hold up well on some tasks and degrade on others; assuming one size fits all can lead to poor results.

Being aware of these pitfalls helps users maximize the benefits of models like HyperNova 60B.

How Can You Start Using HyperNova 60B Today?

Since the model is freely available on Hugging Face, you can download and test it at no charge, though you still need hardware capable of running it. Start by comparing it with your existing models to see how its compressed size affects speed and accuracy in your specific use case.
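One way to try the model locally is the Hugging Face `transformers` library. The sketch below assumes that the checkpoint loads with the standard `AutoModelForCausalLM` and `AutoTokenizer` classes and ships a chat template, that `transformers`, `torch`, and `accelerate` are installed, and that you have enough GPU or CPU memory. The repository ID in `MODEL_ID` is a guess, so confirm the exact name on the model’s Hugging Face page and check its model card for any recommended loading options.

```python
from typing import Optional

# Hypothetical repository ID: confirm the exact name on the model's Hugging Face page.
MODEL_ID = "MultiverseComputingCAI/HyperNova-60B"


def build_messages(prompt: str, system: Optional[str] = None) -> list:
    """Build a chat-style message list for the tokenizer's chat template."""
    messages = []
    if system:
        messages.append({"role": "system", "content": system})
    messages.append({"role": "user", "content": prompt})
    return messages


def load_model(model_id: str = MODEL_ID):
    """Download (on first use) and load the tokenizer and model."""
    # Imported here so build_messages() works without transformers installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    # device_map="auto" (requires `accelerate`) spreads layers over available
    # GPUs and CPU memory; torch_dtype="auto" keeps the checkpoint's dtype.
    model = AutoModelForCausalLM.from_pretrained(
        model_id, device_map="auto", torch_dtype="auto"
    )
    return tokenizer, model


def generate(tokenizer, model, prompt: str, max_new_tokens: int = 128) -> str:
    """Generate one chat reply for `prompt` with an already-loaded model."""
    inputs = tokenizer.apply_chat_template(
        build_messages(prompt),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Decode only the newly generated tokens, not the echoed prompt.
    new_tokens = output_ids[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)
```

Once the ID is confirmed, `tokenizer, model = load_model()` followed by `print(generate(tokenizer, model, "Summarize what model compression does in two sentences."))` prints one reply. Loading is split from generation so the model is loaded once and reused across many prompts.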

Integration typically involves evaluating:

- Performance on your dataset

- Resource consumption during inference

- Latency and throughput in your deployment environment
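The sketch below measures the last two items for any model wrapped as a plain Python function that takes a prompt and returns text (a hypothetical wrapper you write around your model or API client). It times requests one after another, so `requests_per_s` reflects sequential throughput only; batched or concurrent serving, and tokens per second, need a different setup.

```python
import math
import statistics
import time
from typing import Callable, Dict, Sequence


def measure_latency(
    generate_fn: Callable[[str], str],
    prompts: Sequence[str],
    warmup: int = 1,
) -> Dict[str, float]:
    """Time sequential calls to generate_fn and summarize latency and throughput."""
    if not prompts:
        raise ValueError("need at least one prompt")
    # Untimed warm-up calls absorb one-off costs such as lazy initialization.
    for prompt in prompts[:warmup]:
        generate_fn(prompt)

    latencies = []
    start = time.perf_counter()
    for prompt in prompts:
        t0 = time.perf_counter()
        generate_fn(prompt)
        latencies.append(time.perf_counter() - t0)
    total = time.perf_counter() - start

    latencies.sort()
    # Nearest-rank 95th percentile.
    p95 = latencies[math.ceil(0.95 * len(latencies)) - 1]
    return {
        "mean_s": statistics.mean(latencies),
        "p50_s": statistics.median(latencies),
        "p95_s": p95,
        "requests_per_s": len(prompts) / total,
    }
```

Running it with the same prompts against HyperNova 60B and your current model gives a like-for-like latency comparison on your own hardware.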

These factors help decide if HyperNova 60B fits your application needs better than larger, uncompressed models.

Practical Steps to Implement

- Visit the model’s page on Hugging Face.

- Download the weights, or use a hosted API endpoint if one is offered.

- Run benchmark tests tailored to your tasks.

- Analyze resource use and performance trade-offs.

- Iterate based on findings, possibly integrating hybrid approaches combining compressed and uncompressed models.
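For the step of running benchmark tests tailored to your tasks, a minimal sketch is a side-by-side exact-match score over a small labeled set drawn from your own data. Each model is passed as a plain prompt-to-text function (a hypothetical wrapper), and exact match only suits tasks with short, checkable answers such as classification or extraction; open-ended generation needs other metrics or human review.

```python
from typing import Callable, Dict, Sequence, Tuple


def normalize(text: str) -> str:
    """Case- and whitespace-insensitive form used for matching."""
    return " ".join(text.lower().split())


def exact_match_accuracy(
    generate_fn: Callable[[str], str],
    examples: Sequence[Tuple[str, str]],
) -> float:
    """Fraction of (prompt, expected) pairs whose normalized output matches."""
    if not examples:
        raise ValueError("need at least one example")
    hits = 0
    for prompt, expected in examples:
        if normalize(generate_fn(prompt)) == normalize(expected):
            hits += 1
    return hits / len(examples)


def compare_models(
    models: Dict[str, Callable[[str], str]],
    examples: Sequence[Tuple[str, str]],
) -> Dict[str, float]:
    """Score every model on the same examples so results are directly comparable."""
    return {name: exact_match_accuracy(fn, examples) for name, fn in models.items()}


if __name__ == "__main__":
    # Toy stand-ins; replace with wrappers around HyperNova 60B and your current model.
    examples = [
        ("Sentiment of 'great product'?", "positive"),
        ("Sentiment of 'broke in a day'?", "negative"),
    ]
    models = {
        "always_positive": lambda prompt: "Positive",
        "keyword_rule": lambda prompt: "negative" if "broke" in prompt else "positive",
    }
    print(compare_models(models, examples))
    # {'always_positive': 0.5, 'keyword_rule': 1.0}
```

Keeping the evaluation set fixed across models is what makes the scores comparable from one iteration to the next.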

What Are the Trade-Offs of Using HyperNova 60B?

It’s vital to recognize that no model is perfect. While compression reduces size and improves speed, it can introduce subtle compromises on edge-case data. For many typical applications the trade-off is likely to be small and worthwhile, but that is worth confirming on your own data.

The deciding factors usually include how you weigh speed against precision and what hardware you have. Multiverse Computing’s results suggest that, with the right approach, compression can deliver performance close to that of uncompressed models.

This development encourages the AI community to rethink the balance between scale and efficiency.

What Are Hybrid Solutions in AI Modeling?

Sometimes combining compressed and uncompressed models provides the best balance. For example, a compressed model handles most queries efficiently, while a heavier uncompressed model tackles complex or critical tasks.

This layered setup optimizes resource usage without sacrificing quality where it counts. Exploring such hybrid architectures could be a practical next step after testing HyperNova 60B.
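A minimal sketch of such a router follows. The `looks_complex` heuristic (long prompts, or prompts mentioning code, go to the larger model) is purely hypothetical and stands in for whatever classifier or rule suits your traffic. The router also records per-route latency so the effect of switching models stays visible.

```python
import time
from collections import defaultdict
from typing import Callable


def looks_complex(prompt: str, max_words: int = 50) -> bool:
    """Hypothetical heuristic: long prompts or ones mentioning code are 'complex'."""
    return len(prompt.split()) > max_words or "code" in prompt.lower()


class HybridRouter:
    """Send most prompts to a compressed model; escalate complex ones to a larger model."""

    def __init__(
        self,
        small: Callable[[str], str],
        large: Callable[[str], str],
        is_complex: Callable[[str], bool] = looks_complex,
    ):
        self.routes = {"small": small, "large": large}
        self.is_complex = is_complex
        self.latencies = defaultdict(list)  # route name -> list of seconds

    def __call__(self, prompt: str) -> str:
        route = "large" if self.is_complex(prompt) else "small"
        start = time.perf_counter()
        answer = self.routes[route](prompt)
        self.latencies[route].append(time.perf_counter() - start)
        return answer

    def share(self, route: str) -> float:
        """Fraction of all requests so far that went to `route`."""
        total = sum(len(v) for v in self.latencies.values())
        return len(self.latencies[route]) / total if total else 0.0
```

In practice the routing rule is the part to tune most carefully: send too much to the small model and quality drops, too much to the large one and the savings disappear.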

Common Mistakes in Hybrid Approaches

- Failing to properly route requests to the right model.

- Neglecting to monitor latency impacts from switching models.

- Overcomplicating the deployment, which drives up maintenance costs.

Get Hands-On: Your 20-Minute Setup Task

To put what you’ve learned into practice, download HyperNova 60B from Hugging Face and run a quick comparison with your current AI model. Focus on measuring inference speed and response quality on a small sample dataset you use regularly. The download itself runs to tens of gigabytes, so start it before the 20-minute clock.

This practical exercise will show whether compressed models like HyperNova 60B fit your needs, which can save development time and costs later.

By understanding Multiverse Computing’s approach, you challenge conventional wisdom, potentially opening doors to a more efficient AI-powered future.
