In the world of AI chatbots, visibility often shapes user adoption as much as technical capability does. This became evident when Anthropic's chatbot Claude unexpectedly rose to the No. 1 spot on the App Store, spurred largely by the media buzz surrounding the company’s tense negotiations with the Pentagon.

As someone closely following AI trends and testing emerging chatbot products, I witnessed Claude's rapid ascent firsthand. The situation offers valuable lessons on user perception, market dynamics, and the influence of public controversies on software adoption.

Why Did Claude Rise to No. 1? Exploring the Pentagon Dispute's Impact

The connection between Claude’s App Store success and Anthropic’s dispute with the Pentagon became a case study in how controversy can magnify attention. The company had been negotiating a multimillion-dollar contract with the U.S. Department of Defense to develop AI technologies, but talks fell through amid concerns about ethical oversight and deployment risks.

This high-profile disagreement thrust Anthropic into the spotlight and made both industry watchers and the public curious about Claude’s capabilities. The ensuing downloads arguably reflect a mix of genuine interest and a desire to evaluate an AI system entangled in national security debates.

What Makes Claude Stand Out Among AI Chatbots?

Claude is designed as a generative AI chatbot that can handle detailed conversations, generate creative content, and assist with complex tasks. Built by Anthropic, a company focused heavily on AI safety, Claude incorporates safeguards meant to reduce biases and prevent harmful outputs, both critical considerations in AI development.

Compared to popular alternatives, Claude often shines in discussions requiring thoughtful, nuanced responses. Testimonials highlight its ability to maintain context over long chats and its cautious approach when handling sensitive topics.

Technical Aspects of Claude’s Architecture

While the exact architecture remains proprietary, Claude is a large language model, similar in principle to the GPT family of models. Such models are trained on vast amounts of text and generate responses by repeatedly predicting what comes next. The term generative AI here refers to AI systems capable of producing human-like text from prompts.

Anthropic’s emphasis on AI ethics and safety is embedded through mechanisms like reinforcement learning from human feedback and rule-based filters, which aim to mitigate misinformation and toxicity risk.
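To make the idea of a rule-based filter concrete, here is a toy sketch in Python. It is not Anthropic's implementation, which is not public; the rule names, patterns, and the `check_output` function are all hypothetical and chosen only for illustration. Production systems rely on far richer policies than a pair of regular expressions.

```python
import re
from dataclasses import dataclass


@dataclass
class FilterResult:
    allowed: bool
    matched_rules: list[str]


# Hypothetical rules for illustration only (not taken from any real product).
RULES = {
    "credentials": re.compile(r"\b(password|api key)\s*[:=]\s*\S+", re.IGNORECASE),
    "email_address": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def check_output(text: str) -> FilterResult:
    """Flag a candidate response if it matches any rule pattern."""
    matched = [name for name, pattern in RULES.items() if pattern.search(text)]
    return FilterResult(allowed=not matched, matched_rules=matched)
```

A filter like this would sit after the model and before the user, passing through text that matches no rule and holding back anything that does. Its simplicity is also its weakness: fixed patterns miss paraphrases, which is one reason such filters are typically paired with learned approaches like the human-feedback training mentioned above.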

How Does the Pentagon Dispute Affect Claude’s Credibility?

Publicly, the Pentagon dispute raised questions about whether an AI like Claude can be trusted for sensitive or high-stakes applications. The defense sector’s caution underscores AI’s dual-use nature: it can be of great assistance, but it is also open to misuse.

This event inadvertently became a stress test for Claude's image. While some critics interpreted the fallout as a sign of unpreparedness, others saw Anthropic’s principled stance as a positive sign that ethical considerations remain a priority over lucrative contracts.

Where Claude Performs Well

- In-depth conversational AI use cases that require context retention and nuance

- Applications prioritizing safety and ethical guardrails

- Creative writing and brainstorming support for individuals and teams

Where Claude Faces Challenges

- Scaling enterprise applications that require real-time responses

- Handling specialized technical domains without domain-specific fine-tuning

- Overcoming public skepticism due to negative media attention

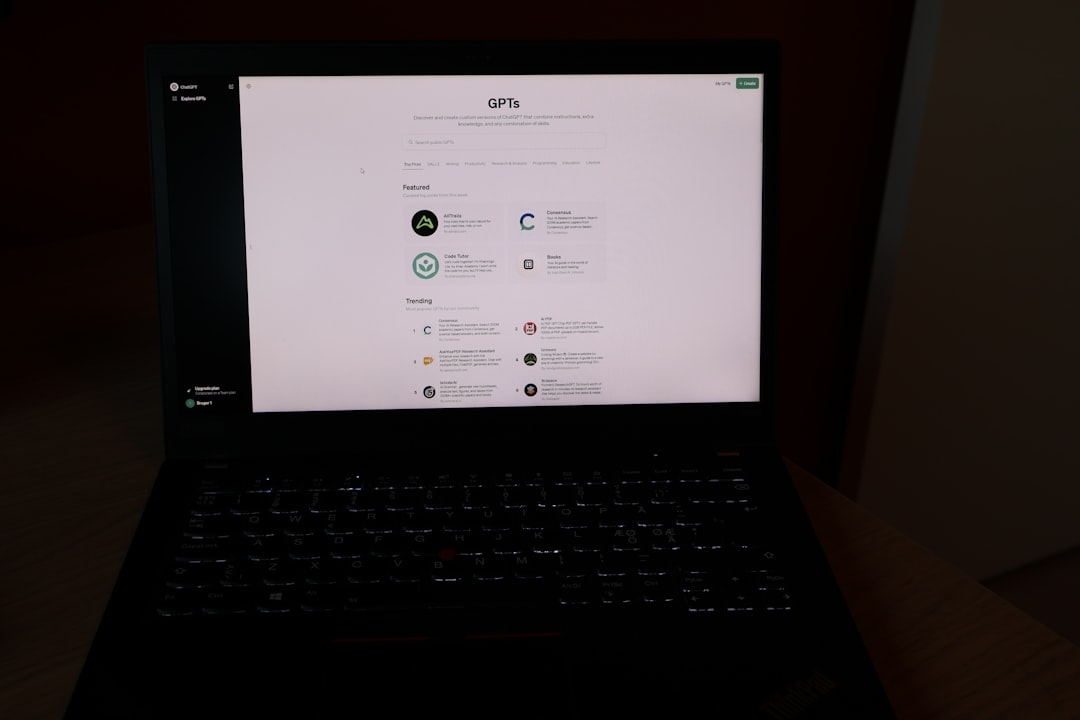

What Are the Alternatives to Claude?

For users evaluating chatbot options after Claude’s rise, alternatives like OpenAI’s ChatGPT, Google’s Gemini (formerly Bard), and Microsoft’s Copilot (formerly Bing Chat) offer competitive features. Each system has strengths and trade-offs related to response style, data privacy, and adaptability.

Choosing the right AI tool ultimately depends on specific needs such as integration capabilities, cost considerations, and tolerance for content risk.

Why Should You Care About This Story?

The Claude-Pentagon saga reveals that AI product success goes beyond pure technology. Public perception, ethical positioning, and contextual relevance drive adoption as much as capabilities do.

For developers and users alike, navigating the hype around AI requires balancing excitement with realism, recognizing that AI tools are evolving and imperfect.

Next Steps: Testing Claude Yourself

If you want to see what makes Claude tick, try engaging it with complex questions and observe how it handles sensitive or ambiguous topics. Pay attention to how it manages conversational context over multiple exchanges.

This kind of hands-on evaluation takes around 20-30 minutes and provides a grounded understanding beyond headlines.
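If you would rather script the context check than do it by hand, a minimal sketch might look like the following. It assumes you can wrap whatever chat client you use in a function that takes the message history and returns a reply; `run_context_probe` and `fake_chat` are hypothetical names, and `fake_chat` is only a stand-in so the example runs without network access.

```python
from typing import Callable

Message = dict[str, str]
ChatFn = Callable[[list[Message]], str]


def run_context_probe(
    chat: ChatFn,
    setup: str,
    distractors: list[str],
    probe: str,
    expected: str,
) -> tuple[bool, list[Message]]:
    """State a fact, send unrelated turns, then ask for the fact again."""
    history: list[Message] = []
    for user_text in [setup, *distractors, probe]:
        history.append({"role": "user", "content": user_text})
        reply = chat(history)  # the full history is sent on every turn
        history.append({"role": "assistant", "content": reply})
    final_reply = history[-1]["content"]
    return expected.lower() in final_reply.lower(), history


def fake_chat(history: list[Message]) -> str:
    """Stand-in model: recalls a codename stated in an earlier user turn."""
    last = history[-1]["content"]
    if "codename" in last.lower() and last.strip().endswith("?"):
        for msg in history:
            if msg["role"] == "user" and "codename is" in msg["content"]:
                return "The codename is " + msg["content"].split("codename is", 1)[1].strip(" .")
        return "I don't know."
    return "Noted."


passed, transcript = run_context_probe(
    fake_chat,
    setup="My project's codename is Heron.",
    distractors=["What is 2 + 2?", "Suggest a name for a cat."],
    probe="What is my project's codename?",
    expected="Heron",
)
```

Swapping `fake_chat` for a function that calls a real chatbot lets you vary the number of distractor turns and see where recall starts to slip. A substring match is a crude pass criterion, so read the transcript as well as the boolean.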
