It is tempting to think that creating a 3D scene from a simple language command is just a matter of placing existing objects in a space. RoboLayout shows that the process involves more than that: it uses differentiable methods to generate 3D layouts for embodied agents.

This matters because embodied AI agents (robots or virtual characters) need to understand and navigate real-world environments. Generating spatial contexts from open-ended language instructions isn’t trivial; it requires reasoning about object placements, interactions, and the physical layout of scenes.

What Exactly Is RoboLayout and How Does It Work?

RoboLayout is a framework that uses recent advances in vision language models (VLMs) to interpret natural language commands and produce differentiable 3D scene layouts. Differentiable here means that the quality of a layout can be expressed as a function with gradients with respect to layout parameters such as object positions, so the system can adjust its outputs incrementally by backpropagating errors, similar to how neural networks learn.
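To make the idea of a differentiable layout concrete, here is a minimal sketch in plain Python. It is not RoboLayout's actual implementation: the loss, the function names, and the 1 m target gap are all assumptions chosen for illustration. It shows a single chair position being pulled toward a target distance from a fixed table by gradient descent.

```python
import math

def distance_loss(chair, table, target=1.0):
    """Squared error between the chair-table distance and a target gap (assumed metres)."""
    dx, dy = chair[0] - table[0], chair[1] - table[1]
    return (math.hypot(dx, dy) - target) ** 2

def distance_grad(chair, table, target=1.0):
    """Analytic gradient of distance_loss with respect to the chair position."""
    dx, dy = chair[0] - table[0], chair[1] - table[1]
    dist = math.hypot(dx, dy) or 1e-9  # guard against division by zero
    scale = 2.0 * (dist - target) / dist
    return (scale * dx, scale * dy)

def optimize(chair, table, steps=100, lr=0.1):
    """Plain gradient descent on the chair position; the table stays fixed."""
    for _ in range(steps):
        gx, gy = distance_grad(chair, table)
        chair = (chair[0] - lr * gx, chair[1] - lr * gy)
    return chair

table = (0.0, 0.0)
chair = optimize((4.0, 3.0), table)
final_distance = math.hypot(chair[0] - table[0], chair[1] - table[1])
print(f"chair={chair}, distance to table={final_distance:.4f}")
```

Each step moves the chair a little along the direction that reduces the loss, which is the "incremental adjustment" described above. A real system would use an autodiff library and many more constraints, but the mechanism is the same.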

This approach allows RoboLayout to generate spatially coherent scenes, considering not just object identities, but their relationships and positions in 3D. Unlike older methods that relied on static templates or rigid rules, RoboLayout adapts to open-ended instructions.

It is particularly useful for helping embodied agents understand where objects should go, how spaces should be arranged, or how a room should look after an instruction has been followed.

Where Does RoboLayout Excel?

- Spatial Reasoning: RoboLayout uses VLMs to understand spatial language cues such as “next to,” “behind,” or “on top of,” making scene layouts more natural and accurate.

- Flexibility: It handles open-ended, diverse instructions rather than fixed tasks, which is crucial for real-world applications.

- Differentiable Design: Gradient-based optimization lets it refine generated layouts automatically, reducing the need for manual tweaking.

- 3D Scene Layout Generation: Produces realistic spatial arrangements that embodied agents can utilize for navigation and interaction.
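One way to picture how spatial phrases such as “next to,” “behind,” or “on top of” can feed a gradient-based optimizer is to turn each phrase into a penalty that is zero when the relation holds and grows as it is violated. The sketch below is an assumption for illustration only: the penalty formulas, the `RELATIONS` table, the object fields (`x`, `y`, `z` for the centre, `h` for height), and the convention that the viewer looks along +y are all hypothetical and not taken from RoboLayout.

```python
def next_to(a, b, max_gap=1.0):
    """Zero when the centres of a and b are within max_gap on the floor plane."""
    gap = ((a["x"] - b["x"]) ** 2 + (a["y"] - b["y"]) ** 2) ** 0.5
    return max(0.0, gap - max_gap) ** 2

def behind(a, b, margin=0.5):
    """Zero when a sits at least `margin` farther along +y than b (assumed view axis)."""
    return max(0.0, b["y"] + margin - a["y"]) ** 2

def on_top_of(a, b):
    """Zero when the bottom of a rests on the top of b with aligned centres."""
    bottom_of_a = a["z"] - a["h"] / 2
    top_of_b = b["z"] + b["h"] / 2
    offset = (a["x"] - b["x"]) ** 2 + (a["y"] - b["y"]) ** 2
    return (bottom_of_a - top_of_b) ** 2 + offset

# Hypothetical mapping from language cues to penalty functions.
RELATIONS = {"next to": next_to, "behind": behind, "on top of": on_top_of}

def layout_penalty(objects, constraints):
    """Sum the penalties of all (subject, relation, target) constraints."""
    return sum(RELATIONS[rel](objects[s], objects[t]) for s, rel, t in constraints)

objects = {
    "table": {"x": 0.0, "y": 0.0, "z": 0.375, "h": 0.75},
    "lamp": {"x": 0.0, "y": 0.0, "z": 1.0, "h": 0.5},
    "chair": {"x": 0.75, "y": 0.0, "z": 0.5, "h": 1.0},
    "shelf": {"x": 0.0, "y": 2.0, "z": 1.0, "h": 2.0},
}
constraints = [
    ("chair", "next to", "table"),
    ("shelf", "behind", "table"),
    ("lamp", "on top of", "table"),
]

good = layout_penalty(objects, constraints)  # every relation is satisfied
objects["lamp"]["z"] = 2.0                   # lift the lamp so it floats above the table
bad = layout_penalty(objects, constraints)
print(good, bad)
```

Because each penalty is smooth almost everywhere in the object coordinates, the summed score can be minimized with gradients rather than with hand-written placement rules.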

When Should You NOT Use RoboLayout?

Despite its innovations, RoboLayout is not a silver bullet for all 3D scene generation needs. It may struggle in:

- Highly Complex or Dynamic Scenes: Scenes with extreme complexity or rapidly changing objects may exceed its current spatial understanding capacities.

- Non-Spatial Instructions: Instructions focusing on object behaviors rather than placements are outside RoboLayout’s primary focus.

- Resource-Limited Environments: Because of its reliance on VLMs and differentiable computation, it can require significant computational power, making it less suitable for low-power devices.

How Does RoboLayout Compare to Traditional Scene Generation Techniques?

Older techniques often relied on rule-based systems or fixed templates, which limited the diversity of scenes or required extensive manual setup. RoboLayout’s use of differentiable models allows continuous improvement and adaptation, leading to more natural and realistic environments.

However, these gains come with complexity and computational costs. Some alternative solutions might use heuristic methods or simulation engines tailored for specific environments but lack RoboLayout’s flexibility.

What Are the Practical Steps to Implement RoboLayout?

To effectively use RoboLayout, you should:

- Gather diverse language instruction datasets related to your application domain.

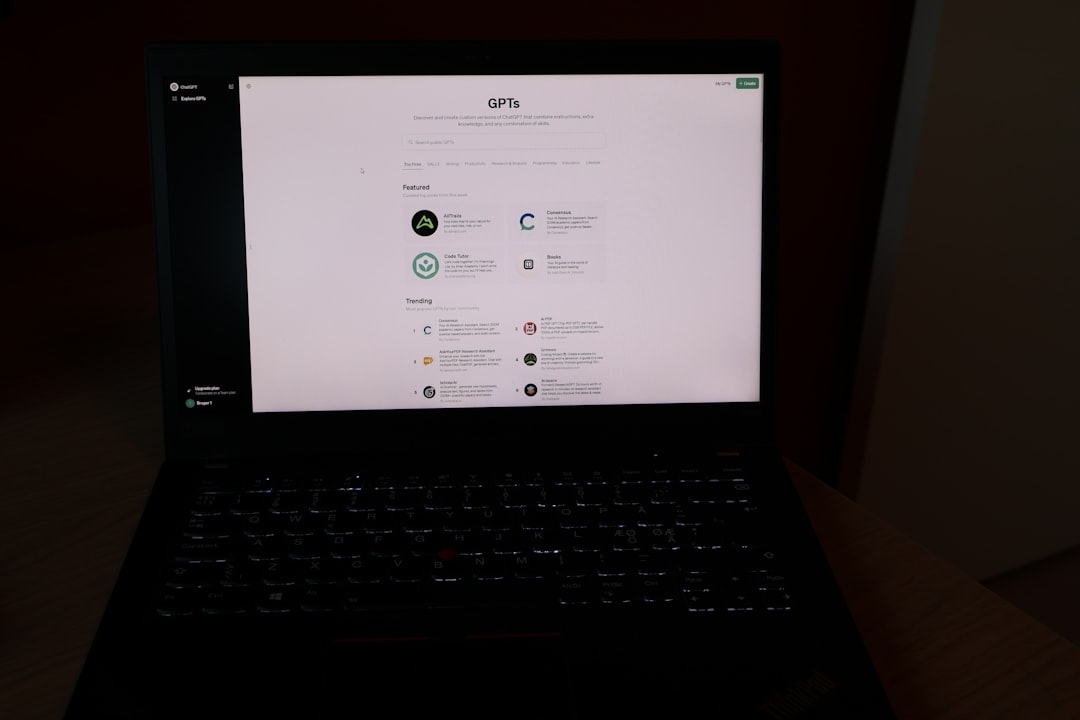

- Leverage pretrained vision language models capable of spatial reasoning.

- Set up differentiable scene generation pipelines to iteratively improve layout outputs.

- Validate results with embodied agents in simulation before real-world deployment.
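Before handing a generated layout to a simulator, a cheap sanity check can catch obviously invalid results. The sketch below is one possible pre-check, not part of RoboLayout itself: it assumes each object is an axis-aligned box described by a hypothetical `center` and `size` (both in metres) and reports every pair of boxes that interpenetrate.

```python
from itertools import combinations

def overlaps(a, b):
    """True when two axis-aligned boxes intersect on all three axes.

    Boxes that merely touch (for example a lamp resting on a table) do not count.
    """
    return all(
        abs(a["center"][i] - b["center"][i]) * 2 < a["size"][i] + b["size"][i]
        for i in range(3)
    )

def find_collisions(layout):
    """Return the names of every pair of objects whose boxes interpenetrate."""
    return [
        (name_a, name_b)
        for (name_a, box_a), (name_b, box_b) in combinations(layout.items(), 2)
        if overlaps(box_a, box_b)
    ]

# Illustrative layout; the values are made up for this example.
layout = {
    "table": {"center": (0.0, 0.0, 0.375), "size": (1.5, 1.0, 0.75)},
    "chair": {"center": (0.5, 0.0, 0.5), "size": (0.5, 0.5, 1.0)},
    "sofa": {"center": (3.0, 0.0, 0.5), "size": (2.0, 1.0, 1.0)},
}

collisions = find_collisions(layout)
print(collisions)
```

A check like this does not replace validation with an embodied agent in simulation; it only filters out layouts that are not worth simulating.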

When Should You Use RoboLayout?

If your project requires intelligent interpretation of natural language to generate 3D environments—such as in robotics, gaming, or AR/VR applications—RoboLayout offers valuable advantages over rigid or rule-based approaches.

Just ensure your infrastructure supports the computational demands, and your scenes don’t demand excessively complex or dynamic object behaviors currently outside RoboLayout’s scope.

What’s the Final Takeaway on RoboLayout?

RoboLayout marks a significant step forward by bridging natural language and 3D spatial understanding in embodied agents. It respects complex spatial relationships while refining its scene layouts through differentiable operations.

For AI developers and researchers, it’s a promising tool that blends language, vision, and spatial learning, moving embodied AI closer to a human-like understanding of environments.

Next Steps: Try a 20-30 Minute Implementation Debugging Task

Pick a simple instruction like “Put the chair next to the table.” Use a pretrained vision language model to translate it into a basic 3D layout representation, such as a position for each object. Then tune the output positions and observe how small, gradient-guided adjustments improve spatial coherence. This hands-on practice clarifies RoboLayout’s core strengths and challenges in real-world tasks.
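A possible starting point for this exercise is sketched below. The model call is stubbed out: `VLM_REPLY` is a hypothetical JSON string standing in for whatever your VLM returns, and the loss, the 0.8 m target gap, and the step size are assumptions. The script estimates gradients numerically, so you can swap in your own coherence score without deriving anything by hand.

```python
import json

# Hypothetical VLM reply for "Put the chair next to the table."
# In practice this string would come from a model call.
VLM_REPLY = '{"table": [0.0, 0.0], "chair": [3.0, 2.0]}'

def loss(chair, table, gap=0.8):
    """Squared error between the chair-table distance and an assumed target gap."""
    dist = ((chair[0] - table[0]) ** 2 + (chair[1] - table[1]) ** 2) ** 0.5
    return (dist - gap) ** 2

def numeric_grad(f, point, eps=1e-5):
    """Central finite-difference estimate of the gradient of f at point."""
    grad = []
    for i in range(len(point)):
        hi, lo = list(point), list(point)
        hi[i] += eps
        lo[i] -= eps
        grad.append((f(hi) - f(lo)) / (2 * eps))
    return grad

layout = json.loads(VLM_REPLY)
table, chair = layout["table"], list(layout["chair"])

history = [loss(chair, table)]
for _ in range(50):
    grad = numeric_grad(lambda p: loss(p, table), chair)
    chair = [c - 0.1 * g for c, g in zip(chair, grad)]  # small differentiable tweak
    history.append(loss(chair, table))

print(f"loss before: {history[0]:.3f}, after: {history[-1]:.2e}, chair: {chair}")
```

Try changing the step size or the starting position and watch `history`: a step that is too large makes the loss oscillate, which is a useful thing to debug within the 20-30 minutes.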

