The Journey to Real-Time AI Coding

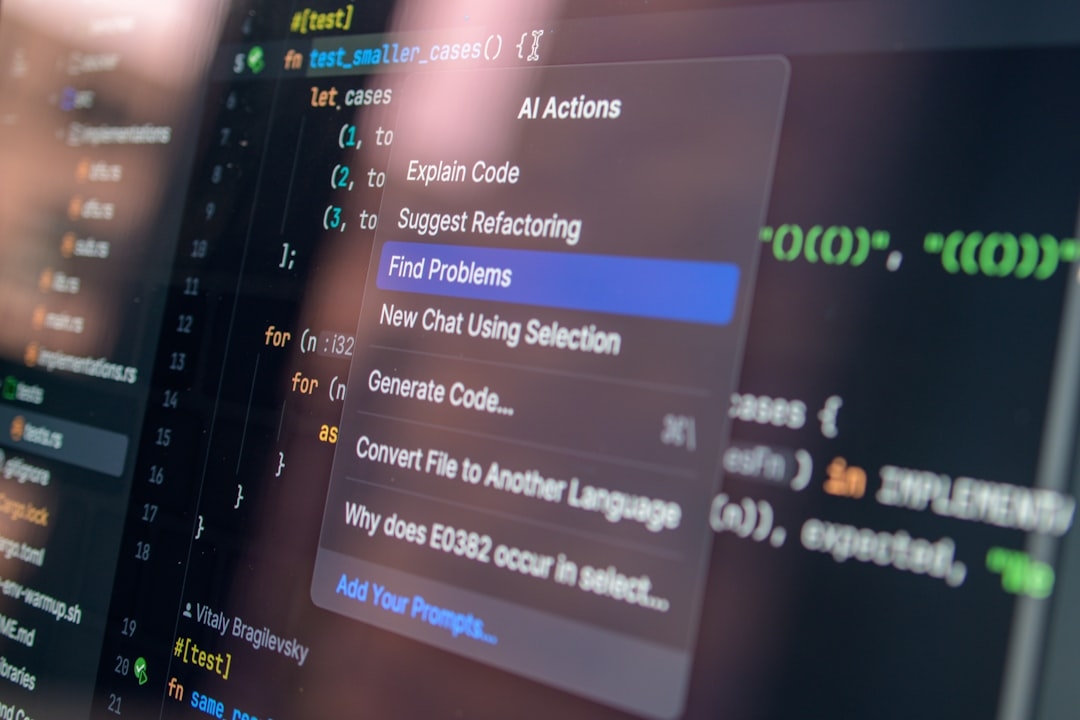

Traditional AI coding assistants, while powerful, have often struggled with speed and context limits. Developers frequently wait on generated code or completions, which breaks their concentration and momentum. GPT-5.3-Codex-Spark aims to close that gap with near-real-time code generation and a much larger context window.

This model is not just an incremental update. With 15 times faster generation and a 128,000-token context window, GPT-5.3-Codex-Spark aims to keep pace with a developer's natural coding flow more closely than earlier assistants. It is currently available as a research preview to ChatGPT Pro users, who can test its capabilities on real projects.

How does GPT-5.3-Codex-Spark work?

At its core, GPT-5.3-Codex-Spark is a large language model optimized for coding tasks. Its 128k-token context window holds roughly 96,000 words of English prose, using the common rule of thumb of about 0.75 words per token (actual counts depend on the tokenizer and on the text). That is enough to take in a large module or many related files in a single pass without truncation. Older models limited to a few thousand tokens forced developers to split code into pieces and lose context between them.
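To check whether a set of files is likely to fit in a 128k window, a rough character-based estimate is often enough. The sketch below assumes about 4 characters per token (a heuristic, not the model's real tokenizer) and reserves an arbitrary 8,000 tokens for the reply, on the assumption that output shares the window with the prompt. The helper names are illustrative.

```python
from pathlib import Path

CONTEXT_WINDOW_TOKENS = 128_000  # window size stated for GPT-5.3-Codex-Spark
CHARS_PER_TOKEN = 4              # ASSUMPTION: rough heuristic; real tokenizers vary, especially on code


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts: list[str], reserve_for_output: int = 8_000) -> bool:
    """Return True if the combined texts plus room for the reply fit in the window.

    ASSUMPTION: the reply shares the window with the prompt; the default
    reserve of 8,000 tokens is a placeholder, not a documented limit.
    """
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserve_for_output <= CONTEXT_WINDOW_TOKENS


def load_sources(root: str, suffix: str = ".py") -> list[str]:
    """Read every file under root whose name ends with suffix."""
    return [
        p.read_text(encoding="utf-8", errors="ignore")
        for p in Path(root).rglob(f"*{suffix}")
        if p.is_file()
    ]
```

For example, `fits_in_context(load_sources("src"))` gives a quick yes/no for a hypothetical `src` directory. For exact counts, use the tokenizer that matches the model rather than this approximation.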

The speed gains are attributed to architectural optimizations and more efficient use of GPU resources. The result is near-instantaneous output, which makes the interaction feel closer to pair programming with a human collaborator.
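To see what a 15x speed-up means at the keyboard, it helps to turn throughput into waiting time. The baseline rate and completion length below are hypothetical numbers chosen for illustration; only the 15x factor comes from the announcement, and the calculation ignores time to first token.

```python
SPEEDUP = 15  # generation speed-up factor cited for GPT-5.3-Codex-Spark


def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens at a steady rate (ignores time to first token)."""
    return num_tokens / tokens_per_second


baseline_tps = 50.0      # HYPOTHETICAL baseline throughput, for illustration only
completion_tokens = 600  # HYPOTHETICAL size of a medium-length function plus explanation

before = generation_seconds(completion_tokens, baseline_tps)
after = generation_seconds(completion_tokens, baseline_tps * SPEEDUP)
print(f"baseline: {before:.1f} s, {SPEEDUP}x faster: {after:.1f} s")
# prints "baseline: 12.0 s, 15x faster: 0.8 s"
```

Under these assumptions, a wait long enough to break concentration shrinks to under a second, which is the difference the "pair programming" comparison is pointing at.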

When should you use GPT-5.3-Codex-Spark over other models?

The model is best suited to work that needs both fast responses and broad context: complex debugging sessions, large-scale refactoring, or writing long functions that touch many parts of a codebase. Because the model can keep the relevant code “in mind”, the programmer has less to re-explain and track manually, which reduces cognitive load.

However, it may be overkill for small snippets or straightforward tasks, where simpler Codex variants suffice. If you work under tight compute budgets or in resource-constrained environments, the resource demands of GPT-5.3-Codex-Spark may also be a practical constraint.
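The trade-off above can be expressed as a simple routing rule. This is a hypothetical sketch: the model labels `"codex-spark"` and `"lighter-codex"` and the 2,000-token threshold for a "small" task are placeholders, not real API identifiers or published guidance.

```python
from dataclasses import dataclass


@dataclass
class Task:
    context_tokens: int       # estimated size of the code and instructions to send
    interactive: bool         # is a developer waiting on each response?
    budget_constrained: bool  # tight compute or spending limits


def choose_model(task: Task, small_task_tokens: int = 2_000) -> str:
    """Pick a model label for a task (HYPOTHETICAL labels and threshold)."""
    if task.budget_constrained:
        return "lighter-codex"  # resource demands outweigh the benefits
    if task.context_tokens > small_task_tokens or task.interactive:
        return "codex-spark"    # broad context or real-time feedback needed
    return "lighter-codex"      # small, non-interactive snippet
```

Putting the budget check first reflects the paragraph's point that resource limits can rule the model out even when a task would otherwise benefit from it.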

What failed in earlier AI coding assistants, and why?

Earlier AI coding assistants often faltered due to:

- Limited context windows: The models lost track of large code structures, which forced developers to work in fragments and repeat explanations.

- Slow response times: Waiting on the assistant interrupted the developer's workflow and reduced its usefulness.

- Poor adaptability: The models struggled to pick up project-specific conventions and code patterns.
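The fragmentation problem in the first item comes from having to pack code into window-sized pieces. The sketch below greedily groups source lines into chunks under a token budget, using the same rough 4-characters-per-token assumption; the 4,000-token window and the sample input are hypothetical, chosen to resemble an older model's limit.

```python
def chunk_lines(lines: list[str], max_tokens: int, chars_per_token: int = 4) -> list[list[str]]:
    """Greedily pack consecutive source lines into chunks under max_tokens.

    Per-line token cost is a rough character-based estimate (an assumption).
    A single line larger than max_tokens still gets a chunk of its own.
    """
    chunks: list[list[str]] = []
    current: list[str] = []
    used = 0
    for line in lines:
        cost = max(1, len(line) // chars_per_token)
        if current and used + cost > max_tokens:
            chunks.append(current)  # close this chunk; later chunks never see its contents
            current, used = [], 0
        current.append(line)
        used += cost
    if current:
        chunks.append(current)
    return chunks


# HYPOTHETICAL input: 1,000 lines of 40 characters (~10 tokens each), 4,000-token window
source = ["x" * 40] * 1_000
print(len(chunk_lines(source, max_tokens=4_000)))  # prints 3
```

Each chunk is sent on its own, so a function defined in the first chunk is invisible when the model works on the third. That is the lost context the list describes.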

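Simply scaling the token count without architectural improvements drove compute costs up sharply: standard self-attention compares every token with every other token, so its cost grows quadratically with context length. Conversely, speeding up generation without maintaining or expanding context produced shallow, inaccurate outputs.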
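The quadratic growth is easy to quantify. For standard dense self-attention, one layer scores n × n query-key pairs for n tokens, so a 16x longer context means 256x more attention work. The 8,000-token "short" context below is an illustrative comparison point, and the sketch says nothing about which attention variant GPT-5.3-Codex-Spark actually uses.

```python
def attention_pairs(n_tokens: int) -> int:
    """Query-key pairs scored by full (dense) self-attention in one layer: n^2."""
    return n_tokens * n_tokens


short, long = 8_000, 128_000
print(long // short)                                    # prints 16 (16x more tokens)
print(attention_pairs(long) // attention_pairs(short))  # prints 256 (256x more attention work)
```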

What finally worked with GPT-5.3-Codex-Spark?

The key change is pairing the large context window with much faster generation, reportedly enabled by improvements in transformer optimization and memory management. The result is a coding assistant that can draw on extensive project context while still responding almost instantly.

Real-time generation also supports uninterrupted coding sessions, much like working beside a seasoned programmer who spots issues, suggests improvements, and drafts code as you go.
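One reason memory management matters for long contexts is the key/value (KV) cache, which stores a key and a value vector for every token, at every layer, during generation. The sketch below applies the usual estimate of 2 × layers × KV heads × head dimension × bytes per value × tokens. The architecture numbers are hypothetical; this article does not state GPT-5.3-Codex-Spark's configuration or how its memory management works.

```python
def kv_cache_bytes(seq_len: int, n_layers: int, n_kv_heads: int, head_dim: int,
                   bytes_per_value: int = 2, batch: int = 1) -> int:
    """Memory to cache keys and values (the factor 2) for every layer and token."""
    return 2 * n_layers * n_kv_heads * head_dim * bytes_per_value * seq_len * batch


# HYPOTHETICAL architecture, for illustration only (16-bit values, so 2 bytes each)
cfg = dict(n_layers=48, n_kv_heads=8, head_dim=128, bytes_per_value=2)

full_window = kv_cache_bytes(128_000, **cfg)
print(f"{full_window / 2**30:.2f} GiB")  # prints "23.44 GiB"
```

Under these assumptions, a single full-length request needs over 20 GiB just for cached keys and values. This is why long-context serving depends so heavily on how that memory is managed.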

Practical Considerations

Before adopting GPT-5.3-Codex-Spark, consider:

- Compute costs: Larger models require more GPU power, which may raise expenses.

- Subscription availability: Currently limited to ChatGPT Pro users in research preview.

- Learning curve: Integrating a real-time assistant changes workflows and requires adjustment.

- Reliability: As a research preview, the model may show occasional glitches and limitations.

Key Takeaways for Developers

GPT-5.3-Codex-Spark represents a significant advance in AI-assisted coding, making context-heavy, real-time programming support practical. Its 128k-token context window and speed gains support longer, more continuous interactions than earlier coding assistants allowed.

However, the model demands more resources and is best suited to developers tackling sizeable projects or workflows that depend on immediate feedback. Those with simpler needs may find earlier Codex versions sufficient.

Decision Matrix: Should You Adopt GPT-5.3-Codex-Spark?

Take 20 minutes to assess your coding environment using this checklist:

- Do your projects require understanding of long, interconnected codebases?

- Is real-time code generation critical to your workflow?

- Do you have access to ChatGPT Pro and the necessary compute resources?

- Are you prepared to adapt your workflow for an AI assistant offering instantaneous support?

If you answered yes to most of these questions, GPT-5.3-Codex-Spark could substantially change how you work. If not, evaluate whether a lighter model meets your needs without the added cost and complexity.
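The checklist comes down to counting yes answers. The sketch below reads "most" as strictly more than half (three or four of the four questions), which is an interpretation rather than a rule stated in the checklist. The short labels and the returned strings are illustrative.

```python
CHECKLIST = (
    "long, interconnected codebases",
    "real-time generation is critical",
    "ChatGPT Pro access and compute",
    "ready to adapt workflow",
)


def recommend(answers: list[bool]) -> str:
    """Map yes/no answers (in CHECKLIST order) to a suggestion."""
    if len(answers) != len(CHECKLIST):
        raise ValueError(f"expected {len(CHECKLIST)} answers, got {len(answers)}")
    yes = sum(answers)  # True counts as 1
    # ASSUMPTION: "most" means strictly more than half of the questions
    if yes * 2 > len(CHECKLIST):
        return "try GPT-5.3-Codex-Spark"
    return "evaluate a lighter model"


print(recommend([True, True, True, False]))  # prints "try GPT-5.3-Codex-Spark"
```

A stricter variant could treat the access question as a hard prerequisite, since the model is currently limited to ChatGPT Pro users. A "no" there rules it out regardless of the other answers.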
