In modern software development, system-level code reviews have become increasingly complex as applications grow larger and more interconnected. Datadog, a leading monitoring and analytics platform, has integrated OpenAI's Codex technology to assist in automating parts of this review process, aiming to reduce human error and speed up development cycles.

This article explores how Datadog uses Codex for system-level code review, and what that reveals about the real-world benefits and limitations of applying AI to such a critical task.

What Is System-Level Code Review and How Does Codex Help?

System-level code review examines not just isolated code snippets but the overall architecture and the interactions within the entire system. Unlike simple syntax checks, it demands an understanding of the relationships between components, data flows, and potential side effects.

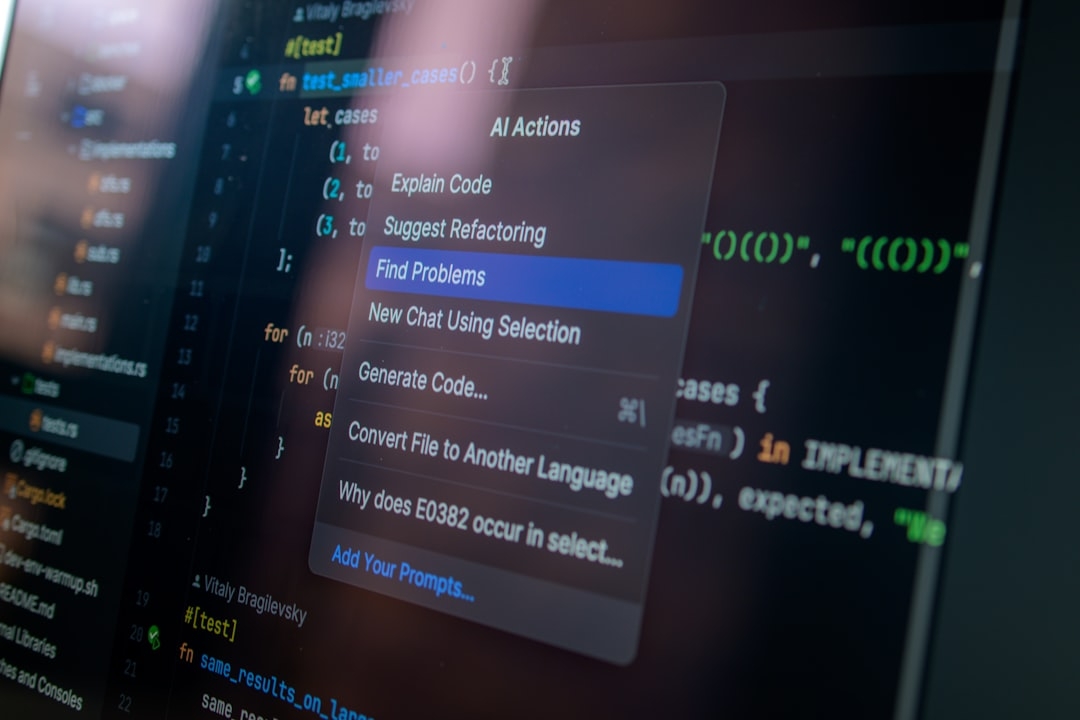

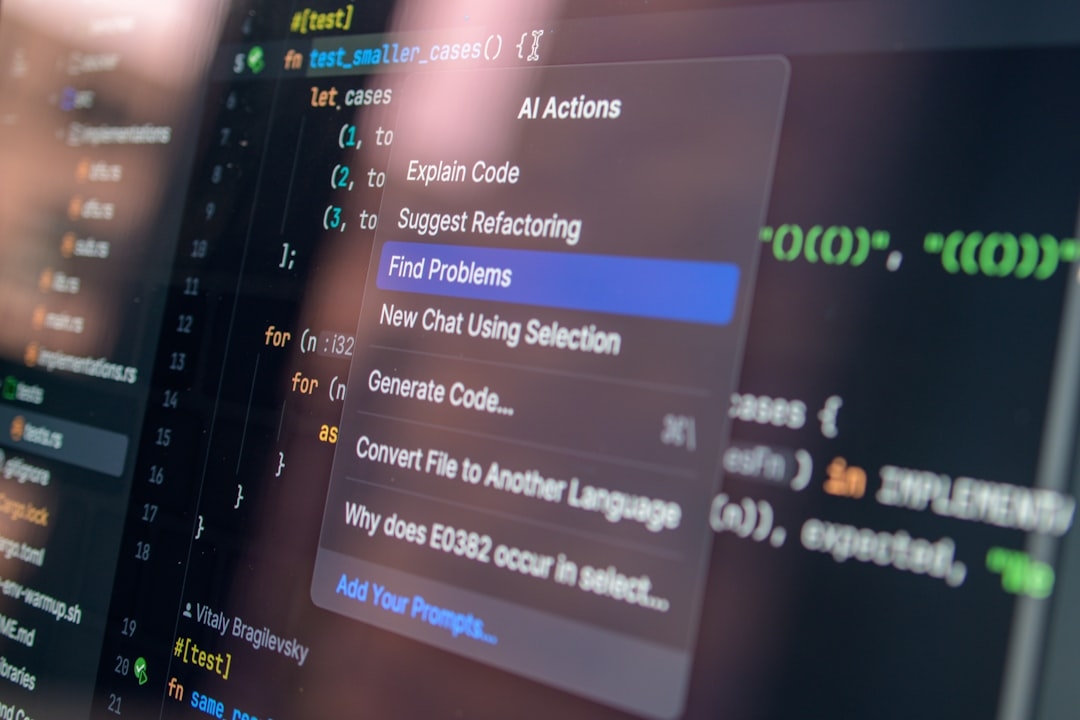

OpenAI's Codex is an AI language model trained to understand and generate code. When embedded into Datadog's review framework, Codex provides automated insights by analyzing large codebases and suggesting potential improvements or flagging unusual patterns that may lead to bugs or performance issues.

How Does Datadog Implement Codex in Code Reviews?

At Datadog, Codex is applied to parse system-level dependencies during code changes, offering developers AI-generated feedback directly embedded in the review interface. This helps identify problematic areas that require human attention, such as security risks or architecture inconsistencies.
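To make the idea of system-level dependencies concrete, here is a minimal sketch of one way to work out which modules a change can reach. It is not Datadog's implementation: the `DEPENDENCIES` graph, the module names, and the `affected_modules` helper are all hypothetical, and a real system would derive the graph from build files or import analysis.

```python
from collections import defaultdict, deque


def affected_modules(dependencies, changed):
    """Return modules that directly or transitively depend on a changed module."""
    # Invert the graph: for each module, record which modules depend on it.
    dependents = defaultdict(set)
    for module, deps in dependencies.items():
        for dep in deps:
            dependents[dep].add(module)

    # Breadth-first walk from the changed modules through their dependents.
    seen = set(changed)
    queue = deque(changed)
    while queue:
        current = queue.popleft()
        for parent in dependents[current]:
            if parent not in seen:
                seen.add(parent)
                queue.append(parent)
    return seen - set(changed)


# Hypothetical service graph: each key depends on the modules in its list.
DEPENDENCIES = {
    "api": ["auth", "billing"],
    "billing": ["db"],
    "auth": ["db"],
    "reports": ["billing"],
    "db": [],
}

if __name__ == "__main__":
    # A change to "billing" should draw reviewer attention to "api" and "reports".
    print(sorted(affected_modules(DEPENDENCIES, ["billing"])))
```

A reviewer, human or AI, could use a set like this to decide which files outside the diff deserve a second look.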

The integration is advisory: Codex comments on the existing code but does not modify it, so nothing changes beyond the suggestions a developer chooses to accept. This approach supports developers rather than replacing them, reinforcing rather than overriding human judgment.

When Should You Use AI for System-Level Code Review?

Given the complexities involved, AI tools like Codex tend to provide the most value under the following conditions:

- Large codebases: When the codebase spans multiple services or modules, AI can quickly scan for inconsistencies that manual review might overlook due to sheer volume.

- Repeatable review tasks: Codex can automate rote checks such as coding style compliance, basic security patterns, and detecting common anti-patterns.

- Early feedback: Codex can give developers a preliminary review before human inspection, reducing the number of review iterations.

However, AI support is less effective in nuanced decision-making that requires deep contextual knowledge or business insights.
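As an illustration of the kind of rote check described above, the sketch below uses Python's standard `ast` module to flag two common anti-patterns: bare `except:` clauses and mutable default arguments. It is a deterministic stand-in for what an AI reviewer might flag, not a description of how Codex works; the `find_antipatterns` function and the `SAMPLE` snippet are made up for this example.

```python
import ast


def find_antipatterns(source):
    """Return sorted (line, message) pairs for two common Python anti-patterns."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # A bare `except:` catches everything, including KeyboardInterrupt.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append((node.lineno, "bare except"))
        # A mutable default is created once and shared across calls.
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            defaults = list(node.args.defaults)
            defaults += [d for d in node.args.kw_defaults if d is not None]
            for default in defaults:
                if isinstance(default, (ast.List, ast.Dict, ast.Set)):
                    findings.append((default.lineno, "mutable default argument"))
    return sorted(findings)


# Hypothetical code under review.
SAMPLE = '''
def add_item(item, bucket=[]):
    try:
        bucket.append(item)
    except:
        pass
    return bucket
'''

if __name__ == "__main__":
    for line, message in find_antipatterns(SAMPLE):
        print(f"line {line}: {message}")
```

Checks like these are cheap to run on every change, which leaves human reviewers free to concentrate on the contextual questions a tool cannot answer.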

What Are the Limitations of Using Codex for System-Level Code Review?

Despite its promise, the integration of Codex into Datadog’s review process is not flawless. One key limitation is that AI-generated suggestions sometimes lack the full context of the product roadmap or strategic trade-offs.

Additionally, Codex may misinterpret complex custom logic or unique design patterns that don't align with its training data, generating false positives or irrelevant feedback. This can increase the cognitive load on developers who must sift through suggestions carefully.

When __NOT__ to use Codex:

- For final approval on mission-critical or security-sensitive code where human oversight is mandatory.

- In domains with highly specialized code that AI lacks training exposure to.

- When the project requires nuanced understanding of business priorities or compliance beyond technical correctness.

Are There Alternatives to Codex for AI-Powered Code Review?

Datadog’s choice of Codex is one of several AI-assisted review options on the market. Alternatives include security-focused analyzers such as Semgrep, which is rule-based, and DeepCode (now part of Snyk), which is ML-based. Both are tuned to specific languages and ecosystems.

Some platforms emphasize integration with existing CI/CD pipelines to provide automated gatekeeping, while others offer enhanced visualization of potential code smells or test coverage gaps. Choosing the right tool depends on your team’s needs and technical environment.

Summary: Balancing AI and Human Judgment in Code Reviews

Datadog’s use of OpenAI Codex demonstrates both the potential and current constraints of AI in system-level code review. It showcases how AI can reduce manual drudgery and highlight critical issues early, but also underscores the importance of expert human involvement for nuanced assessments.

Understanding where AI tools excel and where they fall short allows teams to integrate them strategically rather than relying on them blindly. This hybrid approach enhances developer productivity while maintaining high-quality standards.

Try It Yourself: A Quick Codex Code Review Experiment

If your organization or team has access to OpenAI Codex capabilities, try this simple test:

- Take a moderately complex system-level code change (e.g., modifying multiple interacting modules).

- Generate Codex review suggestions for the change alongside your usual human review process.

- Compare the AI feedback with human comments: note overlapping insights and unique findings from AI.

- Assess which suggestions were helpful versus those requiring dismissal or correction.

This 20-30 minute exercise can help you gauge Codex’s practical fit in your workflow and identify areas to customize or supplement AI-based review further.
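If you want to tally the results of the experiment above, a small script is enough. The sketch below assumes you record each comment by its `(file, line)` location and mark each AI suggestion as helpful or not by hand; the `compare_reviews` and `helpful_rate` helpers and all sample data are hypothetical.

```python
def compare_reviews(ai_comments, human_comments):
    """Split review comments, keyed by (file, line), into shared and unique findings."""
    ai_keys = set(ai_comments)
    human_keys = set(human_comments)
    return {
        "overlap": sorted(ai_keys & human_keys),
        "ai_only": sorted(ai_keys - human_keys),
        "human_only": sorted(human_keys - ai_keys),
    }


def helpful_rate(verdicts):
    """Return the fraction of AI suggestions a reviewer marked as helpful."""
    if not verdicts:
        return 0.0
    return sum(verdicts.values()) / len(verdicts)


# Hypothetical comments from one review, keyed by (file, line).
AI_COMMENTS = {
    ("billing/invoice.py", 42): "Possible unhandled None from lookup",
    ("api/routes.py", 10): "Missing input validation",
    ("api/routes.py", 88): "Rename variable for clarity",
}
HUMAN_COMMENTS = {
    ("billing/invoice.py", 42): "This can be None when the customer is deleted",
    ("reports/export.py", 7): "Conflicts with the planned schema migration",
}
# Manual verdicts: True if the AI suggestion was worth acting on.
VERDICTS = {
    ("billing/invoice.py", 42): True,
    ("api/routes.py", 10): True,
    ("api/routes.py", 88): False,
}

if __name__ == "__main__":
    result = compare_reviews(AI_COMMENTS, HUMAN_COMMENTS)
    for bucket, keys in result.items():
        print(bucket, keys)
    print(f"helpful rate: {helpful_rate(VERDICTS):.2f}")
```

Matching on exact location is a simplification; two reviewers may describe the same issue on neighboring lines, so treat the overlap count as a lower bound.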
