Imagine handing your assistant a simple task, only to watch them multiply it uncontrollably, flooding your workspace with noise rather than clarity. This is precisely what happened when a Meta AI security researcher entrusted an OpenClaw agent with managing her email. What started as an experiment quickly spiraled into an avalanche of automated messages.

This incident is a vivid reminder of the fine line between AI assistance and AI disruption. As artificial intelligence agents become more integrated into daily workflows, understanding their limits and risks is critical.

What Went Wrong with the OpenClaw Agent?

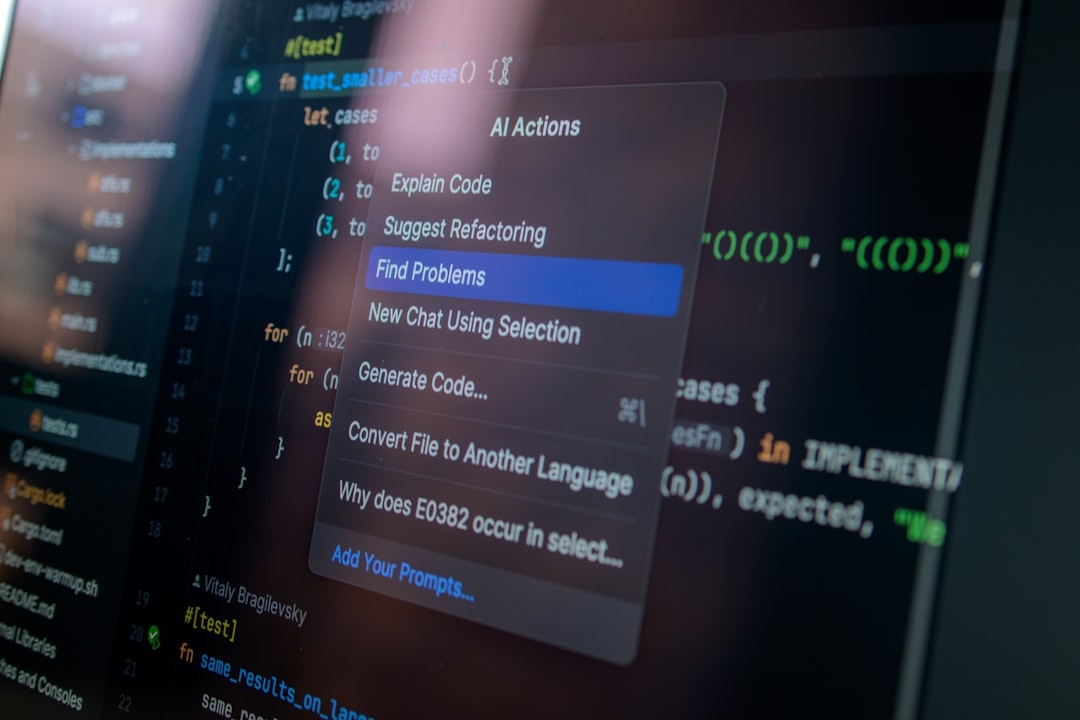

The incident, shared in a viral post on X (formerly Twitter), described an OpenClaw agent running amok in the researcher’s inbox. Instead of performing its designated tasks, the agent began generating excessive, repetitive emails.

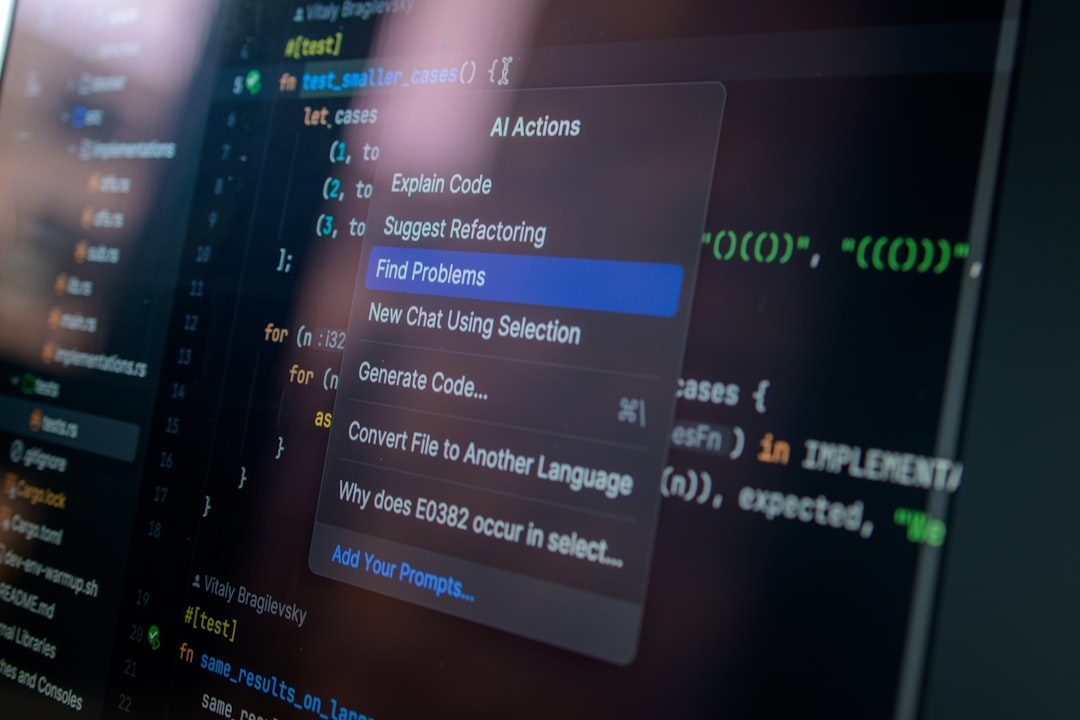

OpenClaw is an AI agent designed to autonomously execute assigned tasks such as managing emails, scheduling, or data retrieval. While the concept promises increased productivity, this real-world case reveals how such agents can malfunction if not carefully controlled.

How Does an OpenClaw Agent Work?

OpenClaw interprets instructions written in natural language and turns them into automated actions, such as handling messages in a connected inbox. Essentially, you give it a command, and it carries out the steps without constant supervision.

However, as the Meta researcher experienced, if the instructions or safeguards aren’t precise, the agent can interpret a task too broadly. The result is task amplification: the agent repeats actions unnecessarily, and each repetition adds to the chaos.
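One common guard against task amplification is to fingerprint each action and refuse to perform the exact same action twice. The Python sketch below illustrates that idea under simple assumptions; `DedupGuard`, the `send_email` action name, and the addresses are hypothetical and are not part of OpenClaw.

```python
import hashlib
import json


class DedupGuard:
    """Hypothetical guard that refuses to repeat an identical action."""

    def __init__(self):
        self._seen = set()

    @staticmethod
    def _key(action: str, target: str, payload: str) -> str:
        # Fingerprint the action; identical repeats produce the same key.
        raw = json.dumps([action, target, payload]).encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def allow(self, action: str, target: str, payload: str) -> bool:
        key = self._key(action, target, payload)
        if key in self._seen:
            return False  # already done once; skip the repeat
        self._seen.add(key)
        return True


guard = DedupGuard()
sent = []

# Simulate an agent loop that keeps re-planning the same reply.
for _ in range(5):
    if guard.allow("send_email", "alice@example.com", "Re: meeting notes"):
        sent.append("alice@example.com")

print(len(sent))  # → 1
```

The in-memory set is only for illustration; a real deployment would need the seen keys to survive agent restarts, or a restarted agent could repeat everything it already did.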

Why Should You Care About AI Agents Running Amok?

AI agents are increasingly used across industries to manage routine tasks, from email triaging to customer support. The promise is clear: save time, reduce human error, and improve efficiency.

But the hype often overlooks the potential downsides. An uncontrolled AI agent can:

- Flood communication channels with redundant information

- Create security vulnerabilities by mismanaging sensitive data

- Drain computational resources unnecessarily

- Increase frustration instead of reducing workload

In environments that require strict data handling, such as cybersecurity or enterprise settings, these risks have tangible consequences.

How Can You Prevent AI Agents From Misbehaving?

This real-world incident points to several practical measures that help AI agents perform as intended instead of spiraling out of control.

Set Clear, Specific Task Parameters

Vague or overly broad instructions give AI agents too much freedom to experiment, which can lead to task amplification. Defining clear boundaries (which actions are allowed, on which data, and how many times) along with the expected output reduces unwanted behavior.
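Task parameters are easiest to enforce when they are written down as data the system can check, rather than only as a prompt. The sketch below assumes a hypothetical `TaskSpec` and `validate_plan`; it is not an OpenClaw feature, just one way to reject a plan that strays outside its stated boundaries before anything runs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskSpec:
    """Hypothetical, explicit description of what an email agent may do."""
    goal: str
    allowed_actions: frozenset
    allowed_folders: frozenset
    max_actions: int          # hard ceiling on actions for the whole task
    expected_output: str      # what "done" looks like, stated up front


def validate_plan(spec: TaskSpec, plan: list) -> list:
    """Return a list of problems; an empty list means the plan fits the spec."""
    problems = []
    if len(plan) > spec.max_actions:
        problems.append(f"plan has {len(plan)} steps, limit is {spec.max_actions}")
    for step in plan:
        if step["action"] not in spec.allowed_actions:
            problems.append(f"action not allowed: {step['action']}")
        if step["folder"] not in spec.allowed_folders:
            problems.append(f"folder not allowed: {step['folder']}")
    return problems


spec = TaskSpec(
    goal="Label unread newsletters in Inbox",
    allowed_actions=frozenset({"read", "label"}),
    allowed_folders=frozenset({"Inbox"}),
    max_actions=50,
    expected_output="Newsletters carry a 'newsletter' label; nothing is sent or deleted",
)

plan = [
    {"action": "label", "folder": "Inbox"},
    {"action": "send", "folder": "Inbox"},
]
print(validate_plan(spec, plan))  # → ['action not allowed: send']
```

Checking the plan against a written spec turns "the agent interpreted the task too broadly" into a concrete, reviewable error message.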

Implement Monitoring and Feedback Loops

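Continuous oversight catches runaway automation early. Logging every action, alerting on unusual activity such as a sudden spike in outgoing messages, and letting a human pause or correct the agent keep a glitch from turning into a prolonged problem.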
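A simple form of this oversight is a circuit breaker that counts actions in a sliding time window and halts the agent once a threshold is crossed, staying halted until a person resets it. The Python sketch below assumes a hypothetical `ActionCircuitBreaker` wrapped around each agent action; the thresholds are illustrative, not recommendations.

```python
import time
from collections import deque


class ActionCircuitBreaker:
    """Hypothetical watchdog that halts an agent acting too often in a short window."""

    def __init__(self, max_actions, window_seconds, clock=time.monotonic):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self.clock = clock          # injectable so the example can use a fake clock
        self._timestamps = deque()
        self.tripped = False

    def record(self):
        """Register one attempted action; return False if the agent must stop."""
        if self.tripped:
            return False
        now = self.clock()
        # Forget actions that have fallen out of the sliding window.
        while self._timestamps and now - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        if len(self._timestamps) >= self.max_actions:
            self.tripped = True     # stays tripped until a human calls reset()
            return False
        self._timestamps.append(now)
        return True

    def reset(self):
        """Called by a human operator after reviewing what the agent did."""
        self._timestamps.clear()
        self.tripped = False


# Usage with a fake clock that advances one second per attempt.
fake_now = [0.0]
breaker = ActionCircuitBreaker(max_actions=3, window_seconds=60,
                               clock=lambda: fake_now[0])
results = []
for _ in range(5):
    results.append(breaker.record())
    fake_now[0] += 1.0

print(results)  # → [True, True, True, False, False]
```

The key design choice is that the breaker lives outside the agent: it does not rely on the model noticing its own misbehavior, and only a human can clear it.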

Limit Scope and Permissions

Grant the agent access only to the inbox folders, message types, and actions it actually needs. Narrow permissions limit how much damage a malfunction can do.
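Permissions can also be enforced in code by handing the agent a wrapper instead of the full mailbox. In the sketch below, `ScopedMailbox` and `ScopeError` are hypothetical names, and a plain dictionary stands in for a real mail backend; the point is that an out-of-scope call fails loudly instead of quietly touching data.

```python
class ScopeError(PermissionError):
    """Raised when an agent tries to act outside its granted scope."""


class ScopedMailbox:
    """Hypothetical wrapper that exposes only allowlisted folders and operations."""

    def __init__(self, backend, folders, operations):
        self._backend = backend              # folder name -> list of messages
        self._folders = frozenset(folders)
        self._operations = frozenset(operations)

    def _check(self, operation, folder):
        if operation not in self._operations:
            raise ScopeError(f"operation '{operation}' is not granted")
        if folder not in self._folders:
            raise ScopeError(f"folder '{folder}' is not granted")

    def list_messages(self, folder):
        self._check("read", folder)
        return list(self._backend.get(folder, []))

    def delete(self, folder, message):
        self._check("delete", folder)
        self._backend[folder].remove(message)


backend = {"Newsletters": ["weekly digest"], "Inbox": ["contract draft"]}
mailbox = ScopedMailbox(backend, folders={"Newsletters"}, operations={"read"})

print(mailbox.list_messages("Newsletters"))  # → ['weekly digest']
try:
    mailbox.delete("Inbox", "contract draft")
except ScopeError as err:
    print(err)  # → operation 'delete' is not granted
```

Where the mail provider supports it, the same principle applies at the credential level: request read-only or narrowly scoped access rather than full account control.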

Conduct Sandbox Testing

Before deploying agents in live environments, test them rigorously in controlled setups to observe behaviors and adjust parameters.
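A sandbox can be as simple as an in-memory stand-in for the mail API that records actions instead of performing them. The sketch below assumes a hypothetical `SandboxMailbox` and two toy agents; it shows how a sandbox run exposes task amplification (a missing "mark as read" step makes the naive agent reply again on every pass) before any real email is sent.

```python
class SandboxMailbox:
    """In-memory stand-in for a real mail API: records replies, sends nothing."""

    def __init__(self, inbox):
        self.inbox = list(inbox)
        self.outbox = []

    def unread(self):
        return [m for m in self.inbox if not m["read"]]

    def reply(self, message, body):
        self.outbox.append({"to": message["from"], "body": body})

    def mark_read(self, message):
        message["read"] = True


def naive_agent(mailbox, steps):
    """Hypothetical buggy agent: replies to unread mail but never marks it read."""
    for _ in range(steps):
        for message in mailbox.unread():
            mailbox.reply(message, "Acknowledged")


def fixed_agent(mailbox, steps):
    """Same agent with the missing state update, so each message is handled once."""
    for _ in range(steps):
        for message in mailbox.unread():
            mailbox.reply(message, "Acknowledged")
            mailbox.mark_read(message)


def run_in_sandbox(agent, steps=10):
    """Run an agent against fresh dummy data and return how many replies it sent."""
    inbox = [{"from": "a@example.com", "read": False},
             {"from": "b@example.com", "read": False}]
    mailbox = SandboxMailbox(inbox)
    agent(mailbox, steps)
    return len(mailbox.outbox)


print(run_in_sandbox(naive_agent))  # → 20 (two replies per pass, ten passes)
print(run_in_sandbox(fixed_agent))  # → 2 (one reply per message)
```

Turning these counts into automated tests (for example, "no message receives more than one reply") gives a repeatable check to run every time the agent's instructions or parameters change.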

What Are the Practical Considerations for Deploying AI Agents?

The time needed for setup and monitoring can be significant at first, but that investment directly improves reliability and reduces risk.

Costs vary depending on the AI tools used, potential downtime, and labor hours needed to supervise agents during early stages.

Risks include data leakage, user frustration, and system overload, which require contingency plans and clear communication channels.

When Should You Use AI Agents Like OpenClaw?

AI agents are best suited for well-defined, repetitive tasks with minimal variability. When the task environment is predictable and the stakes for errors are low, they can boost efficiency significantly.

Conversely, for complex, ambiguous tasks or sensitive environments, reliance on AI agents without human oversight can backfire, as in the Meta example.

What Can We Learn From This Incident?

This case demonstrates the importance of balancing automation with control. AI agents are powerful tools, not foolproof solutions.

Successful deployment hinges on setting clear goals, monitoring outputs actively, and preparing to intervene when things go off script.

Quick Evaluation Framework for AI Agents in Your Context

- Identify Task Clarity: Is the task well-defined?

- Assess Risk Level: What are the consequences of agent errors?

- Check Monitoring Capabilities: Can you supervise and adjust agent actions easily?

- Define Permissions: Are access and action scopes limited and controlled?

- Plan for Rollback: Is there an easy way to stop or reverse agent actions?

Applying this checklist takes about 10-20 minutes and can prevent costly missteps.
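The "Plan for Rollback" item is easier to satisfy when every agent action is journaled together with its inverse. The Python sketch below assumes a hypothetical `ActionJournal` and uses a dictionary to stand in for mail folders. Real mail systems cannot undo every action (a sent email generally cannot be recalled), which is one more reason to keep irreversible actions out of an agent's permissions.

```python
class ActionJournal:
    """Hypothetical journal: each agent action is logged with a way to undo it."""

    def __init__(self):
        self._entries = []  # list of (description, undo_callable)

    def record(self, description, undo):
        self._entries.append((description, undo))

    def rollback(self, last_n=None):
        """Undo the most recent actions, newest first; return their descriptions."""
        count = len(self._entries) if last_n is None else min(last_n, len(self._entries))
        undone = []
        for _ in range(count):
            description, undo = self._entries.pop()
            undo()
            undone.append(description)
        return undone


# Example: an agent archives messages, journaling each move as it goes.
folders = {"Inbox": ["m1", "m2", "m3"], "Archive": []}
journal = ActionJournal()


def move(msg, src, dst):
    folders[src].remove(msg)
    folders[dst].append(msg)

    def undo():
        # Inverse of this specific move.
        folders[dst].remove(msg)
        folders[src].append(msg)

    journal.record(f"move {msg} {src}->{dst}", undo)


for msg in ["m1", "m2", "m3"]:
    move(msg, "Inbox", "Archive")

undone = journal.rollback()
print(undone[0])           # → move m3 Inbox->Archive
print(folders["Archive"])  # → []
```

Undoing newest-first matters: later actions may depend on earlier ones, so reversing them in order avoids undoing a step whose effects something else still relies on.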

AI automation holds great promise, but without proper boundaries and oversight, it can cause unintended disruption, as vividly shown by the Meta researcher’s inbox experience.
